Enhancing Mission Analysis: Integrating Artificial Intelligence Into the Military Decision-Making Process

Abstract

This article details an experiment at the U.S. Army Command and General Staff College (CGSC) testing the integration of Artificial Intelligence (AI) agents, built on the Palantir Vantage platform, into Step 2 (Mission Analysis) of the Military Decision-Making Process (MDMP). A traditional 14-student human staff was compared against a two-student AI-augmented team using specialized AI personas (Overall, IPOE, Combined, and MA Brief agents) to generate running estimates, Intelligence Preparation of the Operational Environment (IPOE) products, problem/mission statements, and other key outputs. The experiment concludes that AI serves as a powerful cognitive partner for accelerating Mission Analysis, particularly in text-heavy tasks and filling expertise shortfalls, but requires human validation for realism, graphics, and final judgment to enhance commander decision-making in modern warfare.

Introduction

The evolving nature of modern warfare demands that military organizations not only adapt to new threats but also leverage emerging technologies to gain a decisive advantage. Artificial Intelligence (AI), particularly Large Language Models (LLMs), offers a promising avenue for enhancing the Military Decision-Making Process (MDMP). This article examines an experiment conducted at the Command and General Staff College (CGSC) to integrate AI into Mission Analysis (MA). Drawing parallels to recent applications in wargaming, the study tested two hypotheses: whether AI could generate MA products comparable in quality to those produced by human staffs, and whether AI personas could fill expertise gaps in specialized warfighting functions. Using the Palantir Vantage platform to develop AI agents, the experiment revealed significant successes in efficiency and text-based outputs, while underscoring the need for human oversight. These findings provide a blueprint for accelerating MA, ultimately enabling commanders to make faster, more informed decisions.

Experiment Setup: Traditional vs. AI-Augmented Approaches

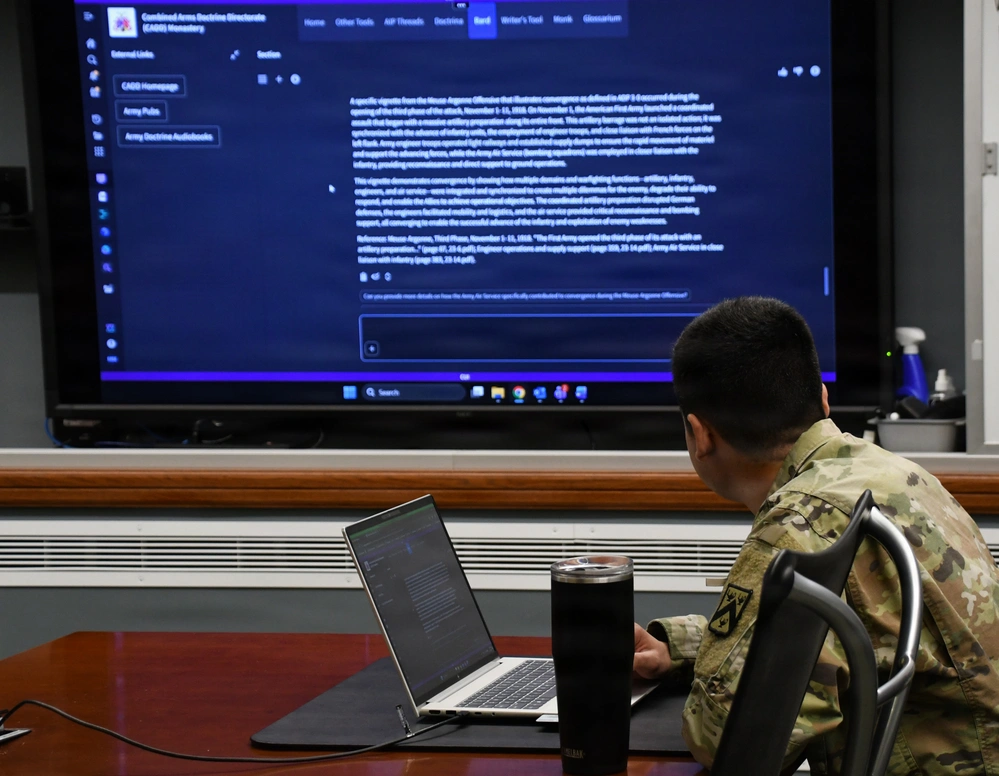

The experiment pitted a traditional human staff against an AI-assisted team to evaluate AI’s role in MA. A group of 14 students formed the human staff, executing MA using the standard doctrinal methods outlined in FM 5-0 and FM 3-0. They relied on their collective knowledge, the scenario documents (including the base order and annexes), and the commander’s guidance to produce running estimates, Intelligence Preparation of the Operational Environment (IPOE) products, and key outputs such as the problem and mission statements. This team had minimal AI support and relied primarily on manual analysis.

In contrast, a two-student team developed AI agents on the Palantir Vantage platform, a tool for building tailored AI solutions. Palantir Vantage facilitated the creation of AI personas (AIP agents) by allowing doctrinal documents, scenario data, and custom instructions to be integrated into LLMs. The platform’s ontology-based structuring, which converts raw documents into optimized, parsable formats, mirrored techniques used in prior wargaming experiments. It enabled efficient knowledge ingestion without overwhelming the model’s context window. The AI team aimed to produce a parallel MA brief, addressing both hypotheses through specialized agents.
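Ontology-based structuring can be approximated, very loosely, in two steps. First, split documents into labeled sections. Second, pass a model only the sections relevant to a query, within a fixed budget. The sketch below is a hypothetical illustration, not the Palantir Vantage ontology or its API. Several elements are assumptions made for clarity:

- the `Section` record;
- the heading convention (a line ending in a colon);
- the keyword-overlap ranking;
- the use of word counts as a stand-in for model tokens.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Section:
    """One parsable unit of a source document (hypothetical record type)."""
    source: str   # document name, e.g. "Base Order"
    heading: str  # section heading
    text: str     # section body, whitespace-normalized


def split_into_sections(source: str, raw: str) -> list[Section]:
    """Split raw text into sections, treating any line ending in ':' as a heading (assumed convention)."""
    sections: list[Section] = []
    heading, body = "Untitled", []
    for line in raw.splitlines():
        stripped = line.strip()
        if len(stripped) > 1 and stripped.endswith(":"):
            if body:  # close out the previous section before starting a new one
                sections.append(Section(source, heading, " ".join(body)))
            heading, body = stripped[:-1], []
        elif stripped:
            body.append(stripped)
    if body:
        sections.append(Section(source, heading, " ".join(body)))
    return sections


def _words(text: str) -> set[str]:
    """Lowercased alphanumeric words, used for naive keyword matching."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def select_context(sections: list[Section], query: str, budget_words: int) -> list[Section]:
    """Keep the sections sharing the most words with the query, without exceeding the word budget."""
    query_words = _words(query)

    def overlap(s: Section) -> int:
        return len(query_words & _words(s.heading + " " + s.text))

    chosen: list[Section] = []
    used = 0
    for s in sorted(sections, key=overlap, reverse=True):
        size = len(s.text.split())  # word count as a rough proxy for model tokens
        if overlap(s) > 0 and used + size <= budget_words:
            chosen.append(s)
            used += size
    return chosen


if __name__ == "__main__":
    order = "Situation:\nEnemy forces defend along the river line.\nMission:\nThe brigade attacks to seize the bridge."
    sections = split_into_sections("Base Order", order)
    print([s.heading for s in select_context(sections, "enemy river", budget_words=20)])  # ['Situation']
```

A real platform would likely use richer retrieval than keyword overlap. The point of the sketch is only that a scoped, budgeted context keeps the model from being handed every document at once.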

Developing AI Agents on Palantir Vantage

Agent development began with an assessment of warfighting functions, initially considering one agent per function. However, Palantir Vantage’s flexibility allowed consolidation into three core AIP agents, each with targeted roles, inputs, and outputs. This streamlined approach reduced complexity while maximizing coverage.

- Overall Agent: Serving as the lead, this agent ingested all scenario products, doctrinal references (e.g., FM 3-0 and FM 5-0), and the commander’s guidance. On Palantir Vantage, documents were converted into ontologies for rapid querying. The agent’s responsibilities included generating running estimates by warfighting function, identifying asset availability/shortfalls, constraints, Essential Elements of Friendly Information (EEFI), facts/assumptions, tasks (specified, implied, essential), and risks. Outputs were structured text, forming the foundation for broader MA products.

- IPOE Agent: Specialized in the intelligence warfighting function, this agent focused on IPOE processes per ATP 2-01.3. Its inputs were limited to Annex B (Intelligence), the base order, and relevant doctrine, so that the agent simulated focused intelligence expertise. Using Palantir Vantage, the agent produced detailed IPOE steps, including the Intelligence Collection (IC) plan, Modified Combined Obstacle Overlay (MCOO), key terrain analysis, Area of Operations/Area of Interest (AO/AOI), enemy Situation Template (SITEMP), event template, High-Value Target (HVT) list, intelligence gaps, IC requirements table, and overall enemy situation. This addressed the second hypothesis by filling potential intelligence expertise gaps.

- Combined Agent: Analogous to an executive officer (XO) or S3, this agent aggregated outputs from the Overall and IPOE agents. It synthesized data to generate a timeline, problem statement, mission statement, and proposed Course of Action (COA) evaluation criteria. Palantir Vantage enabled iterative refinement, allowing the agent to cross-reference inputs without redundant data uploads.

A fourth agent, the MA Brief Agent, was later added to compile all outputs into a cohesive brief. This agent lacked direct access to the original scenario documents and relied solely on the synthesized products to generate slides. Instructions for all agents were carefully crafted on Palantir Vantage, emphasizing doctrinal fidelity, unit focus, and key operational elements. To avoid bias, the instructions were generated using a separate LLM (Claude Sonnet 4.5), while the agents themselves ran on GPT-4.1-equivalent models. Instruction lengths varied; the Overall Agent’s were the most detailed, to ensure comprehensive coverage.
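The agent architecture above is essentially a staged pipeline with scoped inputs. The Overall and IPOE agents read different subsets of the scenario and doctrine. The Combined Agent reads only their outputs, and the MA Brief Agent reads only synthesized products. The sketch below shows that data flow under stated assumptions:

- the `Agent` class, `run_pipeline`, and the input key names are hypothetical;
- the instruction strings are placeholders, not the experiment’s actual instructions;
- the "model" is any function, standing in for an LLM call.

Because instructions are passed in as data, they could come from a different model than the one that executes them, as in the experiment.

```python
from dataclasses import dataclass
from typing import Callable

# Any function mapping (instructions, scoped context) to text. In the experiment this role was
# played by an LLM; here it is a hypothetical stand-in so the data flow can be run and inspected.
Model = Callable[[str, dict[str, str]], str]


@dataclass(frozen=True)
class Agent:
    name: str
    instructions: str        # placeholder text, not the experiment's actual instructions
    inputs: tuple[str, ...]  # the only store keys this agent may read

    def run(self, model: Model, store: dict[str, str]) -> str:
        missing = [key for key in self.inputs if key not in store]
        if missing:
            raise KeyError(f"{self.name} agent is missing inputs: {missing}")
        scoped = {key: store[key] for key in self.inputs}  # everything else is withheld
        return model(self.instructions, scoped)


# Input scopes follow the article's description; the key names themselves are hypothetical.
AGENTS = (
    Agent("overall", "Running estimates, tasks, facts/assumptions, EEFI, risks...",
          ("base_order", "annexes", "commander_guidance", "fm_3_0", "fm_5_0")),
    Agent("ipoe", "IPOE steps per ATP 2-01.3...",
          ("annex_b", "base_order", "atp_2_01_3")),
    Agent("combined", "Timeline, problem statement, mission statement, COA evaluation criteria...",
          ("overall", "ipoe")),
    Agent("ma_brief", "Compile all products into an MA brief...",
          ("overall", "ipoe", "combined")),  # no access to the original scenario documents
)


def run_pipeline(model: Model, scenario: dict[str, str]) -> dict[str, str]:
    """Run the agents in order; each output is stored under the agent's name for later agents."""
    store = dict(scenario)
    for agent in AGENTS:
        store[agent.name] = agent.run(model, store)
    return store


if __name__ == "__main__":
    # A stub "model" that reports which inputs it was shown, making the scoping visible.
    def show_inputs(instructions: str, context: dict[str, str]) -> str:
        return "|".join(sorted(context))

    keys = ["base_order", "annexes", "annex_b", "commander_guidance", "fm_3_0", "fm_5_0", "atp_2_01_3"]
    results = run_pipeline(show_inputs, {k: "..." for k in keys})
    print(results["ma_brief"])  # combined|ipoe|overall
```

Making each agent’s input scope explicit lets a reviewer confirm what a given product could and could not have been based on. For example, the brief agent demonstrably never saw the base order.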

Execution and Key Discoveries

The human staff completed MA in approximately 5.5 hours: 4.5 hours for analysis, 0.5 hours for slide creation, and 0.5 hours for rehearsals. The AI team, leveraging Palantir Vantage’s automation, finished in 2 hours (1 hour for agent setup and 1 hour for product generation), a gain of roughly 3.5 hours. The results highlighted both AI’s strengths and its limitations. AI excelled in text-based outputs: its problem and mission statements were clearer, more concise, and more closely aligned with doctrine than the human versions. The AI-generated statements synthesized complex inputs precisely, whereas the human team’s drafts were occasionally verbose. Running estimates and task identification were similarly robust, demonstrating AI’s ability to process large volumes of doctrinal material rapidly.
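Summing the reported phase durations directly is a useful check on the timing figures. The breakdown gives 5.5 hours for the human staff and 2 hours for the AI team, a difference of 3.5 hours. The snippet below makes that arithmetic explicit using only the phase durations stated in the text.

```python
# Phase durations in hours, as reported for each team.
human_phases = {"analysis": 4.5, "slide creation": 0.5, "rehearsal": 0.5}
ai_phases = {"agent setup": 1.0, "product generation": 1.0}

human_total = sum(human_phases.values())
ai_total = sum(ai_phases.values())
time_saved = human_total - ai_total

print(f"Human staff: {human_total} h")  # Human staff: 5.5 h
print(f"AI team: {ai_total} h")         # AI team: 2.0 h
print(f"Time saved: {time_saved} h")    # Time saved: 3.5 h
```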

However, visualization posed a challenge. The IPOE Agent produced accurate text descriptions (e.g., MCOO details and SITEMP narratives) but could not generate imagery such as maps or diagrams, which are critical for MA briefs. It could provide instructions for building the visuals, but this fell short of the human products, which included the supporting graphics themselves. This limitation reduced the IPOE section’s effectiveness: once visuals were factored in, it scored only 30% equivalence to the human outputs.

Overall, the AI brief achieved 60% equivalence to the human version, rising to 90% when visual-heavy slides were excluded. Missing elements, such as risk assessments, traced back to gaps in the agents’ instructions. This underscores the importance of prompt design, a skill akin to crafting clear orders.
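The article does not specify how per-slide equivalence ratings were combined into a brief-level score. One plausible reading, assumed here, is an unweighted mean of per-slide ratings, recomputed after dropping slides that depend on graphics. The `SlideRating` type and `brief_equivalence` function are hypothetical. The ratings in the example are toy values, not data from the experiment.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class SlideRating:
    slide: str
    equivalence: float  # 0-100, where 100 means an exact match to the human product
    visual_heavy: bool  # True if the slide depends on maps, overlays, or diagrams


def brief_equivalence(ratings: list[SlideRating], include_visual: bool = True) -> float:
    """Unweighted mean equivalence across slides, optionally excluding visual-heavy slides."""
    scores = [r.equivalence for r in ratings if include_visual or not r.visual_heavy]
    if not scores:
        raise ValueError("no slides left to score")
    return mean(scores)


if __name__ == "__main__":
    # Toy ratings for illustration only; these are NOT the experiment's slide scores.
    toy = [
        SlideRating("Mission statement", 90.0, False),
        SlideRating("Problem statement", 70.0, False),
        SlideRating("MCOO", 50.0, True),
    ]
    print(brief_equivalence(toy))                        # 70.0
    print(brief_equivalence(toy, include_visual=False))  # 80.0
```

A weighted scheme, for example one that counts the mission statement more heavily than a single template, would produce different numbers. The sketch shows only why excluding visual-heavy slides raises the score when those slides rate lowest.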

Assessment and Successes

On an equivalence scale where 100% denotes an exact match to the human products, Hypothesis 1 was partially validated. AI produced viable MA outputs, particularly in non-visual areas, and sometimes surpassed human quality in synthesis and clarity. Hypothesis 2 was affirmed for IPOE: the specialized agent effectively simulated expertise, identifying gaps and templates that aligned closely with the human analysis, albeit without visuals. These successes stemmed from Palantir Vantage’s capabilities: ontology structuring accelerated data handling, enabling agents to “reason” doctrinally without human intervention. This paralleled insights from the wargaming experiments, where simplified prompts yielded realistic outcomes. AI’s impartiality also surfaced hidden assumptions, challenging human biases and enhancing rigor.

Lessons Learned for Implementation

Several takeaways emerged for Army-wide adoption:

- Human-in-the-Loop Essential: AI augments, not replaces, judgment. Human validation ensures realism, especially for visuals and contextual nuances.

- Instructional Expertise Critical: Detailed, unbiased prompts are key. Units must train staffs in “AI tasking” to avoid omissions.

- Data Integrity Matters: Accurate inputs yield reliable outputs. Maintain up-to-date doctrine and scenario data in platforms like Palantir Vantage.

- Efficiency as a Force Multiplier: Time savings allow more iterations, deeper analysis, and reduced staff fatigue.

- Visualization Integration Needed: Future developments should incorporate AI tools for graphics generation to close this gap.
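Missing products such as risk assessments traced back to instructional gaps. One low-cost way to act on the “AI tasking” lesson is therefore to check each agent’s instructions against the products that agent is expected to deliver, before running it. The sketch below is a hypothetical keyword check built from the Overall Agent responsibilities listed earlier in this article. The patterns and the `missing_outputs` helper are assumptions. A passing check shows only that a product is mentioned, not that the instructions describe it well.

```python
import re

# Required Overall Agent products, taken from the responsibilities listed in this article.
# The regular expressions are illustrative assumptions, not a validated vocabulary.
OVERALL_REQUIRED = {
    "running estimates": r"running estimates?",
    "asset availability/shortfalls": r"shortfalls?",
    "constraints": r"constraints?",
    "EEFI": r"\bEEFI\b|essential elements of friendly information",
    "facts": r"\bfacts?\b",
    "assumptions": r"assumptions?",
    "specified tasks": r"specified tasks?",
    "implied tasks": r"implied tasks?",
    "essential tasks": r"essential tasks?",
    "risks": r"\brisks?\b",
}


def missing_outputs(instructions: str, required: dict[str, str]) -> list[str]:
    """Return the required products that the instruction text never mentions (case-insensitive)."""
    return [name for name, pattern in required.items()
            if not re.search(pattern, instructions, flags=re.IGNORECASE)]


if __name__ == "__main__":
    draft = ("Produce running estimates by warfighting function, asset availability and shortfalls, "
             "constraints, EEFI, facts and assumptions, and specified tasks, implied tasks, "
             "and essential tasks.")
    print(missing_outputs(draft, OVERALL_REQUIRED))  # ['risks']
```

Run before each product-generation pass, a check like this flags an omission such as risks while it is still cheap to fix in the instructions rather than in the brief.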

Skepticism

Critics rightly warn that over-reliance on AI agents in Mission Analysis carries three risks. The first is automation bias, in which staffs uncritically accept the model’s polished, doctrinally fluent outputs as objectively superior. The second is skill atrophy, as junior officers increasingly outsource the intellectual heavy lifting of running estimates, task analysis, and assumption vetting to LLMs. The third, and subtlest, is that AI’s structural coherence can mask unresolved priorities or command trade-offs that a human staff would surface through friction and debate. These concerns are legitimate in principle, particularly in high-stakes operational environments where over-trusting fluent but contextually shallow synthesis could erode collective professional judgment over time.

However, the safeguards emphasized throughout this experiment and article directly mitigate these risks by positioning AI as a rapid drafting and assumption-challenging tool rather than a decision authority. Those safeguards are rigorous human-in-the-loop validation, deliberate “AI tasking” training, and explicit retention of commander and staff oversight. Far from causing atrophy, well-designed integration can sharpen human skills by freeing staff from rote synthesis to focus on creative problem-framing, visual integration, and ethical judgment. These are precisely the higher-order functions that distinguish military professionals. Ultimately, treating AI outputs with the same healthy skepticism applied to any staff recommendation preserves the friction essential to a robust MDMP while harnessing the technology’s speed and consistency as a genuine force multiplier.

Conclusion

This experiment illustrates AI’s potential to accelerate Mission Analysis, transforming Step 2 of MDMP from a time-intensive process into a more efficient one. By developing AIP agents on platforms like Palantir Vantage, staffs can fill expertise gaps, such as in intelligence, or expedite tasks like running estimates and problem statement drafting. Successes in text-based synthesis and speed highlight AI as a cognitive partner, enabling rigorous, assumption-challenging analysis. However, human oversight remains indispensable for validation, visualization, and holistic judgment. As the Army operationalizes AI, investing in doctrine, training, and infrastructure can help make it a cornerstone of decision-making and a competitive edge in future conflicts.

(The views expressed in this article are those of the author and do not necessarily reflect the official policy or position of the Department of the Army, the Department of War, or the U.S. Government.)