C-UAS Operations: We Need a Single Pane of Glass


The Core Problem: Fragmentation When the Interface Becomes the Adversary

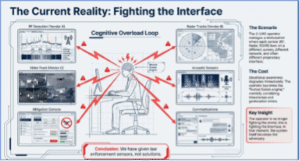

The counter-unmanned aerial systems (C-UAS) operator leaned over her workstation, eyes darting between a cluster of windows scattered across her graphical interface. Each pane represented a different data layer, a different sensor, a different fragment of the operational picture she was expected to hold together in real time. And that was only one monitor; several others demanded her attention as well. As she switched her focus between them, the degradation of situational awareness was immediate and unmistakable. What began as a manageable task with a single data stream quickly became cognitive overload when multiplied across six or more independent feeds. The operator was no longer fighting the drone; she was fighting the interface. In that moment, the system itself became the adversary. This is not an isolated observation. It is a systemic flaw that has quietly embedded itself into the fabric of modern C-UAS operations, and unless we confront it directly, we will continue to ask operators to perform the impossible. Layer six or more uncorrelated data streams on a single operator, and the result is operational paralysis. We have given local law enforcement sensors, not solutions. We must do better.

The following ideas and analysis are based on operational observation, system behavior, and first-principles reasoning about common command-and-control practice, rather than on a single study, product, or doctrine publication. While established concepts from joint operations, cybersecurity architecture, and human factors inform the discussion, the conclusions reflect an assessment of how C-UAS systems are currently deployed and operated in practice, particularly at the state, local, tribal, and territorial levels.

Why C-UAS is a Command-and-Control Challenge

Over the past several years, we have made significant progress in small C-UAS thought, technology, and operational approaches, but we still have a long way to go. The recent SAFER SKIES legislation is a positive step, yet it falls short in several critical areas. More importantly, it has introduced real confusion among state, local, tribal, and territorial (SLTT) and military stakeholders about which operations it authorizes and when, particularly for the mitigation mission.

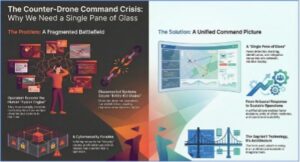

Law enforcement agencies in major metropolitan areas are doing their best to set the conditions for safer skies, as are military installations across the country. In practice, however, many are primarily tracking small drones using RF-based detection systems such as AeroScope, a system sold by the world’s largest drone manufacturer, DJI Technology, based in China. Newer detection systems are emerging that extend beyond proprietary DJI protocols and may eventually offer a full-spectrum signals capability, but the practical reality is that most mitigation today is not technological; it is procedural. Operators identify a drone and attempt to contact the pilot to ask them to land, while military installations still disagree on how to define the perimeter within which they have authority to act. In both cases, this is a compliance-based outcome, not a defeat mechanism. The approach works because the majority of drone contacts fall into the “clueless–careless” portion of the threat triangle: hobbyists, uninformed operators, or individuals unknowingly violating airspace restrictions. That is a good thing, but it will not always be the case. Systems, policies, and architectures built around this assumption collapse rapidly when confronted with deliberate, evasive, or autonomous threats. A malicious or technically competent actor will not respond to phone calls, door knocks, or verbal commands. The most concerning gap in current C-UAS operations is not a single sensor or legal authority; it is the absence of a unified visualization interface, which practitioners consistently refer to as a single pane of glass.

The Single Pane of Glass

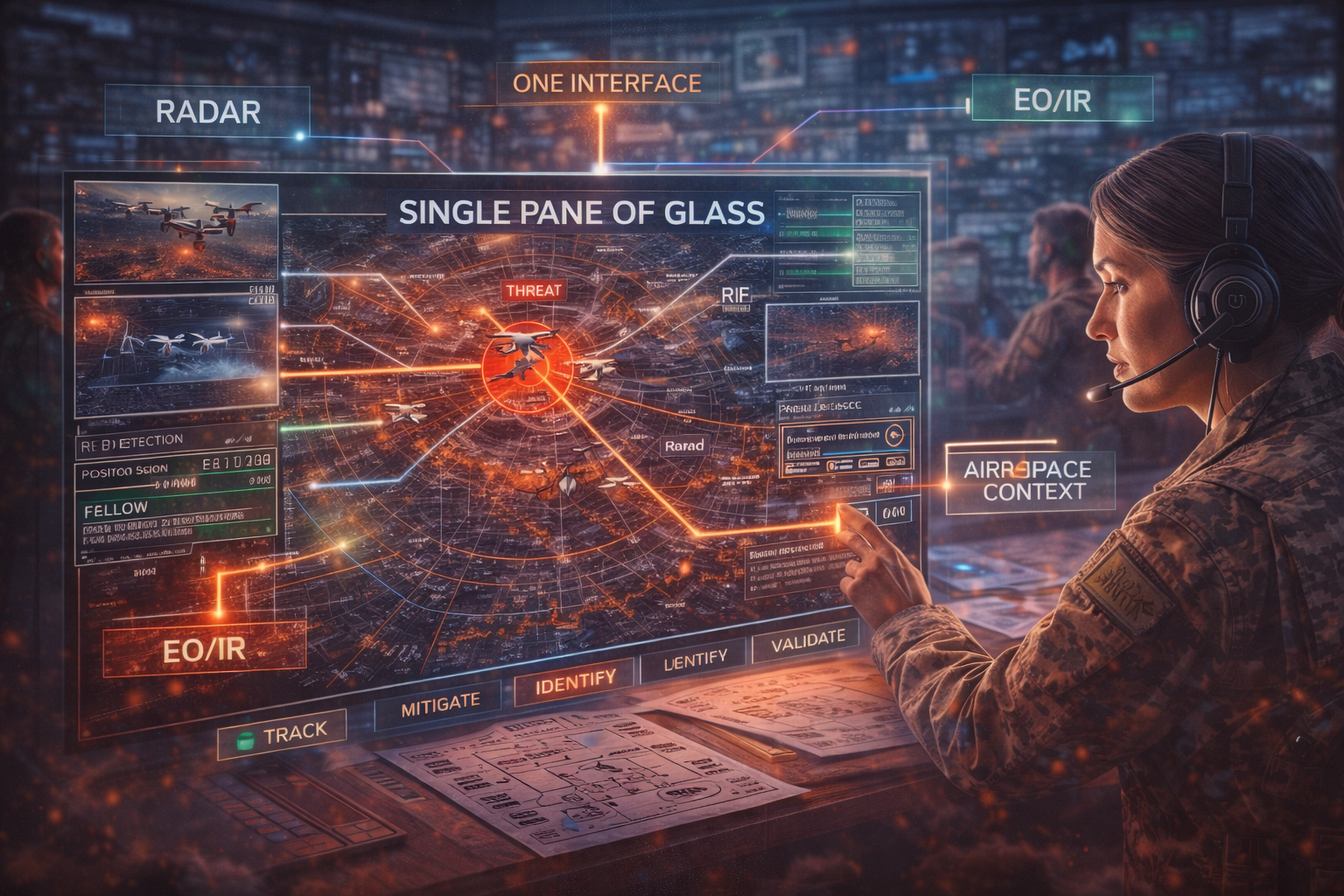

In essence, this is a one-stop operational environment that brings detection, identification, tracking, mitigation status, airspace context, and decision support into a coherent, intuitive display. In military terms, this is simply a Common Operating Picture (COP). Shockingly, many current C-UAS operations lack a single pane that brings all the tools together in one place for decision-making. Operators are often forced to manage multiple independent sensor systems, each with its own user interface, map symbology, alert logic, and update rate. RF detection appears on one screen. Radar tracks appear on another. Electro-optical/infrared (EO/IR) video feeds are displayed elsewhere. Mitigation tools, if authorized, are controlled through entirely separate consoles. The operator effectively becomes the fusion engine, mentally correlating timestamps, geolocation errors, track IDs, and confidence levels in real time.

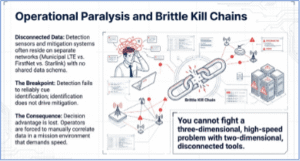

This is cognitively expensive in a mission environment that demands speed, clarity, and decisiveness. This fragmentation extends beyond visualization into data transport and communications architecture. Detection sensors and mitigation systems often reside on entirely separate networks—one on municipal long-term evolution (LTE), another on FirstNet (potentially), another on Starlink—with no shared data schema or latency guarantees. The outcome is not merely inefficiency, but fragile kill chains. Detection fails to reliably cue identification, identification does not consistently drive mitigation, and mitigation effects are not immediately visible in the operating picture.

This condition undermines core principles of command and control. Unity of effort collapses when systems operate in isolation rather than as a coherent whole. Decision advantage is lost when operators are forced into manual correlation and workarounds. System resilience degrades when mission success depends on parallel, disconnected communication paths instead of an integrated architecture. Meanwhile, training and sustainment demands grow disproportionally, as each additional interface requires separate expertise, certification, and ongoing proficiency.

Even more critically, the lack of a single pane of glass prevents scalable operations. A city managing a marathon, a stadium, and an airport simultaneously cannot afford bespoke sensor stacks and custom integrations at each site. Without a unified COP, C-UAS remains artisanal rather than operationalized. To be clear, this is not a technology availability problem. Sensors and effectors exist. Data fusion engines exist. What is missing is an architectural mandate—a requirement that C-UAS systems integrate into a unified command-and-control layer with standardized data models, common symbology, role-based access, and policy-aware decision support.
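To make "standardized data models" concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not a reference to any fielded product or published standard: the `Track` fields and both vendor message layouts are hypothetical, invented only to show how small adapters can convert differing units, ID schemes, and confidence scales into one shared record before anything reaches the display.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Track:
    """Hypothetical common track record shared by every sensor feed."""
    source: str               # sensor type, e.g. "rf" or "radar"
    track_id: str             # unique across sensors: source prefix + vendor ID
    time_s: float             # observation time, seconds since the Unix epoch
    lat_deg: float
    lon_deg: float
    alt_m: Optional[float]    # None when the sensor cannot report altitude
    confidence: float         # normalized to the range 0.0 to 1.0


def from_rf_vendor(msg: dict) -> Track:
    """Adapter for an invented RF message: time in ms, confidence in percent."""
    return Track(
        source="rf",
        track_id=f"rf-{msg['serial']}",
        time_s=msg["ts_ms"] / 1000.0,
        lat_deg=msg["lat"],
        lon_deg=msg["lon"],
        alt_m=None,
        confidence=msg["conf_pct"] / 100.0,
    )


def from_radar_vendor(msg: dict) -> Track:
    """Adapter for an invented radar message: altitude in feet, quality 0 to 10."""
    return Track(
        source="radar",
        track_id=f"radar-{msg['id']}",
        time_s=msg["epoch"],
        lat_deg=msg["position"][0],
        lon_deg=msg["position"][1],
        alt_m=msg["alt_ft"] * 0.3048,   # feet to meters
        confidence=msg["quality"] / 10.0,
    )
```

The design point is that unit conversion and ID namespacing happen once, at the edge, so every downstream consumer (fusion, symbology, alerting) sees the same fields regardless of which vendor produced the data.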

Until this gap is addressed, SLTT agencies remain trapped in a reactive posture: competent at talking compliant operators down, poorly positioned to defeat autonomous or adversarial systems, and dangerously dependent on the operator’s ability to mentally fuse chaos under stress. Consider the emergence of fiber-optic-controlled small drones. These platforms are controlled through a cable resembling fishing line, so they emit no RF control link and are essentially invisible to detection if radar is not present in the stack. Even more urgent are recent reports of these drones being used in urban environments, with stronger and better-protected fiber that allows maneuvering in and around structures. C-UAS is no longer a sensor problem. It is a command-and-control problem. Without a true single pane of glass, we are asking operators to fight a three-dimensional, high-speed problem with two-dimensional, disconnected tools.

Constraints: Cyber, Policy, and AI

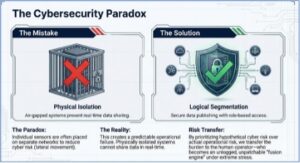

The next issue is cybersecurity and the operational paradox it creates. In many current C-UAS deployments, individual sensors are intentionally placed on separate communications networks. This is often justified as a cybersecurity measure, based on the belief that isolating systems reduces the risk of compromise or lateral movement by an adversary. While this concern is not unfounded at the network level, its operational consequences are frequently misunderstood. Cybersecurity-driven segmentation is valid when applied as logical separation within a well-designed architecture. It becomes problematic when it results in physically isolated systems that cannot share data in real time. Situational awareness fragments, latency increases, and operators are forced to manually correlate information across multiple interfaces and networks. A measure intended to increase security instead introduces a predictable operational failure. In practice, the widespread use of separate communication links for detection and mitigation systems is often driven as much by vendor risk avoidance, acquisition silos, and policy ambiguity as by true cyber strategy. Without an architectural authority defining how systems should securely interoperate, vendors default to isolation because it is simpler to certify and easier to defend contractually. That default, however, pushes the complexity onto the C-UAS team.


The result is a paradox: hypothetical cyber risk is prioritized over operational risk. Fragmented communications prevent the formation of a true common operating picture, degrade sensor cueing and track correlation, and slow decision-making precisely when speed and clarity are most critical. Rather than increasing security, this approach transfers risk to the human operator, who becomes the ad hoc fusion engine—unlogged, un-patchable, and under extreme stress. A cyber-resilient C-UAS architecture does not require isolated situational awareness. It requires logical segmentation, one-way data publishing, role-based access controls, and visibility into network health—all while maintaining a unified operational picture. When designed correctly, cybersecurity and operational effectiveness are mutually reinforcing rather than competing priorities.
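The combination of one-way publishing and role-based access can be sketched in a few lines. This is a toy, in-memory illustration only: the `CopBus` class, the role names, and the topic names are all hypothetical, and in a real deployment one-way flow would be enforced at the network layer rather than in application code. The sketch shows only the shape of the idea, in which sensors hold a handle that can write but not read, while each console role reads only the topics it is cleared for, all feeding one shared picture.

```python
class CopBus:
    """Toy in-memory bus: sensors can only publish, consoles can only read."""

    # Hypothetical mapping of role -> topics that role may read.
    READ_ACL = {
        "operator": {"tracks", "network_health"},
        "commander": {"tracks", "network_health", "mitigation_status"},
        "public_affairs": set(),
    }

    def __init__(self):
        self._topics = {}  # topic name -> list of published messages

    def publisher(self, sensor_id, topic):
        """Return a write-only callable bound to one sensor and one topic."""
        def publish(payload):
            # Tag each message with its origin so the picture stays auditable.
            self._topics.setdefault(topic, []).append({"from": sensor_id, **payload})
        return publish

    def read(self, role, topic):
        """Return a copy of a topic's messages if the role is allowed to see it."""
        if topic not in self.READ_ACL.get(role, set()):
            raise PermissionError(f"role {role!r} may not read {topic!r}")
        return list(self._topics.get(topic, []))
```

A sensor integration would receive only the result of `bus.publisher("radar-1", "tracks")`, while an operator console would call `bus.read("operator", "tracks")`; segmentation is preserved logically without splitting the operational picture.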

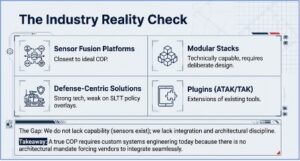

So, what is the key operational reality check? No single product today universally delivers every element of the ideal COP straight out of the box. Today’s C-UAS solutions generally fall into several categories. Some focus on sensor fusion and unified display platforms that come closest to delivering a true common operating picture. Others offer modular sensor and effector stacks that are technically capable of integration but require deliberate architectural design to function as a coherent system. A third group consists of defense- or platform-centric integrated solutions, often optimized for military operations and environments rather than the legal, operational, and resource constraints faced by SLTT agencies.

Finally, there are solutions designed as plugins into broader command-and-control ecosystems, such as ATAK or TAK, that extend existing situational awareness tools rather than replacing them. The reality of the industry, however, is more nuanced. While some vendors claim to provide unified situational awareness, many in practice deliver integration layers or APIs intended to feed an external common operating picture. A fully seamless, low-latency, standardized COP, one that integrates detection, tracking, mitigation control, and policy overlays into a single operational view, still largely requires custom systems engineering and integration work for each deployment. The industry landscape reflects this tension. Defense-centric solutions often excel in military environments but lack the policy-aware overlays required for SLTT operations. Even so, current offerings remain far short of operator-level visualization that provides ease of use, a stable environment, and a clear picture of what decision should be made. In short, we do not lack capability; we lack integration, architectural discipline, and operational mandates. This has never been more important. SAFER SKIES has begun delegating mitigation authority to SLTT agencies, and these tools will become part of the “kit” needed to secure our most vulnerable facilities, venues, and sensitive sites. Integration between detection and defeat systems is not a want; it is a need.

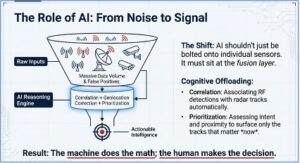

Now, let’s discuss the maturity of artificial intelligence and considerations for this concept. Artificial intelligence is often presented as the solution to C-UAS complexity, but in practice, AI has largely been bolted onto the very fragmentation it is supposed to fix. Many current systems apply machine learning narrowly—improving RF classification, object recognition in EO/IR feeds, or anomaly detection in radar returns—without addressing the broader command-and-control problem. The result is better individual sensors, not better operations. An AI model that improves detection accuracy by 10% is of limited value if its output still appears on a separate screen, with different symbology, latency, and confidence logic than every other system in the stack. In this context, AI risks accelerating data generation while worsening cognitive overload. Where AI does matter—critically—is at the fusion, prioritization, and decision-support layers. A true single pane of glass is not just a visualization challenge; it is a reasoning challenge. AI is uniquely suited to perform the correlation work that operators are currently forced to do mentally: associating RF detections with radar tracks, reconciling sensor confidence levels, resolving geolocation error margins, and maintaining persistent track identity across sensor handoffs. Properly applied, AI becomes the fusion engine—reducing dozens of raw inputs into a smaller number of high-confidence, explainable operational objects that an operator can act on.
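The correlation work described above, associating RF detections with radar tracks, can be illustrated with a deliberately simple sketch. This is not the method of any particular product: it uses greedy nearest-neighbor matching with a time gate and a distance gate, and the gate values (`max_dist_m`, `max_dt_s`) and dictionary keys are assumptions chosen for illustration. Operational fusion engines generally use more sophisticated statistical association; the point here is only to show the kind of bookkeeping currently left to the operator.

```python
import math

EARTH_RADIUS_M = 6_371_000.0


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two lat/lon points in degrees."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def correlate(rf_hits, radar_tracks, max_dist_m=150.0, max_dt_s=2.0):
    """Greedily pair each RF hit with the nearest unclaimed radar track in both gates.

    Each item is a dict with keys "id", "t" (seconds), "lat", "lon" (degrees).
    Returns (pairs, unmatched_rf_ids); each pair is (rf_id, radar_id, distance_m).
    """
    pairs, unmatched, claimed = [], [], set()
    for hit in rf_hits:
        best = None  # (radar_id, distance_m) of the closest candidate so far
        for trk in radar_tracks:
            # Skip tracks already paired or outside the time gate.
            if trk["id"] in claimed or abs(trk["t"] - hit["t"]) > max_dt_s:
                continue
            d = haversine_m(hit["lat"], hit["lon"], trk["lat"], trk["lon"])
            if d <= max_dist_m and (best is None or d < best[1]):
                best = (trk["id"], d)
        if best is None:
            unmatched.append(hit["id"])  # RF-only contact: no radar confirmation
        else:
            claimed.add(best[0])
            pairs.append((hit["id"], best[0], best[1]))
    return pairs, unmatched
```

Unmatched RF hits are returned rather than discarded, since a contact seen by only one sensor is itself information the operator may need.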

Equally important, AI enables temporal and spatial compression of decision-making. In a fast-moving, three-dimensional environment, the question is not simply what is in the air, but what matters now. AI-driven prioritization can assess intent indicators, flight behavior, proximity to protected assets, historical patterns, and policy constraints simultaneously—surfacing the few tracks that require immediate attention while suppressing noise. This is not autonomous engagement; it is cognitive offloading. The human remains the decision authority, but the machine continuously curates the problem set so that attention is focused where seconds truly matter. AI also plays a decisive role in policy-aware decision support, particularly for SLTT operations operating under constrained legal authorities. An AI-enabled COP can continuously evaluate detected activity against geofencing rules, temporary flight restrictions, event-specific authorities, mitigation permissions, and collateral risk models—presenting operators with not only what can be done, but what is legally and operationally appropriate in that moment. Without this layer, operators are forced to recall policy from memory under stress, introducing hesitation precisely when speed and confidence are required. With it, AI becomes an enabler of disciplined action rather than an opaque black box. However, none of this is achievable without architectural discipline. AI cannot compensate for disconnected networks, proprietary data models, or isolated sensor stacks. In fact, AI systems perform best when data is standardized, time-synchronized, and shared through a unified command-and-control layer.
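A minimal sketch of prioritization and policy-aware decision support follows. Everything in it is hypothetical: the `FusedTrack` fields, the scoring weights, the role names, and the `POLICY` table are invented for illustration and do not represent any legal authority or fielded scoring model. The sketch simply shows a rule-based stand-in for the idea of surfacing the few tracks that matter and presenting only the actions a given role is permitted to take.

```python
from dataclasses import dataclass


@dataclass
class FusedTrack:
    track_id: str
    dist_to_asset_m: float     # distance to the nearest protected asset
    closing_speed_mps: float   # positive when moving toward the asset
    inside_tfr: bool           # inside a temporary flight restriction
    broadcast_id_seen: bool    # drone is broadcasting an identification signal


def priority(t: FusedTrack) -> float:
    """Illustrative score from 0 to 1; higher means look at this track first."""
    proximity = max(0.0, 1.0 - t.dist_to_asset_m / 2000.0)       # zero beyond 2 km
    closing = min(max(t.closing_speed_mps, 0.0), 20.0) / 20.0    # saturates at 20 m/s
    score = 0.5 * proximity + 0.3 * closing
    score += 0.1 if t.inside_tfr else 0.0
    score += 0.1 if not t.broadcast_id_seen else 0.0
    return round(score, 3)


# Hypothetical authority table keyed by (role, track is inside a TFR).
POLICY = {
    ("observer", False): {"monitor"},
    ("observer", True): {"monitor", "notify_supervisor"},
    ("mitigation_officer", False): {"monitor", "contact_pilot"},
    ("mitigation_officer", True): {"monitor", "contact_pilot", "request_mitigation"},
}


def permitted_actions(role: str, t: FusedTrack) -> set:
    """Return the actions this role may take; unknown roles get monitor only."""
    return set(POLICY.get((role, t.inside_tfr), {"monitor"}))


def triage(tracks, top_n=3):
    """Surface the top_n highest-priority tracks, highest first."""
    return sorted(tracks, key=priority, reverse=True)[:top_n]
```

The human remains the decision authority in this sketch: the code ranks and filters, but it never selects or executes an action.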

Without a single pane of glass, AI becomes just another pane—another alert stream competing for attention. With it, AI becomes the force multiplier that finally allows C-UAS operations to scale, adapt, and remain resilient against autonomous and adversarial threats. In short, AI is not the answer to C-UAS fragmentation—but it is indispensable to solving it. The future of effective C-UAS operations lies not in autonomous defeat, but in AI-enabled command and control: machines doing what they do best—correlating, prioritizing, and reasoning at machine speed—so humans can do what only they can do: make accountable, lawful, and confident decisions under pressure.

Next are the operational and policy implications of this challenge. Speed of decision-making suffers first: manual correlation across multiple interfaces slows response times, and in high-tempo operations every second counts. Resilience under stress suffers as well: when systems are disconnected and operators must act as the fusion engine, errors multiply and the likelihood of mission failure increases. Training and sustainment burdens multiply when each interface requires separate proficiency. Without standardization, operational knowledge becomes fragmented and difficult to maintain, and vendors cannot be everywhere at once to provide system expertise or repairs when needed. Scalability is the final casualty: a city cannot deploy bespoke systems for each event. Without a single COP, C-UAS remains a collection of unscalable efforts.

Path Forward: Scalable, Secure, Integrated C-UAS

The path forward is straightforward. What is needed is a single, integrated operational environment—one that fuses detection, identification, tracking, and mitigation into a shared common operating picture. That environment must provide policy-aware decision support aligned with SLTT legal authorities, enabling operators and commanders to understand not just what is happening in the air, but also which actions are permissible in the moment. It must also employ logical network segmentation and role-based access controls that satisfy cybersecurity requirements without degrading operational effectiveness. Critically, the architecture must scale across multiple sites and jurisdictions, supporting coordinated operations rather than isolated systems. Finally, it must standardize data models, symbology, and alert logic across all sensors and effectors, ensuring that information is consistent, actionable, and immediately understandable at every level of command. Until such an environment exists, agencies will remain reactive—good at talking compliant operators down but poorly positioned to defeat autonomous or adversarial systems. Operators should not be expected to mentally fuse chaos under stress; technology must do the heavy lifting.

Lastly, and perhaps most important, C-UAS operators must act within a compressed time frame and across an expansive spatial environment. Small drones move quickly, unpredictably, and in three-dimensional airspace that often overlaps with crowded urban areas, critical infrastructure, and multiple simultaneous events.

Operators are required to detect, identify, track, and mitigate threats across this dynamic space in seconds, while simultaneously accounting for airspace restrictions, operator compliance, and collateral risk. When multiple sensor systems are disconnected, the mental effort needed to fuse spatially distributed information in real time becomes overwhelming. Anyone who has attempted this in a live environment will attest to the complexity. Decisions that demand split-second accuracy across both time and space are slowed, degraded, or occasionally made impossible, creating operational gaps that adversaries can exploit. Those in the decision seat also want technology-backed confidence that they are making the best possible decision when detection tools, and ultimately defeat tools, are used to protect people and events from nefarious drone activity. In essence, the time-compressed, multi-dimensional operational environment transforms fragmented tools into a critical vulnerability, not just an inconvenience, and every second spent mentally fusing chaos is a second the public is exposed.

Conclusion

In conclusion, the challenges facing C-UAS operations are not rooted in a lack of sensors, authorities, or emerging technologies. They stem from a failure to treat C-UAS as a true command-and-control problem. Over time, we have layered capability on top of capability—radar, RF, EO/IR, analytics, and mitigation tools—without requiring them to function as a coherent operational system. The result is not superiority, but complexity. Operators are surrounded by data yet deprived of clarity, empowered by tools yet constrained by fragmentation. This fragmentation carries real consequences. It slows decision-making in time-compressed environments, transfers integration risk to the human operator, and limits scalability across jurisdictions and events. It also creates a false sense of progress, where incremental sensor improvements mask structural weaknesses in how information is fused, visualized, and acted upon. In an era of autonomous, evasive, and increasingly sophisticated threats, this approach is not merely inefficient—it is unsafe.

The path forward does not require waiting for a perfect product or a revolutionary sensor. It requires architectural discipline and operational intent. A single pane of glass, implemented as a true common operating picture, must become the baseline expectation, not an aspirational feature. Detection, identification, tracking, mitigation, airspace context, policy constraints, and decision support must live in the same operational environment, governed by standardized data models, consistent symbology, and secure but connected networks. AI should not add noise to this picture but reduce it, performing fusion, prioritization, and reasoning at machine speed so humans can decide with confidence. Most importantly, this shift is about respecting the human at the center of the system. Operators should not be expected to mentally fuse chaos under stress, reconcile conflicting data streams, or shoulder institutional risk through improvisation. Technology exists to shoulder that burden, if we demand that it be integrated, disciplined, and operationally coherent. C-UAS has reached its inflection point. As mitigation authorities expand, threats evolve, and public exposure increases, the margin for fragmented operations disappears. We are not late to this problem, but we are no longer early enough to afford half measures. Delay is no longer an option when proven data fusion solutions exist. Today, information is scattered across systems, and decision-making fractures at the worst possible moment. We must integrate, standardize, and operationalize C-UAS in a single pane of glass, because the next adversary will not respond to phone calls. The next adversary will not overwhelm us with better drones; they will exploit our inability to see, decide, and act as one.
