A Primer on Artificial Intelligence for Military Leaders

Stoney Trent and Scott Lathrop

SWJ Editor’s Note - This work is the result of the authors’ numerous engagements with senior leaders explaining what Artificial Intelligence (AI) is, what it is not, and why there is such hype currently surrounding it for military applications. The lead author is currently leading the effort for the DoD CIO in standing up DoD’s Joint AI Center.

Artificial Intelligence (AI) is increasingly popular, but not deeply understood by military leaders. It is being used in many industries to improve processes, services and products. Senior military leaders are beginning to recognize the importance and potential value of leveraging AI, but are not sure how to proceed. The Congressional Research Service recently released a report that summarizes DoD efforts and adversarial Nation goals for AI.1 The authors emphasized the importance of military leaders evaluating AI developments and exercising appropriate oversight of emerging approaches. Unfortunately, AI evangelists have a great deal to gain by overselling or misleading government decision makers. Although a centralized push for AI may result in a big win, poorly conceptualized AI applications could easily result in epic fails.2 The reality of advanced technologies often fails to match early promises, but innovators recognize unforeseen applications which result in paradigm changing breakthroughs.3 Accordingly, military leaders must understand what AI is, what it is not, and how best to implement AI systems.

What is AI?

AI is a concept for improving the performance of automated systems for complex tasks. Today these tasks include perception (sound and image processing), reasoning (problem solving), knowledge representation (modelling), planning, communication (language processing) and autonomous systems (robotics). Within academic settings, AI is most commonly considered a subfield within Computer Science, where the research focuses on solving computationally hard problems through search, heuristics, and probability. In practical applications, however, AI also draws heavily from mathematics, philosophy, linguistics, psychology, and neuroscience. Although some AI systems rely on large amounts of data, AI does not necessarily entail the volume, velocity and variety usually associated with “Big Data Analytics”. Likewise, Big Data Analytics do not necessarily incorporate AI approaches. It is also important to remember that what is considered AI today may not be considered “intelligent” tomorrow. In the 1980s, a grammar checker seemed intelligent; however, such algorithms are taken for granted in today’s word processing software. Similarly, web search and voice recognition are integrated into many commonly used technologies.

AI systems often model the human perceptual, cognitive, and motor processes that are represented in the Observe-Orient-Decide-Act model of decision making. For example, Amazon’s Alexa™ perceives a verbal question through a machine learning (ML) model that decodes a verbal message to an internally encoded knowledge representation; decides how to answer that question through knowledge search; and then encodes and delivers an audio response. Although impressive, Alexa does not always answer questions correctly, and it is easy to mislead or confuse her. A human must assess Alexa’s answer and determine whether to accept it as consistent with the individual’s world view.

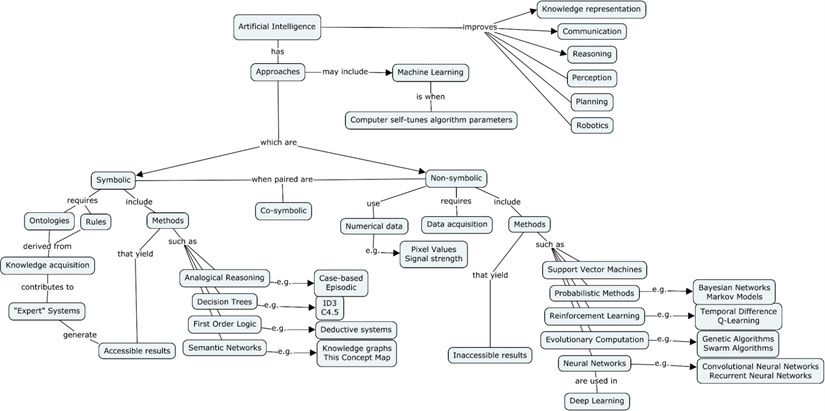

AI approaches can be categorized into one of two classes, symbolic and non-symbolic, as illustrated in Figure 1. As the name implies, symbolic approaches use symbols (e.g. words) to represent concepts, objects, and relationships between objects. Symbolic approaches generally rely on ontologies4 and a set of rules, or productions, to process information. Symbolic AI system developers must create ontologies and rules based on the cognitive work in the problem domain. This effort requires considerable knowledge acquisition to understand the problem domain. However, it can yield results that are readily explainable to humans because they are derived from human knowledge models and encoded using symbols that humans understand.

Figure 1 – AI Concept Map
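The symbolic approach described above can be illustrated with a minimal sketch. It assumes a hypothetical three-fact ontology and a single hand-written rule (if A is a B, and B is a C, then A is a C); the vehicle names and the `forward_chain` function are illustrative and are not drawn from any fielded system. The sketch also records which facts produced each conclusion, which is one reason symbolic results tend to be explainable to humans.

```python
# Hypothetical mini-ontology: each fact is a (subject, relation, object) triple.
facts = {
    ("T-72", "is_a", "tank"),
    ("tank", "is_a", "armored_vehicle"),
    ("armored_vehicle", "is_a", "vehicle"),
}

def forward_chain(facts):
    """Apply one rule until nothing new is learned:
    if A is_a B and B is_a C, then A is_a C."""
    known = set(facts)
    explanations = {}  # derived fact -> the two facts that produced it
    changed = True
    while changed:
        changed = False
        for (a, rel1, b) in list(known):
            for (b2, rel2, c) in list(known):
                if rel1 == "is_a" and rel2 == "is_a" and b == b2:
                    new_fact = (a, "is_a", c)
                    if new_fact not in known:
                        known.add(new_fact)
                        explanations[new_fact] = ((a, rel1, b), (b2, rel2, c))
                        changed = True
    return known, explanations

known, explanations = forward_chain(facts)
target = ("T-72", "is_a", "vehicle")
print(target in known)        # the system concluded a T-72 is a vehicle
print(explanations[target])   # the premises behind that conclusion
```

The cost of this transparency is the knowledge acquisition noted above: a person had to write every fact and every rule.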

Non-symbolic AI approaches are computationally intensive (i.e. linear algebra and statistical methods) where the input and knowledge representation is numerical (e.g. image pixels, audio frequencies), and only the output is (sometimes) encoded as a symbol (e.g. “it is 90% likely that this image contains a cat”). Whereas symbolic systems require considerable knowledge acquisition, non-symbolic ML systems generally require significant data acquisition and data curating for the system to make accurate classifications. Both symbolic and non-symbolic approaches have been around since the beginning of AI (~1950s) with symbolic systems (e.g. expert systems) being the preferred approach until recently (~2010) when deep learning systems demonstrated significant advances. The key point is that AI systems may employ a number of approaches, to include hybrid symbolic/non-symbolic approaches (or co-symbolic), each with their own tradeoffs.

As David Kelnar relates, most recent advances in AI have occurred with non-symbolic ML.5 ML provides computers with the ability to classify information without being explicitly programmed with knowledge of the problem domain. Non-symbolic ML reduces the problem associated with programming the large amount of tacit knowledge that humans have. The recent non-symbolic ML successes with image classification, audio recognition, and defeating the world’s “Go” champion6, can be attributed to: (1) greater availability of data created by the Internet, and (2) increased computational power from better hardware and scalable, distributed cloud storage and computing services.

ML approaches differ from traditional algorithmic processing in that they allow computers to tune their algorithmic parameters (e.g. variable weights) to improve outcomes. Algorithmic tuning, or learning, may occur offline or online. Offline learning involves training the system outside of its operating context, before it is deployed to the production system (e.g. training an autonomous car in a simulated environment, or a surveillance system with many images). Alternatively, online learning is when the AI system uses continuous data from its operating environment to refine the algorithm’s parameters. Online learning often entails a mix of symbolic and non-symbolic approaches. Such co-symbolic approaches are continuing to be explored as the symbolic system can provide context to the non-symbolic system.

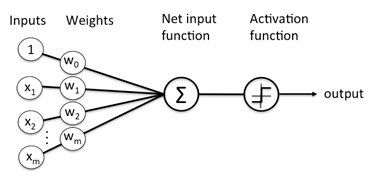

The most successful non-symbolic, supervised ML technique today is Deep Learning, which uses a many-layered neural network (NN). A NN is an algorithm that can categorize or cluster data. NNs can be used to search for features within data sets in order to infer labels, patterns, or predictions. As depicted in Figure 2, a NN accepts data and prioritizes it with weighting coefficients. The sum of the weighted inputs is compared to a correct answer to determine whether to accept or discard the solution. The AI system adjusts the weight of the coefficients until the correct answer results. Training of these algorithms is supervised in the sense that each training example has a labeled output (e.g. that is Jack’s face/voice/handwriting) that the NN is trying to match. Thus, the NN “learns” from these examples. Deeper NNs (i.e. more layers) require more training data, and more data necessitates more processing power, storage and bandwidth. Once the system is trained, the NN can be deployed in a production environment. As long as the data in that environment is consistent with the data used for training, the NN will perform well. Other non-symbolic approaches, such as probabilistic methods, have been successfully applied to problems in which the knowledge engineering required for symbolic AI is not feasible. However, with both NN and probabilistic methods, issues of trust arise as the human operators may not understand how the system is obtaining its results.

Figure 2 – A diagram of a single-layer neural network. Inspired by brain neurons, most neural networks are a series of linear algebra or partial derivative equations. Deep Learning systems use at least three layers.7
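The weight adjustment described for the single-layer network can be sketched in a few lines. This is a toy illustration, not a Deep Learning system: it assumes a single artificial neuron (a perceptron), four hand-labeled examples of the logical AND function as training data, and an arbitrary learning rate of 0.5. The names `predict` and `train` are illustrative.

```python
def predict(weights, bias, inputs):
    """Sum the weighted inputs and output 1 if the total is positive, else 0."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if total > 0 else 0

def train(examples, epochs=20, rate=0.5):
    """Supervised training: nudge the weights whenever the output
    disagrees with the labeled answer."""
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for inputs, label in examples:
            error = label - predict(weights, bias, inputs)  # 0 when correct
            weights = [w + rate * error * x for w, x in zip(weights, inputs)]
            bias += rate * error
    return weights, bias

# Labeled training examples (assumed toy data): output is 1 only if both inputs are 1.
examples = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

weights, bias = train(examples)
print([predict(weights, bias, inputs) for inputs, _ in examples])
```

Nothing in the code states the rule "both inputs must be 1". The behavior emerges from the labeled examples, which is why the quality and coverage of training data matter so much.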

Brynjolfsson and McAfee have summarized the strengths and weaknesses of AI for business leaders.8 As they note, most recent AI success stories in business have been ML applications for perception and problem solving. Speech and image recognition have improved dramatically with the use of large neural networks. Supervised learning systems, or computers that are given many examples of a correct answer, have outperformed humans at certain games and process optimization. Machine Learning has driven change in business tasks (automated recognition), business processes (workflow optimization), and business models (changes to the type and manner of service delivery). In these areas, success depends on having a great deal of data about previous behaviors or successful outcomes. Unfortunately, current AI-enabled tools are generally brittle and successful only in narrowly-defined problem spaces.

AI Shortcomings

Success in narrow problem solving does not equate to broad understanding, nor does it ensure success in complex, dynamic situations. While computers have improved for supervised learning situations, humans are unmatched for unsupervised learning, and will remain so for many years. Today, computers are very good at answering specific questions for which they have been trained but are poor at posing them. Deductive reasoning (inferring a definitive conclusion) has always predominated in computing. Inductive reasoning (inferring a likely conclusion) and abductive reasoning (inferring preconditions for a set of observations) have improved dramatically with AI approaches. Unfortunately, Pablo Picasso’s 1964 observation of computers (“They only give you answers.”) remains accurate today.

AI has a variety of other shortcomings that should temper and guide its employment. Foremost is interpretability. Esoteric mathematics and unwieldy data sets underpin the most successful applications. Consequently, diagnosing errors is extraordinarily difficult. Complicating the interpretability of non-symbolic ML approaches is the unspecified nature of training data sets. These sets may include underlying biases (e.g. sampling errors or intentional manipulation) that become internalized by the AI system.9 Specially-skilled AI scientists are necessary to select and tailor AI approaches, but may lack the operational perspective necessary for effective implementation. AI does not cope well with novel situations, and when AI systems present unexplainable results (even when they are optimal), trust in the automation will erode.10

Cybersecurity vulnerabilities related to the complexity of AI systems are also becoming evident. David Atkinson describes a number of vulnerability classes, a few of which are summarized here.11 Due to the resource intensive nature of AI, malware that misallocates computational resources can constrain AI systems such that they provide malformed solutions. In other words, the malware could distract the AI system enough for it to generate an incorrect answer. In multi-agent systems, such as UAV swarms, alteration of one UAV could trigger cascading changes across the entire swarm. Mathematicians refer to this as the Butterfly Effect, where small changes in a complex system can have dramatic effects over time.12 Alternatively, because most AI systems are designed to optimize multiple goals, cyber attackers could induce goal conflicts that result in undesirable behaviors. A military example of this would be a logistics management system, which optimizes three goals – maximum operational rate, minimum parts stockage, and minimum risk to resupply missions. Increasing the weighting of either of the last two goals could result in decisions to delay or divert critical parts.
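The logistics example can be made concrete with a small sketch. It assumes a system that scores each option as a weighted sum of the three goals; the option names, goal scores, and weights below are invented for illustration and do not describe any real system. Shifting weight toward mission risk, as an attacker might, flips the recommendation from shipping a critical part to delaying it.

```python
def score(option, weights):
    """Weighted sum of goal scores (each score is 0..1, higher is better)."""
    return sum(weights[goal] * option[goal] for goal in weights)

def best(options, weights):
    """Return the name of the highest-scoring option."""
    return max(options, key=lambda name: score(options[name], weights))

# Hypothetical options for moving a critical part, scored against the three goals.
options = {
    "ship_now": {"operational_rate": 0.9, "low_stockage": 0.3, "low_mission_risk": 0.4},
    "delay":    {"operational_rate": 0.4, "low_stockage": 0.8, "low_mission_risk": 0.9},
}

# Intended weighting favors operational rate; the tampered weighting favors low risk.
baseline = {"operational_rate": 0.6, "low_stockage": 0.2, "low_mission_risk": 0.2}
tampered = {"operational_rate": 0.2, "low_stockage": 0.2, "low_mission_risk": 0.6}

print(best(options, baseline))  # ship_now
print(best(options, tampered))  # delay
```

No code or data about the part itself was altered in this sketch, only the relative priority of the goals, which is what makes this class of attack hard to notice.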

Implementing AI in the Military

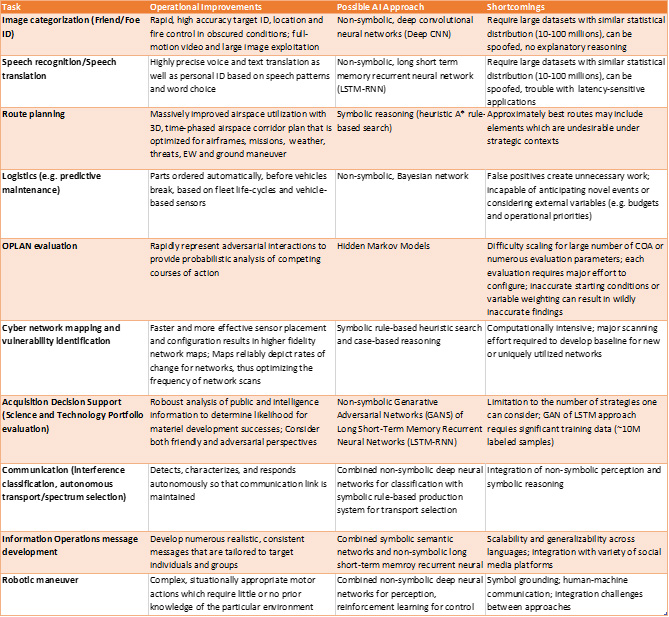

Although the current shortcomings present challenges to military adoption of AI, there are many military applications that can take immediate advantage of current approaches. Intelligence support tools are already benefiting from deductive inference algorithms for massive data sets. For example, Project Maven has illustrated the benefits of AI-enabled image recognition for aerial surveillance.13 These approaches could be implemented in fire control systems to reduce fratricide and improve target identification. Route and logistics planning tools have been part of military systems since the early 90s. Such tools could be improved with Bayesian methods, which would detect deviations and optimize routes and processes under variable conditions. Planning support tools could improve war plan evaluations with probabilistic comparisons of complex courses of action. Sensemaking in cyberspace operations, particularly network mapping and vulnerability identification and patching, could benefit from automated rule-based, reasoning approaches such as those recently demonstrated in DARPA’s Cyber Grand Challenge. AI systems could improve major acquisition decisions by monitoring and synthesizing information about world-wide science and technology investments and breakthroughs. Marketing successes in commercial sectors demonstrate the potential of AI approaches for Information Operations. Communication systems can be improved with deep neural networks for classifying interference and optimizing spectrum usage. Finally, robotics and autonomous vehicles will rely on AI for route planning, perception, and tactical maneuver. Table 1 describes how a variety of AI approaches could be used to improve tasks in the military. It also highlights some important shortcomings for each possible AI approach.

Implementation of any of these applications entails much more than purchasing commercially available software. Each AI system is unique and requires significant research and development. The design of the AI system will depend on its ultimate operating environment (physical/virtual, controlled/uncontrolled) and the nature of the data to be analyzed (discrete/continuous, structured/unstructured). A first order problem for non-symbolic ML is obtaining useful data.

A data strategy that addresses gathering, curation, storage and protection of data sources is critical. Locating and establishing access to useful data sets can involve many decision makers who have little incentive to cooperate with an AI program. Policies, regulations and laws limit who can access certain types of data and how it must be stored. Consequently, most AI applications will involve data stewardship for both training and operational data. Documenting the nature of training data and comparing it to production system data will be important for determining the fit of the AI system.

AI applications are significant information systems that require development, testing, maintenance, and specially-skilled users. Network engineers and architects, security engineers, and data/computer scientists must work together to design these systems. System Security Plans document the hardware, software, connectivity and controls which will protect the system, and are the basis for an Authorizing Official to approve the system to operate. Cloud computing solutions and cybersecurity services require contracts and memorandums of agreement. Although most data for AI systems are unclassified, the analysis can often become classified. Therefore cross-domain solutions are often needed to accommodate moving data between classified and unclassified infrastructures. Unfortunately, current test and evaluation methods are time and labor intensive, and inadequate for software that learns and adapts.14 Because AI systems evolve, sustained test and evaluation as a part of regular operations will be necessary to ensure that the system remains within desired performance parameters.

Table 1 - Possible AI Approaches for Military Tasks or Applications

Finally, AI systems are not just collections of hardware and software, but rather supervisory control systems. Such systems must be observable, explainable, directable, predictable, and direct the attention of their human supervisors when warranted by the situation.15 Even the relatively mature technologies in spelling checkers, Internet search engines and voice recognition applications make mistakes that require human awareness and intervention. So-called fully autonomous systems will still have some person who is responsible for ensuring the system is behaving properly, and who ultimately serves as the source of resilience in the face of surprise.

For these reasons, it is important to realize that humans will work in conjunction with these AI machines. A strength of such a human-machine teaming approach is that humans can provide the AI its purpose, goals, and some context to focus the machine’s reasoning. However, for teaming to succeed, trust must prevail. One important concept for trust in automation is a reliable, known performance envelope. Users must understand the conditions for which their system has been designed and have sufficient feedback mechanisms to determine where the system is in the performance envelope and when it is about to violate it. Toward this end, DARPA is currently working on explainable AI approaches to improve the interpretability of machine learning.16 However, all of this suggests the need for interdisciplinary translators within an AI program who can interpret operational needs and technical possibilities.
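One simple feedback mechanism of this kind can be sketched as follows. It assumes, as a deliberately crude proxy, that the performance envelope is the range of values seen in the training data; a real envelope would involve far more than minimum and maximum values. The feature names and numbers are hypothetical.

```python
def build_envelope(training_rows):
    """Record the minimum and maximum seen for each feature during training."""
    features = training_rows[0].keys()
    return {
        f: (min(row[f] for row in training_rows), max(row[f] for row in training_rows))
        for f in features
    }

def outside_envelope(sample, envelope):
    """List the features whose values fall outside the training range."""
    return [f for f, (low, high) in envelope.items() if not low <= sample[f] <= high]

# Hypothetical training conditions for an aerial image classifier.
training_rows = [
    {"altitude_m": 500, "visibility_km": 8},
    {"altitude_m": 1200, "visibility_km": 10},
    {"altitude_m": 900, "visibility_km": 6},
]
envelope = build_envelope(training_rows)

print(outside_envelope({"altitude_m": 800, "visibility_km": 7}, envelope))  # []
print(outside_envelope({"altitude_m": 800, "visibility_km": 2}, envelope))  # ['visibility_km']
```

A non-empty result does not mean the system is wrong, only that it is operating in conditions it was not trained for and that its human supervisor should be alerted.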

Conclusion

In business settings, the bottlenecks for further success with AI are management, implementation, and imagination.17 Military settings add dangerous, uncertain environments, which are contested by persistent adversaries in search of an Achilles’ heel. AI applications offer the potential to expedite data interpretation, and free humans for higher level tasks.18 However, technologists and military leaders must consider the immutable nature of supervisory control systems. Despite regular concern in the popular media for AI replacing humans, there is no support for this replacement myth. Rather, new technologies change and create new roles for humans to accomplish their goals. As with other technologies, AI applications must support the inherently human endeavor of goal setting.

AI is creating new, and changing existing, paradigms in business and warfare. Because success or failure can be existential for the Nation, military leaders must be conversant enough with AI to invest in, and use, these technologies wisely. In July 2017, China announced plans to become the world leader for AI.19 In September 2017, Vladimir Putin told students that the AI leader “will become the ruler of the world”.20 Other countries, such as Australia, are already including advanced technology (including AI) literacy into their military education.21 We cannot afford to allow a knuckle-dragging aversion to math and science misdirect our own investments.

This paper reflects the views of the authors. It does not necessarily represent the official policy or position of the Department of Defense or any agency of the U.S. Government. Any appearance of DoD visual information or reference to its entities herein does not imply or constitute DoD endorsement of this authored work, means of delivery, publication, transmission or broadcast.

End Notes

1 Daniel Hoadley and Nathan Lucas, “Artificial Intelligence and National Security”, Congressional Research Service (26 April 2018)

2 Jason Matheny, Director, Intelligence Advanced Research Projects Activity, as cited in Daniel Hoadley and Nathan Lucas, “Artificial Intelligence and National Security”, Congressional Research Service (26 April 2018), p. 21.

3 Hargadon, Andrew. How Breakthroughs Happen: The Surprising Truth About How Companies Innovate (HBR Press, 2003).

4 An ontology is a model of how concepts within a subject area relate to each other.

5 David Kelnar, “The fourth industrial revolution: A primer on Artificial Intelligence (AI)”, Medium.com (2 December 2016), accessible at: https://medium.com/mmc-writes/the-fourth-industrial-revolution-a-primer-on-artificial-intelligence-ai-ff5e7fffcae1

6 David Silver, Aja Huang, Chris Maddison, et al. “Mastering the game of Go with deep neural networks and tree search”, Nature, 529(7587), 484–489, (2016).

7 See https://deeplearning4j.org/neuralnet-overview

8 Erik Brynjolfsson and Andrew McAfee, “The Business of Artificial Intelligence”, Harvard Business Review, (July 2017), accessed at: https://hbr.org/cover-story/2017/07/the-business-of-artificial-intelligence

9 Ibid.

10 Nadine Sarter, David Woods, and C. Billings, “Automation Surprises”, In Handbook of Human Factors and Ergonomics, Second Edition, G. Salvendy (Ed.), Wiley (1997).

11 David Atkinson, “Emerging Cyber-Security Issues of Autonomy and the Psychopathology of Intelligent Machines”, Foundations of Autonomy and Its (Cyber) Threats: From Individuals to Interdependence, 2015 AAAI Spring Symposium (2015).

12 Edward Lorenz, “Predictability: Does the flap of a butterfly’s wings in Brazil set off a tornado in Texas?”, American Association for the Advancement of Science, (29 December 1972).

13 Gregory Allen, “Project Maven brings AI to the fight against ISIS,” The Bulletin of the Atomic Scientists, (21 December 2017) accessed at: https://thebulletin.org/project-maven-brings-ai-fight-against-isis11374

14 Defense Science Board, “Summer Study on Autonomy”, Department of Defense, Washington, DC (June 2016), p. 29.

15 David Woods and Erik Hollnagel, “Joint Cognitive Systems: Patterns in Cognitive Systems Engineering,” Taylor and Francis (2006), pp 136-137.

16 See https://www.darpa.mil/program/explainable-artificial-intelligence

17 Erik Brynjolfsson and Andrew McAfee, “The Business of Artificial Intelligence”, Harvard Business Review, (July 2017), accessed at: https://hbr.org/cover-story/2017/07/the-business-of-artificial-intelligence

18 Michael Horowitz, “The promise and peril of military applications of artificial intelligence”, The Bulletin of the Atomic Scientists, (23 April 2018), accessed at: https://thebulletin.org/military-applications-artificial-intelligence/promise-and-peril-military-applications-artificial-intelligence

19 Paul Mozur, “Beijing Wants A.I. to Be Made in China by 2030”, New York Times (20 July 2017), accessed at: https://www.nytimes.com/2017/07/20/business/china-artificial-intelligence.html

20 Radina Gigova, “Who Vladimir Putin thinks will rule the world”, CNN, (2 September 2017), accessed at: https://www.cnn.com/2017/09/01/world/putin-artificial-intelligence-will-rule-world/index.html

21 Mick Ryan, Commander, Australian Defence College, E-mail correspondence (1 June 2018).
