Weapons of Automated Destruction and the Moral Duty to Protect Warfighters

Lyle D. Burgoon

In 2050, automated weapons systems (AWS; i.e., human-out-of-the-loop automated weapons systems), driven by artificial intelligence, will be the norm. The initial deployment of these systems, pre-2050, will be for anti-access/area denial (A2/AD), serving primarily as fixed-station or mobile patrol sentries in areas where civilians are not allowed (e.g., the Korean Demilitarized Zone). However, by 2050, these weapons systems may be ready to be deployed as mobile platforms accompanying units of warfighters. The ethical questions today are: 1) are AWS ethical weapons systems, and 2) under what circumstances is it ethically acceptable for fully automated weapons systems to be used in just wars (jus ad bellum) and in ways that protect humanitarian interests (jus in bello)?

The deployment of AWS poses significant ethical challenges, especially with respect to jus in bello. Chief among them is the question of whether we should possess weapons that can decide which humans to kill. Although removing warfighters from harm's way certainly saves the lives of just warfighters, it raises the jus in bello concern of how well an AI weapons system discriminates between combatants and non-combatants.

In this analysis I will describe the ethical obligation of commanders to utilize AWS once technologically feasible. I will then examine each of the ethical objections one might raise to the use of AWS and offer a response. Finally, I will propose a set of algorithmic and mathematical criteria, collectively called “policies”, for the ethical operation of AWS in combat situations.

The Ethical Obligation of Commanders to Use AWS Technology

Military commanders have a moral duty to protect their warfighters. Strawser (2010) termed this the Principle of Unnecessary Risk, which is the idea that commanders must protect their subordinates from engaging in actions that may expose the subordinates to lethality when a suitable alternative exists. This principle also exists explicitly in the US Department of Defense Law of War Manual (2015) and the UK Law of Armed Conflict Manual (2013).

This principle, encoded in these war law manuals, makes it clear that commanders have a responsibility to avoid the reckless deployment of their warfighters. The United States has historically invested significantly in research, development, test, and evaluation (RDT&E) to maintain an overmatch capability that ensures the asymmetric advantage of US forces. By employing these technologies, commanders have options to meet their moral duty to protect their warfighters. It would be reckless and irresponsible for commanders to unnecessarily expose their warfighters to lethality. Thus, commanders have an obligation to use AWS to replace warfighters in conducting risky operations or actions whenever feasible.

For instance, consider the act of breaching a door in an urban operation. Warfighters who have performed this action know that it is one of the riskiest parts of a breach and clear. Doors may be trapped. Belligerent actors on the other side of the door may be prepared for the breach. The warfighter breaching the door is relatively unprotected, standing in an open doorway after the door has been breached. By replacing the breaching warfighter with an AWS that could subdue hostile actors on the other side of the door, the commander would limit the risk of lethality to their subordinates.

Another area where AWS would limit potential risk for warfighters is in the enforcement of A2/AD. Perimeter use of AWS at a forward operating base (FOB) would allow warfighters to focus on other duties, while limiting their risk at the FOB perimeter.

Objections to the Use of AWS

Objection 1: AWS will make war more easily justified, leading to more wars.

This is the notion that AWS will make it easier for societies to justify jus ad bellum. This could arise from two different points: 1) AWS will change the threshold for what qualifies as jus ad bellum and 2) AWS will lower society’s threshold for accepting war as an option due to a perception of less warfighter risk.

Response: This challenge could be raised for all technologies that lead to asymmetry and overmatch. The criteria for jus ad bellum do not change when technology advances, when asymmetry becomes greater, or when there is greater overmatch. Society’s appetite for risk, especially warfighter lethality, is not a consideration for jus ad bellum.

It is instructive to consider a different type of asymmetry: stealth fighters and bombers. Stealth fighters and bombers have certainly led to decreased lethality for warfighters, specifically pilots and the ground forces that they directly or indirectly support. This has led to a significant asymmetry and overmatch capability for the US military in the 20th and early 21st centuries. The development and use of these technologies have not changed the factors that determine jus ad bellum. Nor have these capabilities led to a sudden increase in wars.

Now consider if during a war the US decided to not use stealth fighters and bombers. In this situation, the US would use non-stealth aircraft to destroy enemy aircraft and anti-aircraft batteries in order to “own the sky.” In this scenario, the US would either 1) likely experience a significant loss of aircraft and pilots or 2) not “own the sky.” In the latter scenario, there would be a significant loss of warfighter lives on the ground due to a lack of air support, making the situation potentially unwinnable. In the former scenario, if the US were able to degrade enemy air defenses and degrade the enemy air forces, it would be at significant loss of US lives and weapon system investments.

The US military's asymmetry, achieved through investment in RDT&E, and its ability to protect our warfighters are integral parts of our defense posture and our deterrence capability. Without taking advantage of, in this case, stealth technology, US involvement in future campaigns would be unsustainable. The use of stealth technology did not change the threshold for jus ad bellum.

Given the commander’s duty to protect warfighters, the fact that the asymmetry can yield warfighter protection, and the fact that asymmetry for warfighter protection does not actually change the criteria for jus ad bellum, it is not only permissible but a duty of the commander to use these technologies.

Similarly, as the use of AWS does not change the criteria for jus ad bellum, and the use of AWS would confer an asymmetric advantage that protects warfighters, the commander has a duty to use AWS technologies.

Objection 2: Humans have a moral intuitiveness that algorithms cannot achieve in determining who should die and who should not in times of war. Furthermore, the Law of Armed Conflict bans weapons that kill indiscriminately. How can we be certain that an AWS will not kill indiscriminately?

Response: When it comes to combat situations, assuming jus ad bellum, the Law of Armed Conflict provides significant guidance to warfighters and commanders about the use of lethal force. Among its provisions is that lawful combatants have, by the nature of being lawful combatants, the right to use proportional lethal force against enemy combatants, and are themselves liable to be killed by enemy combatants.

However, lawful combatants also have certain expectations of their behavior under the Law of Armed Conflict. Again, assuming jus ad bellum, lawful combatants are expected to minimize impacts of military operations on civilian populations.

Complicating things is the fact that operational conditions in a conflict are not always as straightforward as we would like. In irregular warfare, when warfighters are not clearly discernible from the civilian population, it can be difficult for just and authorized warfighters to determine who is a civilian and who is a combatant. This can make it difficult for a human combatant to know who is a legal target. When illegal combatants engage lawful combatants within civilian built environments, such as buildings and homes, they may also use civilians as hostages or human shields. Commanders on the ground will have to make determinations based on existing rules of engagement on how to handle these fluid situations. These situations are daunting for humans, and it is difficult to imagine how effective an AWS may be in them.

How do we ensure that AWS adhere to the Law of Armed Conflict? How do we balance the need to protect civilians and minimize harm to the civilian built environment against the need to engage illegal combatants?

The answer is actually rather straightforward: algorithms.

The combatant/civilian targeting tradespace boils down to the same tradespace we generally face when using artificial intelligence for classification problems, and it is the same tradespace we have in medical diagnostic tests: how do we balance sensitivity and specificity? Sensitivity is the true positive rate; it reflects the ability of the AI to classify enemy combatants as enemy combatants. Specificity is the true negative rate; it reflects the ability of the AI to classify civilians (non-combatants) as civilians. It is well known in classification problems that, for a given classifier, adjusting the decision threshold to increase either sensitivity or specificity typically comes at a loss of the other. So, if we maximize our sensitivity, then we will likely have a corresponding decrease in our specificity, and vice versa. This trade-off is commonly summarized with a receiver operating characteristic (ROC) curve. Depending upon our goals in the classification problem, we prioritize either the sensitivity or the specificity.
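The trade-off can be made concrete with a small sketch. The Python below is illustrative only: the scores and labels are made-up toy values rather than data from any real system, and `sensitivity_specificity` is a hypothetical helper name. It computes both rates at several decision thresholds, so that raising the threshold visibly trades sensitivity for specificity.

```python
def sensitivity_specificity(scores, labels, threshold):
    """Return (sensitivity, specificity) when scores >= threshold are flagged '+'.

    labels: True for enemy combatant (positive class), False for civilian.
    """
    pairs = list(zip(scores, labels))
    tp = sum(1 for s, y in pairs if y and s >= threshold)          # combatants flagged
    fn = sum(1 for s, y in pairs if y and s < threshold)           # combatants missed
    tn = sum(1 for s, y in pairs if not y and s < threshold)       # civilians cleared
    fp = sum(1 for s, y in pairs if not y and s >= threshold)      # civilians flagged
    return tp / (tp + fn), tn / (tn + fp)


# Hypothetical classifier scores (higher = more combatant-like); toy values only.
scores = [0.95, 0.80, 0.70, 0.40, 0.60, 0.30, 0.20, 0.10]
labels = [True, True, True, True, False, False, False, False]

for threshold in (0.25, 0.50, 0.75):
    sens, spec = sensitivity_specificity(scores, labels, threshold)
    print(f"threshold={threshold:.2f}  sensitivity={sens:.2f}  specificity={spec:.2f}")
```

In this toy example the strictest threshold flags no civilians at all, at the cost of missing half of the combatants.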

Let’s consider a medical diagnostic challenge – a first line cancer screening diagnostic. To be an effective first line cancer screen, we do not want to miss anyone who has cancer. And since this is a first line screen, the first one we would give someone, we are willing to include people who do not have cancer as positives in the screen, because they will get a second screen that is more specific (i.e., does a better job at saying which people don’t have cancer). By taking this approach, we can make sure everyone who has cancer is likely to be moved into treatment, while balancing that against a smaller number of people who don’t have cancer getting a secondary screen and avoiding unnecessary treatment. This illustrates the importance of the balance between sensitivity and specificity.

The Law of Armed Conflict is clear that, to satisfy jus in bello, we need to protect civilians and the civilian built environment under the rule of proportionality. It is important to note that the rule of proportionality does allow for some collateral damage, including civilian loss of life or property, if the military objective justifies it. Some civilian loss of life or property is unfortunate but inevitable.

Bringing this back to sensitivity and specificity, it is clear that for use of an AWS to satisfy jus in bello, it must have high specificity; that is, it must have a low false positive rate. In other words, the AWS must very rarely misclassify civilians as enemy combatants, even if this means accepting a higher false negative rate, in which the AWS misclassifies some enemy combatants as civilians.

It would be unethical for an AWS to be used if it had a low level of specificity, even if it had a high sensitivity. What this means is that it would be unethical for the AWS to be highly accurate at predicting enemy combatants at the expense of classifying civilians as enemy combatants. This situation would result in civilians being killed, due to their miscategorization as enemy combatants.

Objection 3: It is immoral for an AWS to take a human life.

The underlying argument here is that a human must always be in the loop of firing a weapon for the taking of a life to satisfy jus in bello.

Response: The key objection here appears to be that only a person should make the decision to take a life. That is to say, an AWS that is capable of lethal force is malum in se (evil in itself).

However, medical ethics has already addressed the issue of algorithms making the decision to take a life. Today, algorithms make life-or-death decisions about which patients receive life-saving care when performing triage in an emergency room or mass casualty context. Algorithms also decide which organ transplant patients receive an organ before others (Reichman 2014). In these cases, society has decided that computers should be allowed to make life-or-death decisions.

One may object and state that a human may at any time overrule the triage or transplant decision. However, part of the justification for using an algorithm, as opposed to a human decision-maker, in triage and transplant decisions is the perception that computers are more objective than humans, making the organ match process more fair (Reichman 2014).

A reasonable counterpoint to this argument is that in these medical ethics cases, the patient was terminally ill – meaning, they were going to die. So in effect, the algorithm only decides who is going to live, not who is going to die. However, given that the ethical issue is the rationing of life-saving medical care, denial of life-saving care by use of the algorithm is actually sentencing someone to death.

To be clear, the algorithm in these medical rationing cases is using criteria, developed by humans, encoded as an algorithm (which may be implemented by a person or in software). The algorithm decides who lives and who dies. Even in the case where a machine learning algorithm is used to train a model, humans are responsible for the validation of the model and ensuring the model performs appropriately in the real world.

This process of developing criteria for performance, validation of the models, and ensuring the AWS performs appropriately is no different from the validation and testing performed for other weapons systems. Thus, in most ways, implementation of an AWS is not effectively different from implementation of conventional weapons systems.

Consider the following scenario. There is a conflict environment where two sides will battle. The commander for one side decides to employ AWS. In so doing, this human commander has now made the determination that the conditions are appropriate for the use of an AWS. Although a human is out of the loop for executing the actual firing of any projectile (i.e., there is no human pulling a trigger), a human is still in the command loop making the decision to use AWS. This means that there is still a human responsible for the operation of an AWS – the concept of Command Responsibility under the Law of Armed Conflict still applies.

Objectively this has the same effect as a commander giving the order to fire a projectile from a cannon or issuing an order to fire a ballistic missile. A human commander has given an order, based on their determinations that it is justifiable to use lethal force in a form that is proportional to the military objective. Once the projectile or ballistic missile leaves, the commander does not have the ability to stop the projectile or missile from hitting its target. At the point that the weapon is deployed, control is lost.

In some ways this is similar to an AWS, with one large caveat: the commander can always stop an AWS after it has been deployed, before it makes a decision to act. This means that the AWS is less like a cannon, and more like a warfighter. There is still the ability to stop a warfighter prior to them firing their weapon. Likewise, there is still the ability to stop an AWS prior to it deciding to fire its weapon.

Mathematically, the risks posed by an AWS to a civilian population are no different from those of conventional weapons systems under certain constraints that must be employed (these constraints will be discussed in a later section). Even with conventional weapons systems, the risk of collateral damage is a function of the percentage of the built environment and population that is civilian within a conflict zone. Less precise weapons will have a higher risk of killing civilians than more precise weapons, given the same targeting information and percentage of the population in the conflict zone that is civilian. For more on this concept, see the next section.

So now, the concerns really become the ability of the AWS to properly discern civilians from enemy combatants, and proportionality. Proportionality of response is a policy consideration that can be made by the commander and selected as a parameter prior to deployment of the AWS. Or the AWS could be limited in terms of proportionality of response by constraining what it can and cannot do. We will discuss additional algorithmic policies in the next section.

Algorithmic Policies to Ensure the Ethical Use of AWS

Algorithmic policies provide the perimeter of what is and is not acceptable behavior for the AWS. The most important policies address the jus in bello concept of discrimination.

I recommend that in future warfare, AWS designers use Bayesian decision analysis (BDA) to provide a regulatory safeguard for discrimination. BDA uses Bayes' rule to compare the probability that a subject is an enemy combatant with the probability that the subject is a civilian. BDA uses the prior knowledge of the numbers of enemy combatants and civilians in an area from the Intelligence Preparation of the Battlefield (IPB) products. These numbers can be used to estimate the probability that a random subject encountered is an enemy combatant or a civilian. Consider the following example:

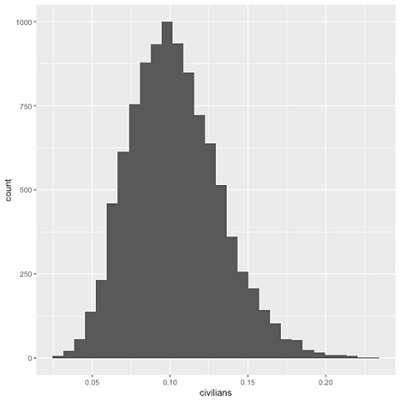

An AWS has a specificity of 99% and a sensitivity of 90%. Based on information from the IPB process, we estimate that most of the civilian population has vacated, leaving approximately 10% of the remaining population as civilian. We have some uncertainty in this estimate, and we represent our uncertainty using a Beta(11.5, 100) distribution (Figure 1).

Figure 1: Civilian population estimate from IPB with uncertainty. We estimate that approximately 10% of the remaining population is civilian; however, we have some uncertainty in our value. Our uncertainty places the lower bound at 5% and the upper bound around 20%. However, we believe most strongly that roughly 5-15% of the remaining population is civilian. This is modeled using a Beta(11.5, 100) distribution.

Given our estimate and uncertainty for the civilian distribution, we can also estimate the enemy combatant distribution. This is modeled as a 1 – Beta(11.5, 100) distribution (Figure 2).

Figure 2: Enemy combatant population estimate from IPB with uncertainty. We estimate that approximately 90% of the remaining population are enemy combatants; however, we have some uncertainty in our value. Our uncertainty places the lower bound at 80% and the upper bound around 95%. However, we believe most strongly that roughly 85-95% of the remaining population are enemy combatants. This is modeled using a 1 – Beta(11.5, 100) distribution.
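As a rough numerical check on these two priors, the Beta(11.5, 100) distribution can be summarized by simulation. The following is a minimal sketch in Python using only the standard library; the seed, the number of draws, and the helper name `central_interval` are arbitrary choices made for illustration. It reports the closed-form mean and an empirical 95% central interval for the civilian share and for its complement, the enemy combatant share.

```python
import random

ALPHA, BETA = 11.5, 100.0  # shape parameters quoted in the text

# Closed-form mean of a Beta(a, b) distribution: a / (a + b).
civilian_mean = ALPHA / (ALPHA + BETA)

random.seed(0)  # arbitrary seed, only to make the sketch repeatable
civilian_draws = sorted(random.betavariate(ALPHA, BETA) for _ in range(100_000))
# The enemy combatant share is the complement of each civilian draw.
enemy_draws = sorted(1.0 - p for p in civilian_draws)


def central_interval(sorted_draws, mass=0.95):
    """Empirical central interval taken from pre-sorted draws."""
    n = len(sorted_draws)
    tail = (1.0 - mass) / 2.0
    return sorted_draws[int(tail * n)], sorted_draws[int((1.0 - tail) * n) - 1]


print(f"mean civilian share: {civilian_mean:.3f}")
print("95% interval, civilian share:", central_interval(civilian_draws))
print("95% interval, enemy share:   ", central_interval(enemy_draws))
```

Note that 1 – Beta(11.5, 100) is itself a Beta distribution with the parameters swapped, Beta(100, 11.5), so the enemy combatant prior could equally be sampled directly.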

Next, we create two models: one that calculates the probability that a subject is a civilian, and one that calculates the probability that the subject is an enemy combatant.

Scenario 1: Subject is classified as a civilian

The AWS has classified the subject as a civilian and has a 99% specificity for classifying civilians. Based on the IPB, we are using the Beta(11.5, 100) model to represent the population that is likely civilian. What is the probability that the subject is actually civilian given our intelligence information about the number of civilians in the area?

In this example, we use P(Civilian|-) to denote the probability that the target actually is a civilian given that the model says the target is a civilian. Note that we use the plus (+) sign for the model’s prediction that someone is an enemy combatant (enemy) and a minus (-) sign for the model’s prediction that someone is a civilian.

If we don’t use any uncertainty, and just assume that 10% of the remaining population is civilian, we can use Bayes Rule to estimate P(Civilian|-) directly:

Thus, given the current intelligence, and the model’s specificity, we have a 91.7% probability that the target is actually civilian.
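One way to write out this calculation is the two-class form of Bayes' rule shown below. The symbols follow the notation above. The value P(-|Enemy) = 0.01 is an assumption: it is the value that reproduces the 91.7% figure when combined with the stated 99% specificity and the 10% civilian estimate.

```latex
\[
P(\text{Civilian}\mid -)
  = \frac{P(-\mid \text{Civilian})\,P(\text{Civilian})}
         {P(-\mid \text{Civilian})\,P(\text{Civilian}) + P(-\mid \text{Enemy})\,P(\text{Enemy})}
  = \frac{0.99 \times 0.10}{0.99 \times 0.10 + 0.01 \times 0.90}
  = \frac{0.099}{0.108} \approx 0.917
\]
```

The result is quite sensitive to the term P(-|Enemy). If that term were instead taken as one minus the stated 90% sensitivity, that is 0.10, the same formula would give 0.099/0.189, or roughly 0.524.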

We can also incorporate our uncertainty about the P(Civilian) and P(Enemy). To do this, we use R to simulate the Beta(11.5, 100) distribution for P(Civilian) and the 1-Beta(11.5, 100) distribution for P(Enemy).

Given our uncertainty, the current intelligence, and the model’s specificity, we now have a 95% credible interval for our P(Civilian|-) which spans from 85.8% to 94.8%, with the median of the distribution being 91.8%.
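A minimal sketch of such a simulation, written here in Python with only the standard library rather than R, might look like the following. The function names, seed, and number of draws are illustrative choices, and P(-|Enemy) = 0.01 is again an assumption chosen because it reproduces the 91.7% point estimate. Each draw from the Beta(11.5, 100) prior is pushed through Bayes' rule, and the interval is read from the sorted results.

```python
import random


def posterior(prior, p_obs_given_class, p_obs_given_other):
    """Two-class Bayes' rule: P(class | observation)."""
    numerator = p_obs_given_class * prior
    return numerator / (numerator + p_obs_given_other * (1.0 - prior))


def quantile(sorted_values, q):
    """Simple empirical quantile on a pre-sorted list, for 0 <= q <= 1."""
    index = min(len(sorted_values) - 1, int(q * len(sorted_values)))
    return sorted_values[index]


random.seed(2050)  # arbitrary seed so the sketch is repeatable
N_DRAWS = 100_000

P_NEG_GIVEN_CIVILIAN = 0.99  # specificity, from the text
P_NEG_GIVEN_ENEMY = 0.01     # ASSUMPTION: value that reproduces the reported 91.7%

# Point estimate with a fixed 10% civilian share.
point_estimate = posterior(0.10, P_NEG_GIVEN_CIVILIAN, P_NEG_GIVEN_ENEMY)

# Propagate the Beta(11.5, 100) uncertainty in P(Civilian) through Bayes' rule.
draws = sorted(
    posterior(random.betavariate(11.5, 100), P_NEG_GIVEN_CIVILIAN, P_NEG_GIVEN_ENEMY)
    for _ in range(N_DRAWS)
)
lower, median, upper = (quantile(draws, q) for q in (0.025, 0.5, 0.975))

print(f"point estimate P(Civilian|-): {point_estimate:.3f}")
print(f"median={median:.3f}, 95% interval=({lower:.3f}, {upper:.3f})")
```

Because the results are simulated, the interval endpoints will vary slightly with the seed and the number of draws.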

Scenario 2: Subject is classified as an enemy combatant

The AWS has classified the subject as an enemy combatant and has a 90% sensitivity for classifying enemy combatants. Based on the IPB, we are using the Beta(11.5, 100) model to represent the population that is likely civilian. What is the probability that the subject is actually an enemy combatant given our intelligence information about the number of civilians in the area?

In this example, we use P(Enemy|+) to denote the probability that the target actually is an enemy combatant given that the model says the target is an enemy combatant. Note that we use the plus (+) sign for the model’s prediction that someone is an enemy combatant (enemy) and a minus (-) sign for the model’s prediction that someone is a civilian.

If we don’t use any uncertainty, and just assume that 90% of the remaining population is enemy combatants, we can use Bayes Rule to estimate P(Enemy|+) directly:

Thus, given the current intelligence, and the model’s sensitivity, we have a 98.8% probability that the target is actually an enemy combatant.
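Written out in the same two-class form of Bayes' rule, the calculation might look as follows. The value P(+|Civilian) = 0.10 is an assumption: it is the value that reproduces the 98.8% figure when combined with the stated 90% sensitivity and the 90% enemy combatant estimate.

```latex
\[
P(\text{Enemy}\mid +)
  = \frac{P(+\mid \text{Enemy})\,P(\text{Enemy})}
         {P(+\mid \text{Enemy})\,P(\text{Enemy}) + P(+\mid \text{Civilian})\,P(\text{Civilian})}
  = \frac{0.90 \times 0.90}{0.90 \times 0.90 + 0.10 \times 0.10}
  = \frac{0.81}{0.82} \approx 0.988
\]
```

If P(+|Civilian) were instead taken as one minus the stated 99% specificity, that is 0.01, the same formula would give 0.81/0.811, or roughly 0.999.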

We can also incorporate our uncertainty about the P(Civilian) and P(Enemy). To do this, we use R to simulate the Beta(11.5, 100) distribution for P(Civilian) and the 1-Beta(11.5, 100) distribution for P(Enemy).

Given our uncertainty, the current intelligence, and the model’s sensitivity, we now have a 95% credible interval for our P(Enemy|+) which spans from 98.0% to 99.3%, with the median of the distribution being 98.8%.

Operational Constraints on AWS Use As Algorithm Policies

Policymakers need to decide what a reasonable limit of confidence is before the AWS can engage the target. Given jus in bello considerations, civilian lives must be protected. This means that misclassifying enemy combatants as civilians is much less of a concern than misclassifying civilians as enemy combatants. In practice, this means that the calculation in Scenario 1 is less important than the calculation in Scenario 2, above.

Thus, to protect civilians, we need to set the threshold for engagement for P(Enemy|+) very high. In other words, we need high confidence that a subject the model classifies as an enemy combatant actually is one. Since P(Enemy|+) + P(Civilian|+) = 1, P(Civilian|+) = 1 – P(Enemy|+). So the question becomes, what level of P(Civilian|+), that is, the probability that someone the model classifies as an enemy combatant is actually a civilian, are we willing to accept?

The answer depends on the circumstances and is at the heart of the principle of proportionality. Interestingly, P(Civilian|+) will increase as the civilian percentage of the population within a conflict zone increases. Likewise, P(Civilian|+) will decrease as the sensitivity of the AWS increases, and it will also decrease as the specificity increases.
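These trends can be checked with a short sketch. The Python below assumes the textbook pairing of error rates, in which the false positive rate among civilians is one minus the specificity; `p_civilian_given_positive` is a hypothetical helper name. Absolute values depend on which error rate is paired with which class, so they need not match the figures quoted above. The direction of each trend is the point.

```python
def p_civilian_given_positive(civilian_fraction, sensitivity, specificity):
    """P(Civilian | +) by Bayes' rule, with false positive rate = 1 - specificity."""
    flagged_civilians = (1.0 - specificity) * civilian_fraction
    flagged_enemies = sensitivity * (1.0 - civilian_fraction)
    return flagged_civilians / (flagged_civilians + flagged_enemies)


# Effect of the civilian share of the population (sensitivity 90%, specificity 99%).
for fraction in (0.05, 0.10, 0.25, 0.50):
    risk = p_civilian_given_positive(fraction, 0.90, 0.99)
    print(f"civilian share={fraction:.2f}  P(Civilian|+)={risk:.4f}")

# Effect of classifier performance at a fixed 10% civilian share.
for sens, spec in ((0.90, 0.99), (0.95, 0.99), (0.90, 0.999)):
    risk = p_civilian_given_positive(0.10, sens, spec)
    print(f"sensitivity={sens}  specificity={spec}  P(Civilian|+)={risk:.4f}")
```

Under these assumptions, improving specificity reduces P(Civilian|+) far more than a comparable improvement in sensitivity.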

We see this same concept play out with respect to weapons systems of all kinds. As the civilian percentage of the population within a conflict zone increases, so does the risk of killing civilians. As we use more targeted weapons systems, the risk of collateral damage, given good targeting information, goes down.

So far we have only dealt with targeting and discerning civilians from enemy combatants. There is also the question of targeting accuracy. If an AWS is a “better shot” than a human, and if it were able to take environmental conditions into consideration, then there may be less risk to civilians overall, simply due to the AWS being a better shot than a human. We can quantify how effective a shot the AWS is compared to humans, and we can include that in our Bayesian Decision Analysis.

The key to ensuring these policy considerations are executed is to have them coded into the AWS as part of its decision-making. This will ensure that the AWS applies the policies appropriately, and acts only in those situations that the policies permit. For instance, this can be as simple as stating in the decision logic that for lethal force to be applied, the AWS must have a P(Enemy|+) > x, where x is some socially acceptable probability that normally applies at times of war. Just as for other warfighters, the commander that deploys the AWS is ultimately responsible for its actions under the Law of Armed Conflict.
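The rule P(Enemy|+) > x can be sketched as a single comparison. In the Python below, `meets_policy_threshold` is a hypothetical name and x = 0.99 is a placeholder, since the text does not specify a value for x. The sketch only illustrates the gate itself, not any surrounding system.

```python
def meets_policy_threshold(p_enemy_given_positive, x):
    """Return True only when P(Enemy|+) strictly exceeds the policy threshold x."""
    for value in (p_enemy_given_positive, x):
        if not 0.0 <= value <= 1.0:
            raise ValueError("probabilities must lie in [0, 1]")
    return p_enemy_given_positive > x


X = 0.99  # ASSUMPTION: placeholder threshold, not a value given in the text

print(meets_policy_threshold(0.988, X))  # the Scenario 2 point estimate
print(meets_policy_threshold(0.995, X))  # a hypothetical higher posterior
```

With this placeholder threshold, the Scenario 2 point estimate of 98.8% would not satisfy the policy, which illustrates how the choice of x directly determines when the AWS may act.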

The most significant technical challenge at this time with AI visual identification is adversarial images: those images which fool the AI into performing a misclassification. When one thinks of adversarial images, one typically envisions facial recognition software that takes in images, rather than an AWS. However, as the AWS will need to operate on images, generally from an onboard camera, the AWS is still susceptible to adversarial images. Although an extensive treatment is beyond the scope of this article, it should be noted that in the future AI will need to become robust to adversarial images to be effective. Otherwise, it would be possible for a legal enemy combatant to wear textiles that cause them to be classified as a civilian. This would decrease the utility of the AWS.

The challenge with adversarial images is that this would not necessarily be a violation of the jus in bello concept of discrimination. If it were possible for a human to still discern that the subject is an enemy combatant, but for the AWS to think it was a civilian, that is a perfectly reasonable asymmetry. This would not be significantly different from using camouflage. So now the challenge becomes ensuring that an AWS does not inadvertently classify a civilian as an enemy combatant. This situation is less likely if the AWS is primarily trained to detect a small class of individuals as enemy combatants and everything else as not enemy combatant. Again, this is where the decision rules based on the Bayesian statistics and the real-world knowledge of the number of civilians is crucial. Thus, I would argue that it is highly unlikely, given these constraints, that an AWS would be in violation of jus in bello.

Conclusions

AWS are the future of warfare. Their use is a moral imperative for commanders, ensuring that commanders are able to safeguard the lives of their warfighters. The moral arguments against the use of AWS are overcome by policy considerations that become part of the software code, and ensure the AWS is operating within the Law of Armed Conflict. I have shown how Bayesian Decision Analysis can be used, in concert with data from the IPB process, to ensure the AWS makes appropriate decisions and takes actions that are in line with the commander’s intent and the Law of Armed Conflict.

Thus, for the AWS to be operationally effective, and to ensure jus in bello, the AWS needs to favor protection of civilians. This means that the commander that employs AWS needs to understand that the AWS must allow some enemy combatants to live to minimize civilian casualties. This means that AWS may not be the best option when fighting non-state actors or irregular militaries that do not adhere to concepts of discrimination under jus in bello.

Ultimately, just as with other warfighters, the commander that deploys an AWS will be responsible for its decisions and actions under the Law of Armed Conflict. This will help safeguard society from abuse by the AWS and ensure civilian control through the chain of command.

References

Ministry of Defence. 2013. “Manual of the Law of Armed Conflict (JSP 383) - GOV.UK.” August 2013. https://www.gov.uk/government/collections/jsp-383.

Reichman, Trevor W. 2014. “Bioethics in Practice - A Quarterly Column About Medical Ethics: Ethical Issues in Organ Allocation for Transplantation – Whose Life Is Worth Saving More?” The Ochsner Journal 14 (4):527–28.

Strawser, Bradley Jay. 2010. “Moral Predators: The Duty to Employ Uninhabited Aerial Vehicles.” Journal of Military Ethics 9 (4):342–68. https://doi.org/10.1080/15027570.2010.536403.

US Department of Defense. 2015. “Department of Defense Law of War Manual.” June 2015. https://www.defense.gov/Portals/1/Documents/law_war_manual15.pdf.