Overcoming the Death of Moore’s Law: The Role of Software Advances and Non-Semiconductor Technologies in the Future Defense Environment

Stuart Vanweele and Ralph Tillinghast

Introduction

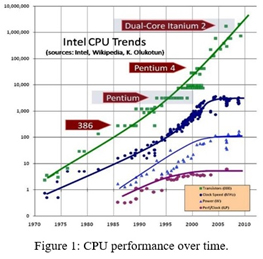

The spectacular growth of computers and other electronic devices created from silicon-based integrated circuits has swept all competing technologies from the marketplace. Moore’s Law, which states that the density of transistors within an integrated circuit, and therefore its speed, will double every two years, is expected to end before 2025 [1] [2] [3] as the minimum feature size on a chip bumps into the inherent granularity of atoms and molecules. While there are a number of promising technologies that may move clock speeds into the tens of GHz, switching speeds in silicon will eventually reach a limit. Figure 1 shows that clock speed has already plateaued [4]; performance improvements are now dependent on better instruction pipelines and multicore architecture.

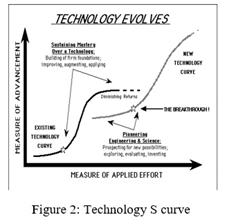

The end of Moore’s law can be seen as another example of a typical technological growth curve (Figure 2) [5]; it is now time to move on to new technologies. Current semiconductor technology is inherently fragile. Semiconductor circuits can only operate within a fairly narrow temperature range and are susceptible to electromagnetic pulse (EMP), as well as physical and radiation damage; shrinking feature sizes down to the nanometer range increases these vulnerabilities. Devices can be hardened by shock isolation, shielding, and redundant circuits; however, this adds cost and complexity and increases size and weight.

This paper reviews ways to extend semiconductor technology in the near term, as well as technologies which have been sidelined but are worth revisiting as a means of sidestepping the limitations of semiconductor technology. Technology recommendations are put forth as lead, shape, or watch. The Army should lead core technologies when it is imperative and when only the Army will or can lead; shape other technologies by leveraging industrial and academic work to meet Army-specific applications; and watch developing technology trends which may impact the Army mission. The following sections expand on technology areas that have the potential to overcome the end of Moore’s Law.

More Efficient Software

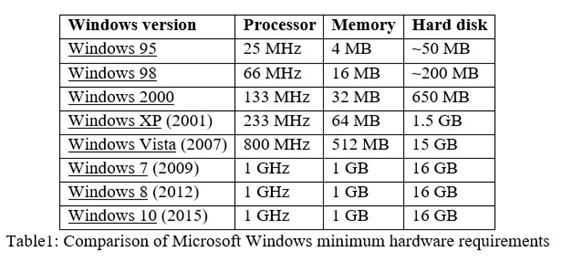

Faster, more efficient hardware has shifted the software developer’s focus away from writing efficient code. Wirth’s law, a corollary to Moore’s law, states that software is getting slower more rapidly than hardware is getting faster [6]. While this may be a bit of hyperbole, software bloat has detracted from hardware performance gains. Table 1 illustrates software bloat between different versions of Microsoft Windows [7]. While features have been added to each version, it would be difficult to argue that usability improvements have compensated for an almost thousandfold increase in minimum hardware requirements.

Modern programming environments contribute to the problem of software bloat by placing ease of development and portable code above speed or memory usage. While this is a sound business model in a commercial environment, it does not make sense where IT resources are constrained. Languages such as Java, C#, and Python have opted for code portability and software development speed above execution speed and memory usage, while modern data storage and transfer standards such as XML and JSON place flexibility and readability above efficiency. The Army can gain significant performance improvements with existing hardware by treating software and operating system efficiency as a key performance parameter with measurable criteria for CPU load and memory footprint. The Army should lead by making software efficiency a priority for the applications it develops. Capability Maturity Model Integration (CMMI) version 1.3 for development processes should be adopted across Army organizations, with automated code analysis and profiling integrated into development. Additionally, the Army should shape the operating system market by leveraging its buying power to demand a secure, robust, and efficient operating system for devices. These metrics should be implemented as part of the Common Operating Environment (COE).
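As a rough illustration of the trade-off between readable formats and compact ones, the following Python sketch encodes the same record once as JSON and once as a fixed-layout binary structure, then compares the sizes. The record fields (`lat`, `lon`, `alt_m`, `timestamp`) and the binary layout are hypothetical, chosen only for illustration; they do not come from any Army system or standard.

```python
import json
import struct

# Hypothetical position report; field names and values are illustrative only.
report = {"lat": 40.9581, "lon": -74.5432, "alt_m": 251.0, "timestamp": 1468540800}

# Assumed fixed layout: three little-endian doubles, then one unsigned 32-bit integer.
BINARY_LAYOUT = "<dddI"


def encode_json(rec):
    """Readable, self-describing encoding: field names travel with every record."""
    return json.dumps(rec).encode("utf-8")


def encode_binary(rec):
    """Compact encoding: both ends must agree on the layout in advance."""
    return struct.pack(BINARY_LAYOUT, rec["lat"], rec["lon"], rec["alt_m"], rec["timestamp"])


def decode_binary(blob):
    """Inverse of encode_binary for the assumed layout."""
    lat, lon, alt_m, timestamp = struct.unpack(BINARY_LAYOUT, blob)
    return {"lat": lat, "lon": lon, "alt_m": alt_m, "timestamp": timestamp}


json_size = len(encode_json(report))
binary_size = len(encode_binary(report))
print(f"JSON: {json_size} bytes, binary: {binary_size} bytes")
```

The binary form is smaller and cheaper to parse, at the cost of the flexibility and readability that make JSON and XML attractive during development. A measurable efficiency criterion of the kind proposed above would make that cost visible in each design decision.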

Improved Algorithms

Hardware improvements mean little if software cannot effectively use the resources available to it. The Army should shape future software algorithms by funding basic research on improved software algorithms to meet its specific needs. The Army should also search for new algorithms and techniques which can be applied to meet specific needs and develop a learning culture within its software community to disseminate this information.

Parallel Computing

Parallel computing technology is well established. Massively parallel systems are already in use as the IT backbone of Google, Amazon, and other internet companies. Likewise, modern supercomputers are massively parallel machines. The most capable supercomputer, located at the National Supercomputing Center in Wuxi, China, has over ten million computing cores operating in parallel [8]. This machine is also notable for using components designed and manufactured within the People's Republic of China (PRC), indicating that the PRC has reached parity with the West in semiconductor design and fabrication [9]. Parallel computer systems designed for use as servers are tailored to handling a high throughput of simultaneous tasks, such as Google search requests. Parallelism in High Performance Computing (HPC) is tailored to solving hard problems by splitting the problem into small pieces which can be solved in parallel. If a problem can be solved by techniques suitable for “embarrassingly parallel computing”, then massive speedups can be realized. Image processing is within this class, allowing GPUs to shorten rendering times by using many specialized processor cores. Other software techniques suitable for embarrassingly parallel computing include brute force searches, Monte Carlo simulation, and evolutionary algorithms. Amdahl's law and its successor, Gustafson’s law, define the upper limit on how much a task can be accelerated when a portion of the task is parallelized [10]. Parallel computers are constructed using the same technology as other semiconductor devices, so they have the same vulnerabilities and shortcomings. The Army should shape the outcome by requiring that software be developed to efficiently use parallel hardware. Often this is as simple as applying libraries and compiler optimizations that take advantage of parallelism.
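The two laws cited above can be written down in a few lines. The sketch below uses the standard textbook forms: Amdahl's law assumes a fixed problem size, while Gustafson's law assumes the problem grows with the number of cores. The 95% parallel fraction is an arbitrary value chosen for illustration, not a measurement of any real workload.

```python
def amdahl_speedup(parallel_fraction, cores):
    """Amdahl's law: speedup for a fixed-size problem.

    The serial part (1 - p) is not accelerated, so it bounds the speedup
    at 1 / (1 - p) no matter how many cores are added.
    """
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


def gustafson_speedup(parallel_fraction, cores):
    """Gustafson's law: scaled speedup when the problem grows with the machine.

    Here parallel_fraction is the share of run time spent in parallel work
    on the parallel machine.
    """
    serial_fraction = 1.0 - parallel_fraction
    return serial_fraction + parallel_fraction * cores


# Illustrative value only: assume 95% of the work can be parallelized.
p = 0.95
for n in (1, 10, 100, 1000):
    print(f"{n:>5} cores: Amdahl {amdahl_speedup(p, n):7.2f}x, "
          f"Gustafson {gustafson_speedup(p, n):8.2f}x")
```

Under Amdahl's law the 5% serial remainder caps the speedup at 20x regardless of core count, which is why reducing the serial portion of a program often matters more than adding hardware.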

Field Programmable Gate Arrays (FPGAs)

FPGAs are integrated circuits which can be dynamically configured to implement a logic circuit “in the field.” To quote from Xilinx, a major FPGA manufacturer, “Field Programmable Gate Arrays (FPGAs) are semiconductor devices that are based around a matrix of configurable logic blocks (CLBs) connected via programmable interconnects. FPGAs can be reprogrammed to desired application or functionality requirements after manufacturing. This feature distinguishes FPGAs from Application Specific Integrated Circuits (ASICs), which are custom manufactured for specific design tasks. Although one-time programmable (OTP) FPGAs are available, the dominant types are Static Random Access Memory (SRAM) based which can be reprogrammed as the design evolves.” [11] This ability to be reconfigured allows FPGAs to fill a gap between dedicated logic circuits and general purpose CPUs. FPGAs have become increasingly popular as a replacement for ASICs where the production quantity does not justify the expense of developing a custom chip. FPGAs are currently embedded into reprogrammable military hardware such as avionics, smart munitions, encryption devices, and digital programmable radios. FPGAs are also increasingly used in supercomputers, servers at datacenters, and in data mining applications. In one instance, Microsoft is using FPGAs as part of the page ranking system for the Bing search engine [12]. The FPGA-CPU hybrid system has twice the throughput of a conventional system, giving Microsoft a 30% cost savings. In the near term, much of the encryption required for both data at rest and data in transit should be moved to FPGAs, making the encryption and decryption process transparent to the rest of the computing system. As time progresses, more computing services, such as the communications stack and the navigation services, should be moved to FPGAs, freeing CPU resources. The Army should continue to shape new developments by building an in-house competency in developing products that incorporate FPGAs.

Photonics and Optical Computing

Optical computing holds the promise of speeds orders of magnitude faster than semiconductor devices; however, realizing that promise has been a daunting task. Proposals for optical computing were put forth as early as 1961 [13]; however, optical computing remained a theoretical concept until suitable lasers, fiber optics, and non-linear optics were developed. A resurgence of interest in optical computing came about in the late 1980s, spurred on by a concern that semiconductor logic could not be extended to higher frequencies and by an overly optimistic view of non-linear optics [14] [15] [16]. Unexpected roadblocks and the failure of optical computing research to produce a workable general-purpose computer discouraged researchers and soured investors. The article “All-Optical Computing and All-Optical Networks are Dead” [17], co-written in 2009 by a venture capital investor and an Association for Computing Machinery (ACM) Fellow, stated that without a mechanism to regenerate optical signals, large scale optical computing could not be done. To quote from the article “The past two decades have seen a profusion of optical logic that can serve as memories, comparators, or other similar bits of logic that we would need to build an optical computer. Much like traditional silicon logic, this optical logic suffers from signal loss—that is, in the process of doing the operation or computation, some number of decibels is lost. In optics, the loss is substantial—an optical signal can traverse only a few circuits before it must be amplified. We know how to optically amplify a signal. Indeed, optical amplification is one of the great innovations of the past 20 years and has tremendously increased the distances over which we can send an optical signal. Unfortunately, amplifying a signal adds noise. After a few amplifications, we need to regenerate the signal: we need a device that receives a noisy signal and emits a crisp clean signal. 
Currently the only way to build regenerators is to build an OEO (optical-electronic-optical) device: the inbound signal is translated from the optical domain into a digitized sample; the electronic component removes the noise from the digitized sample and then uses the cleaned-up digitized sample to drive a laser that emits a clean signal in the optical domain. OEO regenerators work just fine, but they slow us down by forcing us to work at the speed of electronics.” In comparison, semiconductor logic gates regenerate the signal each time it passes through a gate, eliminating signal degradation between stages. A search for optical regenerators did not indicate that there have been any breakthroughs between 2009 and the time of this writing. Development seems to have shifted to “optically assisted” devices, which use optical processing to speed computationally intensive operations, while accepting delays generated by conversions from electrical to optical signals and back again [18] [19]. These hybrid electro-optical circuits are being deployed for packet switching within the internet backbone. Given the strong financial incentives to increase bandwidth, there will be a constant push for increasingly capable optical processing devices. The Army should watch for improvements in optical communications and apply commercial developments to its internet backbone where appropriate. The Army should also shape the development of optical encryption / decryption devices to speed secure communications over WIN-T and other components of the tactical internet.

Optical interconnections between devices within a vehicle or fixed location would have the advantage of extremely high bandwidth with no ground loops, interference, EMP, or TEMPEST concerns. TOSLINK, an abbreviation of Toshiba Link, is a digital optical interconnect for home entertainment systems which was introduced in the 1980s and has been a successful competitor in the consumer electronics market for over 30 years. The Army should lead the development of optical interconnections for tactical application by establishing open standards for these connections and devices which will meet MIL-STD-810, as well as E3 and other relevant defense standards. The Army should shape the adoption of devices with optical interconnects by requiring optical interconnections using the Military Standard on new data processing equipment.

Image processing is a natural application for optical computing. A number of applications of potential use to the Army have already been developed. “Non-uniform image de-blurring using an optical computing system” [20] is an example of a technique which could be transitioned from a laboratory study to improved optics in the field. Unfortunately, the research was conducted at Tsinghua University in China, a potential adversary of the United States. The United States military and homeland defense establishment should aggressively search for improved image processing techniques using non-linear optics and lead development to keep our nation competitive in this area.

Quantum Computing and Code Breaking

Quantum computing has generated significant interest for its potential to solve, in polynomial time, problems in cryptology and other endeavors that are believed to be intractable for classical computers. Shor’s algorithm, presented in a paper entitled “Polynomial-Time Algorithms for Prime Factorization and Discrete Logarithms on a Quantum Computer” [21], if successfully implemented at scale, would effectively destroy Rivest–Shamir–Adleman (RSA) and other public key cryptographic systems, rendering much of the world’s IT infrastructure vulnerable to attack. In 2016, Shor’s algorithm was implemented using an ion-trap quantum computer to factor 15 into 5 and 3 [22]. While this is a trivial problem, it marks an important milestone, demonstrating that a quantum computer can be constructed and used to implement Shor’s algorithm. MIT, the university where the experiment was conducted, questioned whether this marked the beginning of the end for encryption schemes [23]. D-Wave, a startup company offering quantum computers, claims to have a 1,000-qubit system available [24]. If these claims are true, and the D-Wave computer can implement Shor’s algorithm, then RSA and other public key encryption algorithms may be on the verge of being broken. The Army should lead, acting proactively to ensure that digital assets are safe if and when public key encryption is broken. The Army should also factor a loss of reliable encryption by allied forces and by civilian employees and contractors within the defense establishment into its strategic plans.
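To make the factoring of 15 concrete, the sketch below walks through the number-theoretic reduction that Shor's algorithm relies on: find the multiplicative order r of a base a modulo N, then take greatest common divisors with a^(r/2) ± 1. The order is found here by classical brute force, which takes exponential time for large N; that single step is what a quantum computer would replace. The function names are illustrative and do not come from the cited experiment.

```python
from math import gcd


def multiplicative_order(a, n):
    """Smallest r > 0 with a**r % n == 1, for n > 1 and a coprime to n.

    Found by brute force; this is the step the quantum period-finding
    routine in Shor's algorithm replaces.
    """
    if gcd(a, n) != 1:
        raise ValueError("a must be coprime to n")
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def factor_from_order(a, n):
    """Try to split n using the order of a; return None if this base fails."""
    r = multiplicative_order(a, n)
    if r % 2 == 1:
        return None  # odd order: pick another base
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None  # a**(r/2) is -1 mod n: pick another base
    return tuple(sorted((gcd(half - 1, n), gcd(half + 1, n))))


print(multiplicative_order(7, 15))  # 4, since 7**4 = 2401 = 160 * 15 + 1
print(factor_from_order(7, 15))     # (3, 5)
```

Not every base works: with a = 14 the order is 2 and 14 is congruent to -1 modulo 15, so the function returns None and another base must be tried.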

Technological advances often “give with one hand, to take away with the other”. Quantum computing provides the means to break public key encryption, which relies on the difficulty of problems such as integer factorization and discrete logarithms. The field of post-quantum cryptography searches for algorithms which resist attack by a quantum computer. A number of candidate algorithms have been proposed; however, the field is in a state of flux [25]. The National Institute of Standards and Technology (NIST) has issued a call for proposals for new quantum-resistant cryptographic protocols to be used as a public key cryptographic standard [26]. Other groups are looking at replacing internet protocols with quantum-resistant protocols [27]. The Army should watch for developments of post-quantum cryptographic algorithms it can field. The Army should also make allowances for changing encryption systems by either adding capacity to the cryptographic subsystems of computers, radios, and other data processing devices, or modularizing the cryptographic subsystem with the expectation that it will become obsolete and need to be replaced over the life of the unit.

Nanotechnology-based Computers

Nanotechnology-based computers using some form of mechanical logic, such as “rod logic”, have been proposed [28]; however, there are no techniques currently available to manufacture such a device. Supplying energy to such a device and dissipating its heat are also unsolved problems. While nanoparticles and other nanostructures are transforming chemistry, biology, and materials science, there does not appear to be any effort to construct nano-machines which could act as a nano-computer. The Army should watch for developments if and when they appear.

Biologically Inspired Computing

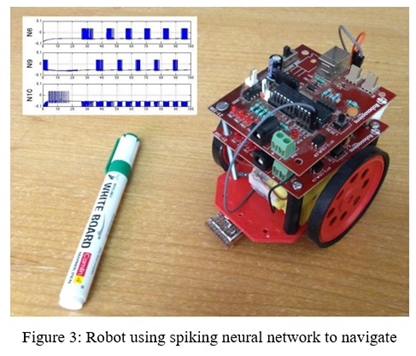

Biologically inspired computing covers a broad set of computing and robotics disciplines which take inspiration from nature. Artificial neural networks were first conceived in the 1950s as perceptrons, which acted as simple binary classifiers. Since that time, a variety of approaches to modeling biological networks have been attempted, with varying degrees of success. A particularly ambitious project has been the complete mapping of the nervous system of Caenorhabditis elegans, a common roundworm [29] [30]. This research, coupled with advances in modeling spiking neural networks [31] [32] [33], offers the possibility of recreating small biological brains inside a computer. Such artificial neural networks hold the promise of being more robust and capable than current robotic AI. Robots demonstrating spiking neural networks are being built [34]. Figure 3 illustrates a simple line-following robot which uses a spiking neural network for navigation control. The Army should watch spiking neural network developments, implementing advances as they become available.
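For readers unfamiliar with spiking models, the sketch below simulates a single neuron using the simple two-variable model published by Izhikevich, one of the models compared in [31]. The parameter values are the commonly quoted "regular spiking" settings; the input current, step size, and duration are assumptions chosen for illustration, and the simple Euler integration is not tuned for accuracy. It is not the network used in the robot of [34].

```python
def simulate_izhikevich(input_current, duration_ms=500.0, dt=0.5,
                        a=0.02, b=0.2, c=-65.0, d=8.0):
    """Euler simulation of one Izhikevich neuron; returns spike times in ms.

    v is the membrane potential (mV) and u is a slower recovery variable.
    Defaults a, b, c, d are the commonly quoted 'regular spiking' values.
    """
    v = c
    u = b * v
    spike_times = []
    for step in range(int(duration_ms / dt)):
        if v >= 30.0:
            # Spike: record it, reset the potential, bump the recovery variable.
            spike_times.append(step * dt)
            v = c
            u += d
        dv = 0.04 * v * v + 5.0 * v + 140.0 - u + input_current
        du = a * (b * v - u)
        v += dt * dv
        u += dt * du
    return spike_times


# Illustrative inputs: no drive versus a constant drive of 10 (model units).
quiet = simulate_izhikevich(input_current=0.0)
driven = simulate_izhikevich(input_current=10.0)
print(f"spikes with no input: {len(quiet)}, with constant input: {len(driven)}")
```

Unlike a perceptron, which produces an output for every input it is shown, this neuron carries information in the timing of discrete spikes: it stays silent with no input and fires repeatedly under a constant drive.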

Evolutionary algorithms have been in use since their introduction in the 1970s. The No Free Lunch theorem [35] shows that, averaged over all possible problems, “black box” optimization methods, including evolutionary algorithms and simulated annealing, perform no better than brute force search. However, evolutionary algorithms can exhibit impressive performance if the algorithm is tuned for the task. The Army should watch for developments in evolutionary algorithms which could be of benefit to national security.
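A minimal sketch of the idea is the (1+1) evolutionary algorithm below, which keeps a single parent bit string, mutates it, and keeps the child only if it is at least as fit. The fitness function (counting ones, often called OneMax) is a toy benchmark assumed here for illustration; it stands in for whatever task-specific objective a real application would supply.

```python
import random


def one_max(bits):
    """Toy fitness function: the number of ones in the bit string."""
    return sum(bits)


def one_plus_one_ea(n_bits=20, generations=5000, seed=0):
    """(1+1) evolutionary algorithm: one parent, one mutated child per generation.

    Returns the final individual and the fitness after each generation.
    """
    rng = random.Random(seed)
    parent = [rng.randint(0, 1) for _ in range(n_bits)]
    history = [one_max(parent)]
    mutation_rate = 1.0 / n_bits  # on average one bit flips per child
    for _ in range(generations):
        child = [bit ^ 1 if rng.random() < mutation_rate else bit for bit in parent]
        # Elitist selection: never accept a child that is worse than its parent.
        if one_max(child) >= one_max(parent):
            parent = child
        history.append(one_max(parent))
    return parent, history


best, history = one_plus_one_ea()
print(f"start fitness {history[0]}, final fitness {history[-1]} of {len(best)}")
```

The mutation rate of one expected bit flip per child is a standard choice for this benchmark. On a different problem the representation, mutation operator, and selection rule would all need retuning, which is the practical consequence of the No Free Lunch result.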

One issue that hampers biologically inspired computing techniques is the lack of procedures for formalizing a design and then verifying and validating the finished product. It is not clear how to conduct testing on a program that has been evolved or grown rather than coded in the traditional sense. The Army Materiel Command should lead the effort to develop processes for accepting and fielding software developed by non-traditional means, such as neural networks, evolutionary algorithms, and machine-developed code.

Fluidics and Microfluidics

Fluidic computers use the flow of a gas or fluid as a means to perform digital operations. While the operation of these computers is generally limited to the kilohertz range, the computer itself is extremely robust and is not affected by EMP or radiation, making it ideal for backup or failsafe equipment which must operate no matter the circumstance. Motive power for such a system can be compressed gas, and a range of sensors and actuators has been developed, making a fully fluidic control system feasible. In the past, the Army had an active fluidics program, fielding systems with fluidic components; “Fluidics-Basic Components and Applications”, a report from Harry Diamond Laboratories, a predecessor to the Communications-Electronics Research, Development, and Engineering Center (CERDEC), outlined the state of military fluidics in the late 1970s [36]. Fluidic research ended when microprocessors became readily available in the 1980s, making fluidic computers obsolete. Recently, however, there has been a renewed interest in fluidics and microfluidics, as low-cost micromachining techniques have made it possible to create small and capable fluidic computers which could be directly coupled to chemical and biological systems [37] [38]. The Army should shape the development of microfluidic computers for analysis of chemical and biological threats with the goal of having rapidly fieldable detection systems which can be operated in a remote location with minimum training and support. The Army should also lead in the development of emergency backup systems using microfluidics to provide degraded operation in case of a High Altitude EMP (HEMP) event or other incident.

Conclusion

The nine technology areas outlined above have the potential to circumvent the end of Moore’s law, keeping the Army’s lead in strategic technology. Each area can make a contribution, but, if combined, a much larger impact could be achieved. China is making alarming strides in key technologies such as parallel and optical computing. The Army should develop a unified research and development roadmap to ensure that the United States remains dominant in these strategic technologies.

Author Information

Dr. Stuart Vanweele, Mortar Fire Control, Armament Research Development Engineering Center (ARDEC), U.S. Army, Picatinny Arsenal, N.J.

Ralph C. Tillinghast, Lab Director, Collaboration Innovation Lab, Armament Research Development Engineering Center (ARDEC), U.S. Army, Picatinny Arsenal, N.J.

References

[1] "Moore’s Law Is Dead. Now What?," 13 May 2016. [Online]. Available: https://www.technologyreview.com/s/601441/moores-law-is-dead-now-what/. [Accessed 12 July 2016].

[2] M. M. Waldrop, "More than Moore," Nature, vol. 530, pp. 145-147, 11 February 2016.

[3] The Economist, "Technology Quarterly - After Moores Law," 12 March 2016. [Online]. Available: http://www.economist.com/technology-quarterly/2016-03-12/after-moores-law. [Accessed 15 July 2016].

[4] H. Sutter, "The Free Lunch Is Over: A Fundamental Turn Toward Concurrency in Software," 20 January 2010. [Online]. Available: https://www.cs.utexas.edu/~lin/cs380p/Free_Lunch.pdf. [Accessed 15 July 2016].

[5] G. Satell, "Facebook, Instagram and the Singularity," 18 April 2012. [Online]. Available: http://www.digitaltonto.com/2012/facebook-pinterest-and-the-singularity/. [Accessed 15 July 2016].

[6] N. Wirth, "A Plea for Lean Software," Computer, vol. 28, no. 2, pp. 64-68, February 1995.

[7] Wikipedia, "Software Bloat," 19 June 2016. [Online]. Available: https://en.wikipedia.org/wiki/Software_bloat. [Accessed 18 July 2016].

[8] J. Clark and I. King, "World's Fastest Supercomputer Now Has Chinese Chip Technology," 20 June 2016. [Online]. Available: http://www.bloomberg.com/news/articles/2016-06-20/world-s-fastest-supercomputer-now-has-chinese-chip-technology. [Accessed 23 August 2016].

[9] "Top 500 Supercomputer Sites June 2016," June 2016. [Online]. Available: https://www.top500.org/lists/2016/06/. [Accessed 18 July 2016].

[10] J. Gustafson, "Reevaluating Amdahl's Law," Communications of the ACM, vol. 31, no. 5, pp. 532-533, May 1988.

[11] Xilinx Inc, "Field Programmable Gate Array (FPGA)," 2016. [Online]. Available: http://www.xilinx.com/training/fpga/fpga-field-programmable-gate-array.htm. [Accessed 18 July 2016].

[12] T. P. Morgan, "How Microsoft Is Using FPGAs To Speed Up Bing Search," 3 September 2014. [Online]. Available: http://www.enterprisetech.com/2014/09/03/microsoft-using-fpgas-speed-bing-search/. [Accessed 18 July 2016].

[13] L. C. Clapp, "High speed optical computers and quantum transition memory devices," in Western Joint IRE-AIEE-ACM Computer Conference, 1961.

[14] A. Huang, "Architectural considerations involved in the design of an optical digital computer," Proceedings of the IEEE, vol. 72, no. 7, pp. 780-786, 1984.

[15] L. C. West, "Picosecond integrated optical logic," Computer, vol. 20, no. 12, pp. 34-46, 1987.

[16] J. Gourlay, T.-Y. Yang, J. Dines, J. F. Snowdon, and A. C. Walker, "Development of free-space digital optics in computing," Computer, vol. 31, no. 2, pp. 38-44, 1998.

[17] C. Beeler and C. Partridge, "All-Optical Computing and All-Optical Networks are Dead," ACM Queue, vol. 7, no. 3, pp. 10-11, 2009.

[18] A. E. Willner, S. Khaleghi, M. Reza Chitgarha and O. Faruk Yilmaz, "All-optical signal processing," Journal of Lightwave Technology, vol. 32, no. 4, pp. 660-680, 2014.

[19] D. Woods and T. J. Naughton, "Optical computing," Applied Mathematics and Computation, vol. 215, no. 4, pp. 1417–1430, 2009.

[20] T. Yue, J. Sou and Q. Dai, "Non-uniform image deblurring using an optical computing system," Computers & Graphics, vol. 37, no. 8, pp. 1039-1050, 2013.

[21] P. W. Shor, "Polynomial-time algorithms for prime factorization and discrete logarithms on a quantum computer," SIAM Review, vol. 41, no. 1, pp. 303-332, 1999.

[22] T. Monz, D. Nigg, E. A. Martinez, M. F. Brandl, P. Schindler, R. Rines, S. X. Wang, I. L. Chuang and R. Blatt, "Realization of a scalable Shor algorithm," Science, vol. 351, no. 6277, pp. 1068-1070, 2016.

[23] J. Chu, "The beginning of the end for encryption schemes," 3 March 2016. [Online]. Available: http://news.mit.edu/2016/quantum-computer-end-encryption-schemes-0303. [Accessed 20 July 2016].

[24] D-Wave, "The D-Wave Quantum Computer," 20 March 2016. [Online]. Available: http://www.dwavesys.com/sites/default/files/D-Wave-brochure-Mar2016B.pdf. [Accessed 20 July 2016].

[25] D. J. Bernstein, "Introduction to post-quantum cryptography," in Post-quantum cryptography, Berlin Heidelberg, Springer, 2009, pp. 1-14.

[26] D. Moody, "Post-Quantum Cryptography: NIST's Plan for the Future," 2016. [Online]. Available: http://csrc.nist.gov/groups/ST/post-quantum-crypto/documents/pqcrypto-2016-presentation.pdf. [Accessed 21 July 2016].

[27] J. W. Bos, C. Costello, M. Naehrig and D. Stebila, "Post-quantum key exchange for the TLS protocol from the ring learning with errors problem," in IEEE Symposium on Security and Privacy, San Jose, CA, 2015.

[28] R. C. Merkle, "Two types of mechanical reversible logic," Nanotechnology, vol. 4, no. 2, pp. 114-131, 1993.

[29] The C. elegans Research Community, "WormBook," [Online]. Available: http://www.wormbook.org. [Accessed 22 July 2016].

[30] Albert Einstein College of Medicine, "Wormatlas," [Online]. Available: http://www.wormatlas.org/. [Accessed 22 July 2016].

[31] E. M. Izhikevich, "Which Model to Use for Cortical Spiking Neurons?," IEEE Transactions on Neural Networks, vol. 15, no. 5, pp. 1063-1070, 2004.

[32] M. Suzuki, T. Tsuji and H. Ohtake, "A model of motor control of the nematode C. elegans with neuronal circuits," Artificial Intelligence in Medicine, vol. 35, no. 1, pp. 75-86, 2005.

[33] S. Furber and S. Temple, "Neural systems engineering," Journal of the Royal Society Interface, vol. 4, no. 13, pp. 193-206, 2007.

[34] C. Shetty, S. Nitchith, R. Rishabh, S. R. Nandakumar, P. Shah, S. Kulkarni and B. Rajendran, "Live demonstration: Spiking neural circuit based navigation inspired by C. elegans thermotaxis," in IEEE International Symposium on Circuits and Systems (ISCAS), Lisbon, Portugal, 2015.

[35] D. H. Wolpert and W. G. Macready, "No free lunch theorems for optimization," IEEE Transactions on Evolutionary Computation, vol. 1, no. 1, pp. 67-82, 1997.

[36] J. W. Joyce, "Fluidics: basic components and applications," [Online]. Available: http://www.dtic.mil/dtic/tr/fulltext/u2/a134046.pdf. [Accessed 22 July 2016].

[37] W. Thies, J. P. Urbanski, M. Cooper, D. Wentzlaff, T. Thorsen and S. Amarasinghe, "Programmable microfluidics," 2007. [Online]. Available: http://groups.csail.mit.edu/cag/biostream/talks/microfluidics-stanford-07.pdf. [Accessed 22 July 2016].

[38] W. H. Minhass, P. Pop, J. Madsen and T.-Y. Ho, "Control synthesis for the flow-based microfluidic large-scale integration biochips," in Design Automation Conference (ASP-DAC), 2013.
