Three Emerging Issues to Keep You Up at Night

Richard Kaipo Lum

Introduction

Anxieties about a nuclear-tipped confrontation with North Korea. A revanchist and aggressive Russia undermining the post-Cold War order. An ascendant and confident China seeking increased control over its own population and pursuing new access to regional and global resources, powered by a narrative of reclaiming its “rightful place.” Semi-amorphous non-state actors digitally and physically challenging the Westphalian system and inciting terrorism around the world. A US foreign policy that is unpredictable and volatile, framed in traditionally isolationist-style rhetoric yet filled with threats of direct military action.

The future of international security hasn’t been this… exciting since the formative days of the Cold War.

While the above-mentioned challenges and other rising security issues are enough to keep anyone from sleeping well these days, there is, unfortunately, an expanding host of emerging issues of which decision makers should take note. Doing good foresight work means never shying away from the deeply disturbing stuff, and in that spirit, this article introduces three emerging issues that promise to have deep impacts on the future. As emerging issues, they are in various stages of evolution; they are not yet part of the new normal. Collectively, they are part of the rapidly expanding challenge set that, in part, will define our new security era.

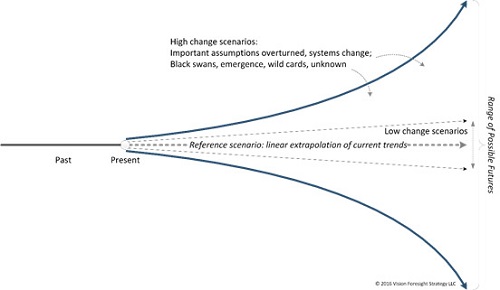

Figure 1: Emerging Issues Help Explore the Bands of High Change

Three Emerging Issues

Unlike trend work, in which we identify important historical changes and extrapolate their trajectories into various futures, with emerging issues we work to anticipate things that may have an important future role if they continue to mature. Since “the future” does not actually exist yet, uncertainty is inherent in all our discussions about “it.” Emerging issues are thus about the possible, novel threats and opportunities that we need to account for in our foresight work.

The following emerging issues were developed from some of our recurring work with foresight and defense professionals mapping the futures of conflict. They include: Involuntary Militia; AI Irregular and Mercenary Forces; and Chimeric Pandemic.

Involuntary Militia

With the digitization of society and the emergence of cyber as an attractive “battlespace” for activities, the civilian realm will become a preferred domain for competition and conflict. At the same time, cheap and pervasive robotics and AI will drive the creation of physical and digital swarms of companions for everyday citizens, performing a variety of personal functions – security, mentoring, and mental health. As civilian populations and infrastructure are increasingly targeted by an expanding array of state and non-state actors, these personal digital entourages of citizens will begin to engage in active personal defense and even counter-IO. This recurring – if often indirect – interaction between bad actors and civilians will further blur the lines between civilian and combatant, creating engaged civil combatant status: civilians who are involuntarily – and sometimes unknowingly – engaged in the defense of themselves and their communities against myriad bad actors.

This emerging issue is what we call a “disruptive combination.” By combining two or more discrete emerging issues, we can explore their intersection and examine the less obvious second order disruptions that may emerge. Involuntary Militia is thus a disruptive combo of two issues identified in the 2017 EI4CS report: Everyone’s Got an Entourage and Conflict Shifting to a Civilian Center of Gravity.

AI Irregular and Mercenary Forces

The role of machines is expanding daily. From botnets used to conduct cyber disinformation campaigns to tiny robots that can search debris to find survivors after an earthquake to agricultural robots that monitor and harvest crops, machines are already being used as a workforce aid for a variety of industrial, societal and military purposes. As artificial intelligence and machine cognition continue their rapid advance, humans will increasingly rely on them to gain competitive advantage in these spaces. This includes the battlefield, where both state and non-state actors seek to leverage various forms of AI networks as part of mercenary or irregular forces to conduct independent and semi-autonomous operations, including the targeting of human combatants. The evolution of cyberspace as a critical operational domain, the global conversion of everything to IoT, and the proliferation of machine-controllable devices and mobile platforms will make the elevation of AI as a (hopefully subordinate) combat arm almost irresistible.

Chimeric Pandemic

Stoking the fears of technophobes everywhere, the mainstreaming of powerful genetic tools like CRISPR raises the very real specter of amateur bioengineers inadvertently setting loose dangerous new synthetic pathogens. At the same time, more credentialed researchers are advancing the use of genomic manipulation to create animal and plant chimeras, breeding existing lifeforms that carry foreign genes and express transplanted traits. This leads down a variety of agnostic, beneficial and malignant paths, including industrial transplant organ farming of bioengineered animals, synthetic and highly valuable biogenetic traits for human performance enhancement, and new weapons such as synthetic viruses and genetically modified animals for use on the battlefield. Legislatively suppressed in the West but government-supported and jealously guarded by rising competitors, the black market for synth-chimeras quickly evolves from a sprawling cottage industry to a somewhat more consolidated market in less regulated parts of the world. Eventually, chimeric creatures and bio-modified living organisms threaten to “infect” and permanently transfigure existing ecosystems in ways we cannot imagine.

This emerging issue is another disruptive combo, this time combining DIY Bio Pandemic (see EI4CS 2017) and Chimeric Menagerie (Global Security Forecast 2018, forthcoming).

Implications

These emerging issues may strike some as science fiction, yet each is extrapolated from real-world developments and experiments that are occurring right now. While the future may not end up producing these three case studies in the exact way they are forecast here, the determined push toward these issues should raise very real concerns among those responsible for preparing us for tomorrow. At the very least, they pose a number of questions that need to be explored.

Involuntary Militia: the American tradition of civil defense has its roots in organizing civilians in wartime – today it is employed largely to respond to natural disasters; how could civil defense be reimagined in an era in which everyone is an engaged civil combatant? What pressures do these changes put on the traditional American divides between civilian and military, law enforcement and military?

AI Irregular and Mercenary Forces: what is Joint doctrine on how to employ AI irregular forces? How should US forces distinguish between “friend,” “foe,” and “neutral” AI forces? How will states be able to keep up with private organizations in developing effective AI forces? What kinds of conflicts will cheap and affordable AI-for-hire enable across the military and private realms?

Chimeric Pandemic: what new conflict actors will chimeric markets attract? Which traditional actors will be drawn to them? Just as machines are seen as levelers, as were firearms before them, what potential is there for biology to level conflict playing fields? What should be US policy concerning the augmentation of soldiers with chimeric body parts? How do we begin to prepare for chimeric or synthetic viruses and gene-specific targeting?

A very real challenge is that we will not face these issues one-by-one, in neatly compartmented silos. Nor will each evolve in a vacuum. The real stressors arise from having to operate in a world that features all of these and more. Considering futures that feature multiple such issues, we have to explore additional questions: when will we be ready to engage opponents that are augmented by both machines and biology? How do nations deal with super-enabled individuals leveraging AI irregular forces across international boundaries? How will AI change how humans operate in society and in times of peace and conflict? How do humans make sure they maintain control of AI to prevent it from being abused or misused, and what does that look like? Just how deeply will we need to change the way our institutions sense and adapt to change in order to compete effectively? Which competitors across the landscape stand to gain the most from embracing these emerging developments?

If those observers who calculate that we are in the midst of exponential technology change are correct, then some of our traditional tools for forecasting technology and future threats such as these are going to be insufficient for the task. As the diversity of futures expands before us, we need a broader range of tools and approaches to develop the insight we need to shape and prepare for the future.

Critical Foresight

There are many different methods for thinking about the future, and different questions that we ask of it. Some of these approaches are what we call telescopic; that is, they try to see a more specific thing, more clearly, at a much farther distance. Other approaches are panoramic: they try to see more of the entire vista that opens up before us. The telescopic is trying to get the future right. The panoramic, in contrast, is trying to identify more of the threats and opportunities that might manifest in the future. While both approaches have value, good foresight work is more often than not about the panoramic, typically called on to help decision makers wrestle with complex and open-ended issues that don’t commend themselves to point forecasts.

The three emerging issues presented here are not whole futures unto themselves; they are building blocks of more coherent forecasts. They represent one of the many methods we can use to take a more panoramic view of the future and better explore those bands of high change scenarios. As change along certain dimensions accelerates, we need to explore many more of these possible futures, to engage with futures much more aggressively than in the past.

The US will not better prepare itself for “the future” by trying harder and harder to narrow things down to a single clear future (which does not exist). It needs to do the opposite: identify more of the possibilities that logically may emerge from those spaces of high change. It is only in those places that we will find the truly disruptive threats we haven’t anticipated and the truly game-changing opportunities that do not presently exist. In the end, our longer-term futures are more likely to be defined by those emerging issues than to resemble the familiar world of today.