The Role of Judgment (Part 1)

Carey W. Walker and Matthew J. Bonnot

Introduction

The Army is very liberal in its use of the word judgment within doctrine. You can find it listed 29 times in ADRP 6-22, Army Leadership, and 31 times in ADRP 6-0, Mission Command. The Army places great emphasis on the importance of judgment, but does little to explain the concept. The purpose of this two-part series is to demystify the concept of judgment. What is it? How do you develop it? Why does it fail? And, most importantly, how do you get better at it? We illustrate the two-part article with a series of examples the readers can easily relate to, both military and civilian.

Part one in the series answers the first three questions: What is judgment? How does judgment work? Why does judgment fail? We define judgment as an informed opinion that uses intuitive and deliberate thinking (System 1 and System 2) to evaluate situations and draw conclusions. We next discuss the judgment process. We examine the two categories of intuition, expert (skilled) and heuristic. Then we look at the relationship between intuitive and deliberate thinking. Finally, we conclude with discussions on how judgment can backfire.

“Your Judgment is All Jacked Up”

These words echoed through my mind as my company commander railed against me for our less-than-perfect performance on the IG inspection of our company field sanitation kit. It seems the hand pressure sprayer had some moisture in it. As a brand-new second lieutenant assigned the extra duty of field sanitation officer, I was more than happy just to find the sanitation kit with its associated pieces and parts. I never thought to open the sprayer and look inside. I was mortified when the NCO inspector tipped it over and some gunky fluid dripped out.

Okay, the sprayer was dirty. I saw it. But did that really mean my judgment was “jacked up”? I did not know anything about sprayers, but I did know how to clean my weapon. A dirty barrel on an M16 would not pass muster and I should have known a gunky sprayer was no better. Why didn’t I look inside it? Maybe my judgment was bad.

This was my first introduction to the concept of judgment within the military. I had little understanding of the word back then and it is easy to see why people still struggle with its meaning today. Military doctrine does an excellent job of highlighting the importance of judgment – Success in operations demands timely and effective decisions based on applying judgment to available information and knowledge[1] – but comes up conspicuously short on explaining the concept. We hope to remedy that shortfall with this paper. Our purpose is to demystify the concept of judgment. What is it? How do we develop it? Why does it fail? And, most importantly, how do we get better at it?

What is Judgment?

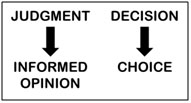

People tend to use the words judgment and decision interchangeably. When we state someone used poor judgment, we typically mean he or she made a bad decision that resulted in a poor outcome. By using the words synonymously, we tend to confuse process with outcome. Judging is a cognitive process that involves evaluating a situation and drawing a conclusion, resulting in an opinion.[2] The output of a judgment, therefore, is an informed opinion, not a decision. When a person makes choices from among several options, you then have a decision (see Figure 1).[3] Consequently, judgment (opinion) and decision (choice) have a symbiotic relationship. When you combine the two, you have decision making.

Figure 1. Source: The Authors

Given these definitions, let’s return to our earlier example of the failed IG inspection and take a closer look at my actions. When the company commander designated me, the new guy, as the unit field sanitation officer, I quickly concluded I was the first officer in the company to perform this duty in a long time (probably since the last IG inspection). Consequently, there was no one to walk me through my responsibilities. The supply sergeant, however, had a hand receipt and I used it to inventory the field sanitation kit. To my relief, I found all the associated components on hand and everything in working order to the best of my knowledge (which was limited). Finally, I read the existing field manual on unit field sanitation to ensure I was conversant on the topic and ready for the inspection.

Based on this information, how did I apply judgment and what was my decision? Where did I mess up and why? My guess is you have already come to some conclusions, but let’s dig a little deeper into the judgment process before answering these questions.

How Does Judgment Work?

People form opinions (i.e., make judgments) using two kinds of thinking, one that is fast and intuitive and the other that is slow and deliberate. Researchers call this perspective the Dual Processing Theory, which traces its origins back to the 1970s and 1980s.[4] Since the early 2000s, the cognitive and social psychology communities have used the terms System 1 and System 2 to describe these two approaches to thinking.[5] These two terms entered popular culture in 2011 with the publication of the best-selling book, Thinking, Fast and Slow, by Nobel laureate and cognitive psychologist Daniel Kahneman.[6]

According to Kahneman, System 1 is the fast, effortless, and often emotionally charged form of thinking governed by habit.[7] In common vernacular this kind of thinking goes by many names such as intuition, instinct, hunch, feeling, inspiration, gut reaction, and sixth sense. Many people like to attribute mystical powers to intuition as if it is some type of psychic skill when in fact it is nothing more than a form of recognition.[8] Here is how it works.

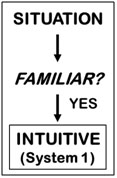

When we assess an event or situation to form a judgment, we begin with System 1 thinking. It is the default setting for reasoning because it is automatic, fast, and very good at short-term predictions.[9] The one prerequisite System 1 has for jumping into a situation and taking charge of thinking is that it must detect some level of familiarity with the existing situation or event (see Figure 2). If this occurs, its opposite partner, System 2 or deliberate thinking, is happy to remain “in a comfortable low-effort mode, in which only a fraction of its capacity is engaged.”[10] The reason for System 2’s reluctance to get involved is that it takes purposeful action to begin the deliberate thinking process. Thinking hard requires time, effort, and brainpower. As a result, we tend to save our deliberate thought for moments of exigency when demands are great. Because of this diffidence toward hard thinking, System 2, which still oversees all judgments, is typically content with accepting plausible explanations generated by intuitive thought.[11]

Figure 2. Source: The Authors

When we describe System 1 as “automatic,” we mean it generates these plausible explanations below the level of consciousness. In other words, you do not realize it is happening. You simply are aware of the judgment or decision as a moment of clarity when the thought “pops” into your mind. If you could look inside your subconscious thought process, this is what you would see. When an event or activity occurs and you start to evaluate the situation, your mind immediately begins a recognition process based on relevant cues or indicators in the environment. These cues act as sensory signals that connect the activity to information stored in your memory, i.e., a past experience. Your brain responds to this pattern recognition with a chemical reaction that generates emotions such as fear, anxiety, happiness, or satisfaction. If your subconscious mind recognizes the relevant cue and associates it with a related experience, your body responds with a positive emotion. Your “gut” or “sixth sense” tells you that you have seen this situation before and you know what to do (see Figure 3).[12]

While System 1 is helping you form your judgment, System 2 is in observation mode. Kahneman explains:

…Systems 1 and 2 are both active whenever we are awake. System 1 runs automatically and… continuously generates suggestions for System 2…When all goes smoothly, which is most of the time, System 2 adopts the suggestions of System 1 with little or no modification…When System 1 runs into difficulty, it calls on System 2 to support more detailed and specific processing that may solve the problem of the moment (see Figure 3).[13]

Figure 3. Source: The Authors

When does your System 1 call for help? When it is surprised and does not know what to do. Surprise forces your mind into a deliberate and conscious thinking mode with System 2 actively searching your memory for a story that helps make sense of the unexpected event.[14] What causes the surprise? Usually, it is a lack of experience. You are seeing something new or novel for the first time. When this occurs in your initial assessment and System 1 fails to recognize any cues, your thinking immediately shifts to System 2 for a deliberate judgment or decision. In addition to a lack of experience, System 1 calls for help when it finds itself in a complex, confusing, or uncertain operating environment. When your intuition cannot make immediate sense of the surroundings, it bails out and System 2 steps in to correct intuitive predictions (see Figure 4).[15]

Figure 4. Source: The Authors

To summarize what we have covered so far, applying judgment means using one’s cognitive skills to come to an informed opinion. We use the word “informed” because judgment involves evaluating a situation and drawing a conclusion using both intuitive (System 1) and deliberate (System 2) thinking. System 2, as the conscious portion of cognition, is the “watchdog” for the overall judgment and decision-making process, though it often sits in the background and takes little action beyond acknowledging the work of System 1, the automated and mostly subconscious portion of thinking. As you can see, System 1 wields a lot of influence, so it is in our interest to gain a better understanding of how intuition works.
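For readers who think in procedures, the hand-off between the two systems can be sketched as a few lines of Python. This is only a toy illustration under our own assumptions, not a model drawn from Kahneman or from doctrine: the names `Judgment`, `form_judgment`, `memory`, and `think_it_through` are hypothetical, and "familiarity" is reduced to a simple lookup of cues in stored experience.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Judgment:
    opinion: str
    system: int  # 1 = intuitive, 2 = deliberate


def form_judgment(
    cues: List[str],
    memory: Dict[str, str],
    deliberate: Callable[[List[str]], str],
    confusing_environment: bool = False,
) -> Judgment:
    """Toy model: System 1 answers when a cue is familiar; otherwise System 2 does."""
    if not confusing_environment:
        for cue in cues:
            if cue in memory:
                # Familiar cue: System 1 supplies the stored response and
                # System 2 simply endorses it.
                return Judgment(memory[cue], system=1)
    # Surprise (no familiar cue) or a confusing environment: System 1 calls
    # for help and System 2 works the problem deliberately.
    return Judgment(deliberate(cues), system=2)


# Hypothetical stored experience and cues, invented for illustration.
memory = {"smoke from kitchen": "attack the fire with water"}


def think_it_through(cues: List[str]) -> str:
    return "study the problem: " + ", ".join(cues)


familiar = form_judgment(["smoke from kitchen"], memory, think_it_through)
novel = form_judgment(["unfamiliar chemical smell"], memory, think_it_through)
```

In this sketch the deliberate path runs only when recognition fails or the environment is flagged as confusing, which mirrors the point that intuition is the default and deliberate thought is the exception.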

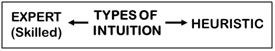

Expert Intuition. The intuitive predictions of System 1 fall into two broad categories, expert (also known as skilled) and heuristic (see Figure 5). The driver of expert intuition is the experience base of the individual. Malcolm Gladwell notably described expert intuition in his book, Outliers, when he discussed the “10,000-hour rule.”[16] He cited the work of Anders Ericsson, a researcher at Florida State, who found that deliberate practice, combined with coaching, regular feedback, and a stable environment, leads to the development of expertise if conducted over a span of eight to ten years (i.e., 10,000 hours).[17] Gladwell used numerous examples of dedicated practitioners to illustrate the 10,000-hour rule, including Mozart, the Beatles, and Bill Gates.

Figure 5. Source: The Authors

In Gary Klein’s book on expert intuition, Sources of Power, he eschewed experimentation and studied professionals in natural work environments. In his examination of long-serving firefighters, he discovered that when assessing a situation, forming a judgment, or making a decision, senior firefighters selected the first strategy that worked, a process known as satisficing. They did not look for the best course of action. They did not attempt to optimize the situation by comparing alternatives. They drew on their extensive experience base and looked for the first plausible solution.[18]

In the first phase of this “expert” approach to intuition, firefighters, using System 1, recognized cues or patterns in the environment. The cues gave these highly skilled practitioners access to information stored in memory (this is the “emotion link” mentioned above), and the information provided a potential answer to the problem or challenge. If the cues indicated the situation was typical and familiar, the firefighters developed a tentative plan by an automatic (i.e., unconscious) function of associative memory. In other words, they immediately knew what to do based on past experience. In the second phase of expert or skilled intuition, the firefighters shifted from intuitive thinking to a deliberate thinking mode (i.e., System 2) to assess the intuitive judgment.[19] Klein calls this deliberate thinking process for evaluating a single course of action mental simulation.[20] The purpose for conducting a mental simulation is to validate the intuitive recommendation by assessing its strengths and weaknesses, and making modifications if necessary. Based on this final assessment, which typically occurs very quickly, skilled practitioners either accept or reject the course of action. If rejected, they automatically return to the first step of expert intuition and select the next course of action that will work, i.e., the concept of satisficing.

Klein illustrates expert intuition in his book with a story titled, “The Sixth Sense.” Firefighters are engaging a simple house fire in a kitchen. The lieutenant leading the hose crew sprays water on the fire, but it has little effect. He does it again with similar results. He senses something is not right and orders his men out of the building. Moments later the kitchen floor collapses. The fire was burning in the basement below, not the kitchen. The cues the lieutenant expected to see in this routine kitchen fire were not present. The fire did not respond as expected as firefighters doused it with water. The lieutenant felt anxiety as the chemical reactions in his brain told him something was wrong. It was time to back off and select the next course of action.[21]
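The two-phase process described above, in which intuition proposes a course of action and a mental simulation accepts or rejects it, can be sketched as a short loop. The Python below is a minimal illustration under our own assumptions, not Klein's model: the function names `satisfice` and `simulate` and the sample courses of action are hypothetical. The point it shows is that options are evaluated one at a time, in the order intuition offers them, and the search stops at the first one that works.

```python
from typing import Callable, Iterable, Optional


def satisfice(
    candidates: Iterable[str],
    mental_simulation: Callable[[str], bool],
) -> Optional[str]:
    """Return the first course of action that survives mental simulation.

    candidates: courses of action in the order System 1 suggests them.
    mental_simulation: a deliberate (System 2) check of a single option.
    """
    for course_of_action in candidates:
        if mental_simulation(course_of_action):
            # First workable option: accept it and stop searching.
            return course_of_action
    # Every suggestion was rejected; options are never compared side by side.
    return None


# Hypothetical inputs, loosely based on the kitchen-fire story.
suggested = ["spray the kitchen fire", "pull the crew out and reassess"]
fire_responds_to_water = False  # the anomaly the lieutenant noticed


def simulate(course_of_action: str) -> bool:
    if course_of_action == "spray the kitchen fire":
        return fire_responds_to_water
    return True


chosen = satisfice(suggested, simulate)
```

An optimizing process would instead score every candidate and pick the highest; the loop above never ranks the alternatives, which is what distinguishes satisficing from a concurrent comparison of options.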

The expert judgment and decision-making process typically occurs so quickly that the user is hard pressed to explain its occurrence. From the user’s perspective, it simply happens, which adds to the general mystery of intuition. This process of skilled intuition also raises other questions. At what point does an individual cross over to the “expert” realm of thinking? The 10,000-hour rule is an attention-grabbing idiom, but how does one actually measure it? What about the vast majority of the population that will never come close to achieving “expert” credentialing? Is their intuitive thinking of a lower quality or grade? Do the majority of people operate with “regular” intuition while the experts speed along with “premium grade” intuitive thinking?

Clearly, we are not all experts and most of us would be hard pressed to validate our credentials as military leaders using the 10,000-hour measuring stick. Yes, we might have the years of experience, but chances are we would come up short on the eight to ten years of focused and dedicated practice in a specific field of study. Military officers tend to be generalists, not specialists. Luckily, our System 1 mode of thinking does not require “expertise” to operate. It simply requires some level of experience to enable the intuitive brain to recognize a situation and develop a response to the question of what to do. When the brain lacks a deep well of expertise, it formulates alternative procedures known as heuristics “that help find adequate, though often imperfect, answers to difficult questions.”[22] Therefore, even though we might lack the expertise to qualify as “skilled” intuitors, our System 1 mode of thinking offsets this shortfall by using heuristic intuition.

Heuristic Intuition. Think of cognitive heuristics as mental shortcuts developed by the brain to formulate opinions, make decisions, and answer tough questions. The key word is “shortcuts.” Everyone makes a myriad of decisions throughout the day. Our brains, as well as our patience, would quickly become overwhelmed if we resorted to System 2 thinking and implemented a concurrent-option comparison decision-making process (think MDMP) every time we formed a judgment or made a decision such as what to eat for breakfast or whom to hire as a new office worker. Instead, we use heuristics, which are “rules of thumb” that guide thinking to solve difficult problems. They typically work by simplifying a problem and replacing a difficult question with an easier one, a concept known as attribute substitution.[23] Heuristics exist in the subconscious mind for a single reason – they have worked in the past. More importantly, they remain embedded in System 1 thinking until they no longer help us form judgments or make decisions. Much like skilled intuition, people use heuristics to identify the first conclusion or course of action that supports their cognitive framework (i.e., satisficing).[24]

Heuristics fall into three broad categories according to Kahneman:[25]

- Representative. This category of heuristics involves making judgments or decisions based on your stereotype or mental model of the person, situation, or activity.[26] For example, you are a battalion commander interviewing prospective company commanders. You expect these young officers to have character traits as well as personality traits similar to your own. You were very successful as a company commander and have experienced continued success in your career. You believe you are “what right looks like.” As a result, you have simplified the interview process by using yourself as the standard for assessing other officers.

- Anchoring and Adjustment. This category relies on a recent and readily available number or event anchored or embedded in one’s memory, which is immediately used as a guide for estimating another number or activity. For example, when projecting organizational budgets, instead of formulating detailed projections for spending, we typically start with the budget from the previous year and use that as the benchmark or anchor for the new budget year.[27]

- Availability. This category is associated with easily recallable memories such as dramatic events (e.g., plane crashes, mass shootings, terrorist attacks) or vivid personal experiences.[28] For example, the division commander requires every new lieutenant to spend two days shadowing him during their first month in the division. The general had a similar leader development experience as a young lieutenant that he fondly recalls and decided to continue the practice once he became a general officer despite the severe demands on his time.

In the years since Kahneman first published his work, academics have greatly expanded on these initial categories. While the growing research is impressive, it is more important to understand the pervasiveness of heuristics; they are part of everything we do, from our decisions on what to wear in the morning to our personal judgments during the MDMP. Consider another example, this one involving new parents shopping for their infant daughter. They agree their child deserves the best in life and decide to put this belief into practice by buying nothing but the highest quality items for her. They adopt a “take the best” heuristic[29] from the representative category and develop a “quality” cue based on highest price to identify the best items to purchase. Both parents share the mental model that cost and quality have a direct correlation. Using this cognitive shortcut is expedient for them and aligns with their personal beliefs. Does it ensure they are buying the highest quality? They believe so…until proven otherwise.

As you can see with these examples, heuristics expedite our thinking by making difficult problems more manageable and manageable problems second nature. As we alluded to earlier, however, heuristics have a darker side. Shortcuts in thinking can lead to very poor results if done in the absence of logic, probability, or rational thought.[30] Additionally, System 1 can exaggerate the effectiveness of these shortcuts with its overconfident nature, treating the quality of a heuristic intuition exactly the same as a skilled intuition.[31] This self-assurance can become a problem because System 2 cannot differentiate an expert or skilled intuition from a heuristic intuition.[32] It simply receives a supremely confident input from System 1, and typically endorses the recommendation with little afterthought. Consequently, there is much goodness in the use of heuristics, but much risk as well.

Given this short examination of skilled and heuristic System 1 thinking, one might logically ask, is one form of intuition better than the other? It is a fair question. We obviously would like the extensive experience base of the skilled intuitor with the uncanny ability to select the first workable solution (satisficing) and then validate it using System 2’s mental simulation process. We know experts excel in stable environments that allow them to confront problems and challenges repeatedly. As they learn, develop, and grow, they expand their experience base and associated expertise. Unfortunately, we face many problems without the luxury of expert intuition. What happens when we have limited experience? Does our brain immediately shift to System 2 thinking to address these issues? No, it does not. Using System 2 thinking is a deliberate decision. The brain’s automatic setting is to use intuitive thinking, whether we have a lot of experience (skilled intuition) or just a little (heuristic intuition). The point is your brain will always use intuition first unless you consciously make the decision to initiate a deliberate decision-making process. So, again, is one form of intuition better than the other? Logic and reasoning (System 2) tell us yes; we want to use skilled intuition, but our unconscious mind does not care. It will use whichever one is available.

Now that we have a better understanding of how judgment works, let’s return to the field sanitation kit story. How did I apply judgment to the situation? Though I would like to admit otherwise, I did not shift to a deliberate thinking process to optimize my solution. I clearly was operating in the realm of satisficing by looking for the first solution that would work. But my use of satisficing was done without an extensive experience base. I had a rudimentary understanding of hand receipts and inventories. I also knew the importance of properly maintaining equipment. What I did not know was anything about field sanitation. Given this background, my brain defaulted to the use of cognitive heuristics to help me simplify what was for me a vexing situation. My mental model for handling Army equipment, which was already drilled into my head as a junior officer, focused on the importance of property accountability. I was operating with the representative heuristic and success for me meant full accountability of the field sanitation kit. Therefore, I inventoried the kit and met my objective…and we flunked the inspection. My judgment obviously failed me.

Why Does Judgment Fail?

As we have discussed, we form our judgments or informed opinions using both intuitive (System 1) and deliberate (System 2) reasoning. We default to the intuitive side of our brain when we apply judgment but can consciously move to deliberate thinking when System 1 is surprised, stumped, or confused. Under either circumstance, our System 2 reasoning, acting as a cognitive watchdog, has the final say on the judgment process. We use our judgment to evaluate situations and draw conclusions, which form the basis for an informed opinion. When our intuition misfires and our deliberate reasoning goes into hiding, our judgment suffers, and the informed opinion we seek quickly transforms into uninformed conjecture. In other words, our judgment becomes “all jacked up.” How does this happen?

Expert Judgments. Let’s start with expert judgment. When are experts no longer experts? Consider the city firefighter facing his first forest fire or the special operator with extensive urban close combat experience sitting on a mountain top in Afghanistan for the first time. Some skills clearly translate to new environments, but many do not. When the firefighter and operator conduct mental simulations to validate their assessments, conclusions, or courses of action, do they recognize and acknowledge the changes in the operational environment or does overconfidence – the Jekyll and Hyde attribute of intuitive thinking – blind the deliberate reasoning of System 2? Klein and Kahneman, in a joint article, use the term “fractionated expertise” to describe the challenges of experts working outside their comfort zone.[33] Excessive confidence in one’s experience can jeopardize the effectiveness of skilled intuition by encouraging the user to ignore anomalies in the environment.

The issue of anomalies is important. Skilled intuitors look for cues that indicate patterns in the environment. Based on these patterns, experts anticipate future events. When these future events do not occur or the cues do not appear, experts consider this an anomaly – a violation of expectations.[34] As part of the mental simulation process, skilled operators question the anomalies and address discrepancies in behavior or actions. In the “Sixth Sense” story, the lieutenant was momentarily stumped by the anomaly of the fire not immediately responding to the spray, and elected to back out of the house to reassess the situation. A failure in judgment can occur when the individual decides to ignore the anomaly (“There isn’t time to take every field order apart”) or deems the differences in expectations as insignificant (“Our approach is close enough”) without actually reflecting on the situation.

A final pitfall associated with expert or skilled intuition involves the lack of situational awareness, a former doctrinal term that means knowing what is going on around you. Having situational awareness or SA is fundamental to conducting an assessment, the first step to forming a judgment or making a decision. Though we rarely obtain all the information we desire, experts at a minimum must be able to detect the critical cues needed to identify patterns and take action.[35] Imagine commanders who never leave their command post. How will they build situational awareness, develop situational understanding, and envision an operational approach? Again, the specter of System 1’s unrealistic confidence can raise its head and convince commanders they have adequate information to see the battlefield, apply judgment, and make decisions when they do not.

Heuristic Judgments. Unlike skilled intuition, which rests on an extensive experience base, heuristic intuition relies much more on what users believe than on what they have experienced, which could be limited. As a result, the mental shortcuts that define heuristics can generate cognitive biases, i.e., errors in reasoning, if practitioners are not wary.

How do these cognitive biases arise? Usually from a confluence of factors beginning with the personal beliefs and mental models of the individual. In our earlier example, the battalion commander decided to use his own behavior and personality traits as the benchmark for evaluating new company commanders. Is this an example of egocentric bias or good judgment based on readily available and thoroughly examined evaluation criteria? What about the division commander directing all new lieutenants in the division to shadow him for two days? Is this simply a rosy retrospection of his youth or will there be real benefits for the young officers?

A second factor, which we also referenced with expert intuition, is the power of System 1 thinking to generate a feeling of supreme confidence. As Kahneman states, “It is natural for System 1 to generate overconfident judgments, because confidence, as we have seen, is determined by the coherence of the best story you can tell from the evidence at hand.”[36] As a result, we use confidence as an evaluation criterion – higher is better – when there is little correlation between confidence and accuracy with intuitions that originate in heuristics.[37] The best way to evaluate the probable accuracy of a judgment, according to Kahneman and Klein, is by assessing the environment.[38] If the environment is sufficiently regular to be predictable (i.e., easily discernible cause and effect relationships), then the judgment has a good chance of being valid.[39] Conversely, if you are operating in a complex and uncertain environment, predictions are of little value because causal relationships are only discernible in retrospect.[40]

A third factor that can spawn cognitive biases in heuristic judgments is our natural tendency to jump to conclusions. When we begin to assess a situation using System 1 thinking, our brain gathers the available evidence and transforms the data into a logical and coherent story. System 1 is not concerned with the amount or the quality of the evidence. It believes all the evidence it observes is all the evidence that is available.[41] Its focus is on creating a seamless and believable story. Consider the battalion commander who is notified by his staff duty officer (SDO) late on a Friday night that two Soldiers from two different companies have been arrested for drunk and disorderly behavior. After a moment of reflection, he tells the SDO to initiate the alert roster. He wants a battalion formation in two hours. His intent is to stop the drunken behavior before it spreads throughout the organization. Is this good judgment or a precipitous jump to a conclusion?

Deliberate Judgments. System 2, despite its reputation as the panacea for errors in System 1 thinking, faces its own challenges when it comes to exercising logic and reasoning, the most significant being its unwillingness to get involved. System 1 induces complacency in System 2 thinking because of its confident nature, which provides the perfect breeding ground for the status quo bias. “Good enough” becomes the rallying cry for System 2 as it cruises along in endorsement mode of System 1. While this statement is somewhat facetious, it drives home the point that it is incredibly hard for System 2 to step in and make a correction to System 1 without a specific call for help. As Kahneman states in his book, “Constantly questioning our own thinking would be impossibly tedious, and System 2 is much too slow and inefficient to serve as a substitute for System 1 in making routine decisions.”[42] Luckily, System 1, when surprised or confused, does call for help. Once this occurs and System 2 begins the deliberate thinking process, it faces additional challenges.

Conducting a comparative analysis to find the best solution for a problem is not easy. It takes time and effort, whether we do it individually (think of Commander’s Visualization) or as part of a group. From a military perspective, deliberate planning typically is a joint effort requiring extensive preparation and research either in ad hoc working groups or as part of a collective decision-making process (again, think of MDMP). Additionally, System 2 is just as susceptible to cognitive biases as System 1. The great advantage of System 2 thinking, however, is the transparency of the process, especially when done collectively. Members can step forward and identify errors in thinking, which allows the group to correct its behavior. Of course, self-correction will not occur in the organization without a conducive climate for learning that allows followers to voice their concerns. Nor will it occur if the organization values unit cohesion and reduced anxiety over the need for innovation and change.[43] When this occurs, groupthink typically reigns and deliberate thinking becomes perfunctory in nature.

Now that we have reviewed why judgments fail from multiple perspectives, let’s return one last time to the story of the field sanitation kit. Why did my judgment fail? I think the answer is very simple. I lacked experience, though my heuristic intuition worked surprisingly well given my limited skills. The crux of the issue came down to my decision not to clean every component of the kit as if it were part of a china service setting at a battalion dining-in. I would not learn this sacrosanct lesson of maintenance (i.e., being clean enough to eat off of) until my time as a company commander. Why didn’t my System 2 thinking step in and save the day? It was because I did not think I needed the help. I thought I had everything under control. In other words, System 1, the confidence-making machine, was working just like it was supposed to.

While my field sanitation kit story might be an excellent vehicle for explaining the judgment process, it is somewhat dated. A more pressing example that seems to surface several times a year is the unethical behavior of senior officers, usually involving cases of sexual impropriety or travel card abuse, among other transgressions. Why do so many of the military’s senior leaders use such poor judgment? The answer we hear most often from their superiors is that these leaders lack character. Our leadership manual, ADRP 6-22, seems to endorse this conclusion with the following statement: “Character, a person’s moral and ethical qualities, helps a leader determine what is right and gives a leader motivation to do what is appropriate, regardless of the circumstances or consequences.”[44] While this is a nice clean-cut answer, we need to look deeper. Everyone has character flaws, which is why we are charged as leaders with the development of our subordinates’ character in the first place. If we assume no one is perfect, what other factors contribute to the poor judgment of these senior leaders?

One factor is the System 1 – System 2 relationship. When we are involved in a deliberate thinking process, we expend an incredible amount of mental energy. When System 2 is focused on cognitive effort, researchers have found that the task of self-control often falls by the wayside. Kahneman explains, “People who are cognitively busy are also more likely to make selfish choices, use sexist language, and make superficial judgments in social situations…Self-control requires thought and effort.”[45] With System 2 tied up, System 1 steps in, and self-control is not on its agenda. This loss of self-control, which is known as ego depletion,[46] does not excuse or justify the loathsome behavior of these senior leaders, but it does highlight the risk leaders face when operating under cognitively demanding conditions. When System 2 runs out of gas and System 1 is shoved to the forefront, mental shortcuts become unreliable and cognitive biases have a field day as miscreant leaders justify their behavior with arguments ranging from “no one will find out” (illusion of control bias) and “I have seen other senior leaders act this way” (confirmation bias) to “I am not hurting anyone” (framing bias). Clearly, there are better ways to address this phenomenon.

End Notes

[1] Army Doctrine Reference Publication (ADRP) 5-0, The Operations Process (Washington, DC: Government Printing Office [GPO], May 2012), para. 1-31.

[2] The word “judgment” has multiple uses to include an act (“demonstrating good judgment”), an opinion (“What is your judgment?”), and a discretionary ability (“a man of sound judgment”). As a result, we did a fair amount of “cherry picking” from various dictionaries to form a definition that best aligns with common military usage of the term.

[3] Keith J. Holyoak and Robert G. Morrison, “Thinking and Reasoning: A Reader’s Guide,” in The Cambridge Handbook of Thinking and Reasoning, ed. Keith J. Holyoak and Robert G. Morrison (Cambridge: Cambridge University Press, 2005), 2.

[4] Jonathan St. B. T. Evans and Keith E. Stanovich, “Dual-Process Theories of Higher Cognition: Advancing the Debate,” Perspectives on Psychological Science, (2013), 8(3), 223-41.

[5] Keith E. Stanovich and Richard F. West, “Individual Differences in Reasoning: Implications for the Rationality Debate?” Behavioral and Brain Sciences, (2000) 23:5, 645-727.

[6] Daniel Kahneman, Thinking, Fast and Slow (New York: Farrar, Straus and Giroux, 2011), 13.

[7] Daniel Kahneman, “A Perspective on Judgment and Choice: Mapping Bounded Rationality,” American Psychologist, September 2003, 697-720.

[8] Kahneman, Thinking, Fast and Slow, 239.

[9] Kahneman, Thinking, Fast and Slow, 25.

[10] Kahneman, Thinking, Fast and Slow, 24.

[11] Kahneman, “A Perspective on Judgment and Choice,” 699.

[12] Nasir Naqvi, Baba Shiv, and Antoine Bechara, “The Role of Emotions in Decision Making,” Current Directions in Psychological Science, (2006), Vol 15—No. 5, 260-64.

[13] Kahneman, Thinking, Fast and Slow, 24.

[14] Ibid., 24.

[15] Ibid., 192.

[16] Malcolm Gladwell, Outliers (New York: Little, Brown and Company, 2008), 35-68.

[17] K. Anders Ericsson, Michael J. Prietula, and Edward T. Cokely, “The Making of an Expert,” Harvard Business Review, July-August 2007, 115-21.

[18] Gary Klein, Sources of Power (Cambridge, MA: MIT Press, 1999), 20.

[19] Kahneman, Thinking, Fast and Slow, 237.

[20] Klein, Sources of Power, 89-109.

[21] Ibid., 32-35.

[22] Kahneman, Thinking, Fast and Slow, 98.

[23] Daniel Kahneman and Gary Klein, “Conditions for Intuitive Expertise: A Failure to Disagree,” American Psychologist, September 2009, 515-26.

[24] Gerd Gigerenzer, “Why Heuristics Work,” Perspectives on Psychological Science, Vol 3, No. 1, 2008, 20-29.

[25] Amos Tversky and Daniel Kahneman, “Judgment Under Uncertainty: Heuristics and Biases,” Science, Vol 185, 1974, 1124-31.

[26] Kahneman, Thinking, Fast and Slow, 149-153.

[27] Ibid., 119-128.

[28] Ibid., 137-145.

[29] Gigerenzer, “Why Heuristics Work,” 24.

[30] Daniel Kahneman, “A Perspective on Judgment and Choice,” 707.

[31] Kahneman, Thinking, Fast and Slow, 194.

[32] Daniel Kahneman and Gary Klein, “Conditions for Intuitive Expertise,” 522.

[33] Ibid., 522.

[34] Klein, Sources of Power, 148.

[35] Klein, Sources of Power, 274. Klein does not use the term situational awareness, but does discuss how the lack of information is a major factor in poor decisions.

[36] Kahneman, Thinking, Fast and Slow, 194.

[37] Ibid., 220.

[38] Daniel Kahneman and Gary Klein, “Conditions for Intuitive Expertise,” 522.

[39] Kahneman, Thinking, Fast and Slow, 240.

[40] David J. Snowden and Mary E. Boone, “A Leader’s Framework for Decision Making,” Harvard Business Review, November 2007, 69-76.

[41] Kahneman, Thinking, Fast and Slow, 86-88.

[42] Ibid., 28.

[43] Carey Walker and Matthew Bonnot, “Improving While Operating: The Paradox of Learning,” Army Press Online Journal, August 29, 2016, APOJ 16-35, http://armypress.dodlive.mil/2016/08/29/improving-while-operating-the-paradox-of-learning/.

[44] Army Doctrine Reference Publication (ADRP) 6-22, Army Leadership (Washington, DC: Government Printing Office [GPO], August 2012), para. 1-30.

[45] Kahneman, Thinking, Fast and Slow, 41.

[46] Ibid., 42.
