Shaping Perceptions: Processes, Advantages, and Limitations of Information Operations

Taylor A. Galanides

Introduction

[Analysts] construct their own version of ‘reality…’ This process may be visualized as perceiving the world through a lens or screen that channels and focuses and thereby may distort the images that are seen… To achieve the clearest possible image, analysts need more than information… They also need to understand the lenses through which this information passes.

-- Richards Heuer, Psychology of Intelligence Analysis[1]

The value of manipulation and influence as part of the greater effort of achieving specific military and non-military goals has long been appreciated. Information operations are but one aspect of national statecraft and seek to influence targeted audiences – like foreign leaders or civilian populations – to gain a competitive military or political edge. Partially rooted in fields like cognitive psychology, electronic and cyber warfare, and operations security, information operations are employed to shape the perceptions of specific targets to ultimately achieve a specific end, thereby influencing their decision-making and behavior.[2]

Recent and extensive developments in technology, media, communications, and culture – such as the advent of social media, 24-hour news coverage, and smart devices – allow populaces to closely monitor domestic and foreign affairs. This “ability to share information in near real time,” is an asset to the Nation and its military.[3] However, these advances have also facilitated the convergence of new vulnerabilities to individual and international security, as seen with the rise of computer hacking over the past decade, as well as with Russia’s attempts to influence the 2016 U.S. presidential election.[4] With more information available than ever before, coupled with an increase in the speed of interactions, intelligence analysts and decision-makers will have to form judgments and make decisions even more quickly. Cognitive biases, which are natural tendencies or mental shortcuts that the mind subconsciously uses to shorten the decision-making process, could be further exacerbated. These cognitive tendencies can then be leveraged by adversaries through the use of deception, thus influencing and shaping perceptions for the benefit of a political or military end. However, there are strategies and techniques that can be employed when analyzing information and intelligence, forming judgments, and making decisions, that can increase objectivity and decrease the effects of cognitive biases.

Control of mass communications and the manipulation of information have become key elements in the pursuit of influencing foreign and domestic audiences, as “people can only perceive from the data that they receive.”[5] This importance of perception-shaping capabilities will persist in the coming decades as technology increasingly advances, and it will be ever more imperative for the Nation and its military to prepare and defend against adversarial information operations in order to maintain geopolitical superiority. An examination of the evolution of Russia’s information campaign, grounded in the Soviet doctrine of maskirovka and reflexive control theory, as well as China’s recent focus on winning ‘informationized local wars,’ can aid in highlighting potential implications for the Nation and the Army.

Role of ‘Information’

In order to understand the processes of perception, decision-making, and information operations, comprehension of the information environment as well as the differences between ‘data,’ ‘information,’ and ‘intelligence,’ is necessary.

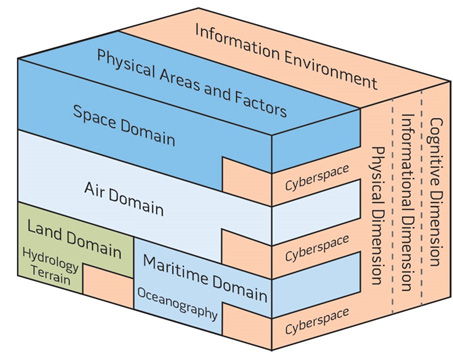

Figure 1. Illustration of the Operational Environment.[6]

To begin, the operational environment is the combination of the conditions, circumstances, and influences that affect and determine the use of military forces, which help a commander form decisions, and is partly composed of the information environment (Figure 1).[7] The information environment consists of everything that collects, processes, disseminates, or acts on information.[8] It consists of the physical dimension, which includes the tangible, such as human beings and technology like laptops; the informational dimension; and lastly, the cognitive dimension, which “encompasses the minds of those who transmit, receive, and respond to or act on information,” and “refers to individuals’ or groups’ information processing, perception, judgment, and decision-making.”[9] Joint Publication 3-13 further asserts that:

These elements [of the cognitive dimension] are influenced by many factors, to include individual and cultural beliefs, norms, vulnerabilities, motivations, emotions, experiences, morals, education, mental health, identities, and ideologies. Defining these influencing factors in a given environment is critical for understanding how to best influence the mind of the decision maker and create the desired effects. As such, this dimension constitutes the most important component of the information environment.[10]

Additionally, a differentiation of key terms is necessary to aid this discussion about the importance of information within the operational environment. Specifically, ‘data’ can be described as the raw and objective facts and observations drawn from the operational environment; when it is organized and processed, the data is thus put into a meaningful context by interpretation and can be called ‘information.’[12] When information is analyzed and compared against other information and past experiences to form conclusions and predictions, ‘intelligence’ is produced.[13] Specifically, “intelligence allows anticipation or prediction of future situations and circumstances, and it informs decisions by illuminating the differences in available courses of action.”[14] Accurate information, and thus accurate intelligence, are necessary in order to make accurate decisions.

Psychology and Information Operations

Because much of information operations and intelligence analysis are rooted in psychology, an examination of the processes of perception, neural information processing, and the formation of judgments through cognitive biases – and how these processes connect to deception – would prove useful in understanding how successful information operations work.

Structure of Perception

Dr. Barton Whaley, a pioneer in military deception strategy, created a typology for organizing the structure of perception. According to former CIA officer Richards Heuer in his groundbreaking Psychology of Intelligence Analysis, perception “is a process of inference in which people construct their own version of reality on the basis of information provided through the five senses.”[16] Through the process of perception, we organize, test, and interpret incoming information against a constructed network of associations and patterns.[17] If the new information somehow conforms to previously-made associations, then we usually accept it as accurate.[18] As such, human minds depend greatly upon previous assumptions and preconceptions.[19]

Alternatively, deception is a form of misperception, in that it is the deliberate manipulation of a perceived reality.[20] Deception can then be broken down into dissimulation, or “hiding the real,” and simulation, or “showing the fake.”[21]

Deception

The operation of deception can be defined as “the deliberate misrepresentation of reality done to gain a competitive advantage”[22] and is often intertwined with the operation of denial, or “the attempt to block information which could be used by an opponent to learn some truth.”[23] For example, accurate or truthful information must be obscured or ‘denied’ to the target, in order to deceive an adversary about one’s own intentions or objectives.[24] The products of deception and denial include disinformation, or the deliberate use of misleading information,[25] and propaganda, which includes any type of communication that spreads or reinforces specific beliefs for political purposes.[26] Deception can also be seen as “the process of influencing the enemy to make decisions disadvantageous to himself by supplying or denying information…” and inducing him “to accept a false or distorted presentation of their environment.”[27]

Perceptions of reality are important because they influence decisions and outcomes, a relationship often described in the field of sociology as the Thomas theorem: “‘If men define situations as real, they are real in their consequences.’”[28] Furthermore, the social construction of reality theory posits that as people and their corresponding groups interact in society, they create “a mental representation of each other’s actions” over time.[29] Comprehending the perspective through which adversaries and populations view the world, as well as understanding the decision-making shortcuts that those individuals are inclined to use, are both imperative for the successful manipulation of information and deception of those adversaries.

There are three primary objectives for the employment of deception. Immediately, deception seeks to condition a specific target’s beliefs; its second aim is to affect the target’s behavior; the final and overall aim is to benefit in some way from the target’s actions.[30]

How Deception Works: Cognitive Biases

Higher-order or executive cognitive functions are evolutionarily recent, and as a result, the human mind has limitations when completing logical or analytical tasks.[31] It does not cope well with ambiguity, uncertainty, risk, and the sometimes overwhelming complexity of the world.[32] To overcome these limitations, the mind has evolved in such a way that allows for large amounts of information to be processed through the use of mental shortcuts known as ‘mental models,’ ‘mind-sets,’ or ‘cognitive biases.’[33] Cognitive biases have been extensively researched, especially by Kahneman and Tversky,[34] and the term refers to the systematic pattern of deviations from the norm in decision making; that is, perceptions can be formed or drawn in an illogical or irrational manner because of cognitive biases.[35]

Cognitive biases are unconscious strategies or rules of thumb that allow people to make quick judgments when faced with new or overwhelming information.[36] However, a trade-off exists in this process: in exchange for the potentially quicker formation of conclusions and the effortless ability to process an almost infinite amount of data from the environment, these natural cognitive predispositions are imperfect, sometimes irrational, and can limit the accuracy of our judgments.[37] When humans make decisions, “they essentially make a choice among several alternatives.”[38] When decisions are based on false elements of a manipulated reality, and thus are based on inaccurate perceptions, alternative explanations are often ignored or not taken into account and deception can occur.[39] Psychologists have identified a large number of these decision-making tendencies which create vulnerabilities for decision-makers to be manipulated or deceived.

To begin, there are some common tendencies and cognitive biases that decision-makers and analysts often fall victim to, though the following list is not exhaustive. Humans tend to perceive what is expected, a notion known as confirmation bias.[40] As Heuer notes, “Events consistent with these expectations are perceived and processed easily, while events that contradict prevailing expectations tend to be ignored or distorted in perceptions.”[41] Further, biases tend to be quickly formed but are resistant to change.[42] People also have a tendency to assimilate or incorporate new data into their preconceptions.[43] The availability bias occurs when the brain attaches more weight or importance than is appropriate to information based on how easily it is retrieved or recalled.[44] Gaps in information are often filled through the use of assumptions or stereotypes, where a fixed representational image is applied to all new similar situations.[45] With mirror-imaging, gaps are filled with a person’s own knowledge by assuming that an adversary would likely act in a specific way because that is how the United States would respond.[46] Finally, with the anchoring bias, incorrect judgments made from ambiguous information tend to persist even after more accurate information is obtained because more information is required to reject a hypothesis than to form an initial impression.[47] Humans have no problem grasping new ideas, but it is inherently difficult to change such judgments or perceptions once established.[48] In most of these cases, alternative explanations or hypotheses are discounted.

With regards to deception, Heuer has highlighted that “it is far easier to lead a target astray by reinforcing the target’s existing beliefs, thus causing the target to ignore the contrary evidence of one’s true intent, than it is to persuade a target to change his or her mind.”[49] The cognitive dissonance theory provides a cogent explanation for why it is easier to reinforce already existing beliefs: “in order to avoid emotional conflict or dissonance, humans tend to seek information that confirms, and avoid information that refutes their beliefs.”[50] Statistically, deception is often successful despite alertness or awareness of the possibility of deception.[51] Deception is thus an important element of information operations, as Heuer aptly emphasizes that it provides competitive advantages “to the actor who seizes the initiative, rather than to the reactor who seeks to parry the initiatives of others.”[52]

As more information than ever before is available through technological advancements, decision-makers have access to an overwhelming amount of information that could promote the development of even more subconscious biases or shortcuts. Knowledge about the nature of perceptions and inherent limitations of the human mind has significant implications for the successful employment of information operations, as well as for the successful avoidance of adversarial efforts to influence and manipulate.

It is important to note that there are ways to minimize the influence of cognitive biases and the possibility of being deceived, which are addressed in Part VI. The Tradecraft Primer: Structured Analytic Techniques for Improving Intelligence Analysis, based on Heuer’s work and prepared by the U.S. government, is one such tool that provides effective methods for increasing objectivity and accuracy when making assessments.

U.S. Army Perspective of Information Operations

The U.S. Army views information operations as an instrumental part of the broader effort to maintain an operational advantage over adversaries. ‘Information operations’ are specifically defined by the U.S. Army as “…the integrated employment, during military operations, of information-related capabilities in concert with other lines of operation to influence, disrupt, corrupt, or usurp the decision-making of adversaries… while protecting our own,” and while targeting adversaries’ wills to fight.[53] Joint doctrine of the U.S. Armed Forces states that information operations may also be employed by the military during Phase 0 and Phase I of military operations by supporting “civil authorities, peace operations, noncombatant evacuation, foreign humanitarian assistance, and nation-building assistance,” all of which can “fall outside the realm of major combat operations represented by Phases II through V”.[54]

It is important to recognize that the Army specifies that it uses information operations during times of conflict or crisis, or against foreign populations. These restrictions on the use of information operations are typical for Western nations, as transparency and loyalty to their respective populations are particularly emphasized.

Additionally, with the convergence of a multitude of emerging technologies, such as those used for the collection and sharing of information, the character of warfare – or the expression of warfare or conflict – has arguably changed. Specifically, Chief of Staff of the U.S. Army, General Mark Milley, has written extensively about the absolute necessity for the United States to accurately predict the consequences of the changing character of warfare:

Technology, geopolitics, and demographics are rapidly changing societies, economies, and the tools of warfare… For example, the proliferation of effective long-range radars, air defense systems, long-range precision weapons, and electronic warfare and cyber capabilities enables adversary states to threaten our partners and allies. Conflict will place a premium on speed of recognition, decision, assembly, and action. Ambiguous actors, intense information wars, and cutting-edge technologies will further confuse situational understanding and blur the distinctions between war and peace, combatant and noncombatant, friend and foe – perhaps even humans and machines.[56]

Moreover, military operations and actions will often occur below the threshold of armed conflict in a competitive ‘gray zone,’ and warfare itself will be faster, cover greater distances, and potentially be more destructive.[57] These features partially comprise the notion of ‘ambiguous’ or ‘hybrid’ warfare, or ‘non-linear’ warfare in Russian military thinking: warfare is now best characterized as being ‘blurred,’ where the state of war between nations is ambiguous, or the distinction between combatants and non-combatants is similarly unclear.[58] As a result of these various changes, “The decision-making process will require much greater speed; information and intelligence will need to be quickly gathered and assessed so that commanders can make decisions at increasingly rapid rates.”[59] As stated previously, this increased speed of interactions could potentially intensify the cognitive tendencies that humans naturally possess, and measures will need to be taken in order to reduce the possibility of manipulation, misperception, and deception by adversaries.

Employing defensive and counter information operations to maintain information superiority – or “the ability to see the battlefield while your opponent cannot” – will be key.[60] The recent establishment of the U.S. Army’s Futures Command was a groundbreaking decision that will prove invaluable for assuring the modernization of the Army, as well as its ability to stay ahead of the changing character of warfare.

Foreign Information Operations

It is important that the United States and the Army continue to heed the threat that Russia poses, though China is projected to overtake the Nation’s current pacing threat of Russia by 2035.[61] For example, General Milley stated in a speech for the Association of the United States Army in 2016:

In Europe, we see a revanchist Russia who has modernized their military and pursued an aggressive foreign policy in Georgia, Crimea, Ukraine and elsewhere… The world economic center of gravity [is] moving from the North Atlantic to the North Pacific, [which is] resulting in a rapidly rising China as a great power, with a revisionist foreign policy, backed up with an increasingly capable military.[62]

Adversarial nations are taking advantage of advancements in technology in order to try to gain a competitive edge over the West and the United States. For example, Russia and China are in the process of modernizing their armed forces by developing “long-range, precision, smart, and unmanned weapons and equipment.”[63] Additionally, growing attention to the cognitive and information dimensions of warfare is evident in the advancement of the information operations of Russia and China. For example, U.S. Air Force General and former NATO Supreme Allied Commander Philip Breedlove has stressed that the most impressive part of Russia’s contemporary non-linear warfare is the informational aspect.[64] Breedlove further emphasized that “the Russians are able to exploit a conflict situation and turn it into a false perception” in order to “deceive their opponents as well as public opinion.”[65]

The power of information and the increasing emphasis that nations are placing on the information sphere can further be illustrated through Russia’s perspective of the ‘Arab Spring,’ as well as its opinion about the so-called ‘color revolutions.’ The revolutions occurred in the early 2000s in Georgia, Ukraine, and Kyrgyzstan, among others, and resulted in various regime and government changes. Igor Panarin of the Diplomatic Academy of the Ministry of Foreign Affairs, whose academic work helped lay the foundations for Russia’s information security doctrine, has stated that both the color revolutions and the Arab Spring “were product[s] of social control technology and information aggression from the United States.”[66] Specifically, the color revolutions “are viewed in Russia as ‘artificial processes plotted in the West aimed at destabilizing entire regions in the post-Soviet area,’ and as ‘socially the most dangerous form of encounter between intelligence services.’”[67]

Awareness of the perspectives through which the Nation’s adversaries view the world, as well as their specific information operation strategies, is necessary for effectively combatting their influence and manipulation attempts. For instance, it is important to note that both Russia and China view information operations through a broad confrontational lens in which the competitive struggle to control the flow of information is continuous, in peacetime as well as in war. Understanding the information operation strategies of Russia and China, as well as ways to exploit their vulnerabilities and defend against their influence and deception attempts, continues to be an important method for maintaining national and military strength.

Russian Federation

Complications in identifying and defining the specific elements of Russia’s contemporary military strategies arise from the outset due to the very manner in which concepts and strategies are defined, perceived, and interpreted by the United States and Russia. Different terms are used by each nation to describe Russia’s strategy, with Western analysts emphasizing the ‘hybrid’ or ‘new generation’ elements of Russian warfare.[68] Additionally, the “Russians have adapted Western theories, technologies, and solutions, adjusting them to their own concepts and their own ‘ideological and cultural system of concepts and terms.’”[69] Russia’s military has also been recognizing the trend towards the changing character of warfare in recent years. For example, Chief of the General Staff of the Armed Forces of Russia, General Valery Gerasimov, noted the blurring of the line between peace and conflict, as well as the increased use of nonmilitary methods, covert operations, information operations, and asymmetric methods;[70] he asserted that “… the principal tactic within this set of developments is noncontact or remote engagement, since information technology has greatly reduced the spatial and temporal distances between opponents.”[71]

Much of their information operations and deception warfare are based on the doctrines of maskirovka and reflexive control. The most recent iteration of their deception doctrine blends old military thoughts with new avenues with which to pursue their concepts, and also takes into account modern technological advancements.[72] Some Russian military thinkers further divide their overall information operation strategy into the categories of information-technical, which includes cyber and electronic warfare, and information-psychological, which focuses on “the battle of wills,”[73] or influencing and controlling targets through deceptive measures.[74] Additionally, Russian military strategists have explicitly specified that the nature of their information operations is defensive.[75] They have used this excuse to create uncertainty and foster credible deniability of their actions.[76]

Furthermore, the Russians define their ‘information operations’ in terms of an ‘information confrontation,’ where they perceive broader applications for information operations that encompass both peacetime and war, whereas the United States and its Army confine the operation to times of crisis or conflict.[77] Prominent military leaders, like General Gerasimov, have expressed that the nation seeks to pervasively integrate and coordinate deception, influence, and information operations throughout every level of its federal government and military, continuously and in peacetime and wartime alike, against both external and internal threats.[78] Current Russian military doctrine further asserts that “information war is merely a confrontation for damaging information systems, processes, and resources to undermine the political, economic, and social systems, as well as brainwashing the populace for destabilizing the opponent’s society and state.”[79] This increased focus on evolving their information operations is illustrated by retired General-Lieutenant S. A. Bogdanov and Reserve Colonel S. G. Chekinov’s assertion that “the first to see will be the first to start decisive actions.”[80]

Authoritarian regimes like Russia and China experience no moral or ethical reservations in employing information operations or deception to influence their own populations. In fact, it has been suggested that “the prize for Russia’s rulers is not the Baltic or Aleppo, but control of their own people,” acknowledgement of “the greatness of Russia – defined by its rejection of the West – and the exposure of Western weakness and decadence.”[81] For instance, the Russian government seeks to limit civil unrest and foster popular support by spreading propaganda that blames the West for the difficult state of affairs in Russia.[82] Through the use of manipulated narratives and outright lying, Russia:

… Helps shore up faith in the government, which sees an existential threat to its rule everywhere and thus continually searches for support… Unable to deliver economic growth, the Kremlin needs to cook up reasons to keep the population in a constant state of mobilization against external threats. The way the propagandists tell it, Russia is surrounded by enemies and can only be defended by Mr. Putin. The past is reshaped to fit this story.[83]

Understanding the Russians’ current use of both maskirovka and reflexive control as part of their broader information and deception strategies is necessary to combat such attempts to deceive, influence perceptions, and affect behavior.

Maskirovka

The Soviet-era doctrine of maskirovka is a form of deception, and specifically is the deliberate misleading of targets by creating and presenting a false reality to conceal the truth.[84] This concept was originally employed by the Soviets to take advantage of the element of surprise, but Russia’s contemporary deception doctrine now focuses on the promotion of doubt, chaos, plausible deniability, and controlling the reactions of adversaries in order to enable “Russian freedom of maneuver.”[85] Additionally, maskirovka consists of four forms: concealment, simulation, demonstrations, and disinformation. Concealment promotes the formation of false perceptions by hiding the truth from adversaries.[86] Simulations and imitations attempt to make artificial objects, positions, and activities appear real.[87] Demonstrations are overt or deliberate actions to disguise one’s true intentions.[88] Disinformation is the last form of maskirovka, which works through the dissemination of propaganda, manipulated information, and outright lies to mislead.[89]

Reflexive Control

Reflexive control seeks to interfere with the decision-making process.[90] Specifically, reflexive control involves providing specifically prepared information to a target that causes him to react in a predetermined way without recognizing that he is being manipulated or deceived.[91] It occurs when the target:

…Comes up with a decision based on the idea of a situation which he has formed. Such an idea is shaped above all by intelligence and other factors, which rest on a relatively stable set of concepts, knowledge, ideas, and finally, experience. This set is usually called the ‘filter,’ which helps a commander separate necessary from useless information, true data from false, and so on.[92]

Thus, the filter is comprised of the adversary’s preferred military strategies and methods, and the perspective through which they view the world, which includes their psychological profile and biases.[93] Timothy Thomas, a prolific analyst at the U.S. Foreign Military Studies Office, states, “The chief task of reflexive control is to locate the weak link of the filter and exploit it.”[94] The “moral, psychological…” and “personal characteristics” of analysts and decision-makers, as well as their “biographical data, habits, and psychological deficiencies” can then be exploited through the employment of deception.[95] In turn, the actor who best utilizes ‘reflex,’ or “the side best able to imitate the other side’s thoughts or predict its behavior, will have the best chances of winning,” because that actor effectively studied their adversary’s filter and exploited it for their own benefit.[96]

Currently, Russia uses maskirovka and reflexive control to influence and manipulate its adversaries’ “sensory awareness of the outside world;” in turn, their adversaries’ perceptions about Russia’s actions and intents are based on inaccurate cognitions from “the material world.”[97]

Georgia and Crimea

Russia’s invasions of Georgia and Crimea effectively serve as cautionary examples of the far-reaching effects of Russia’s evolving and adapting information operation campaign.

To begin, during their short conflict in 2008, Russia and Georgia strove to manipulate and control the flow of information to the international community.[98] The Russians successfully used cyberattacks in concert with military methods, and also spread propaganda about supposed Georgian atrocities and narratives about Russia’s ‘defensive’ actions.[99] However, Georgia was more successful in controlling the informational sphere by launching their own disinformation campaign that limited the dissemination of Russian news, reported Russian attacks on civilians, and effectively garnered the sympathy of the international community.[100]

As a direct result, Russia subsequently revised its information operation strategy, and effectively learned from its mistakes in the Russo-Georgian conflict by the time it acted in Crimea. Purported ‘unidentified’ armed men (Russian troops) occupied strategic buildings and locations in eastern Ukraine in February of 2014, followed by the formal annexation of Crimea by Russia in March.[101] The Russians sought to create a “hallucinating fog of war” in an attempt to alter the analytical judgments, perceptions, and resulting actions of its adversaries, particularly the international community.[102] Russia’s cyberespionage efforts, as compared with the Georgian conflict, were much more devastating, as telecommunication infrastructures, important Ukrainian websites, and cell phones of key officials were jammed or shut down prior to the Russian invasion.[103]

Furthermore, maskirovka and reflexive control were successfully employed during the Crimean campaign. For example, with the additional help of computer hackers, bots, trolls, and television broadcasts, the Russian government was able to create a manipulated version of reality that claimed Russian intervention in Crimea was not only necessary, but humanitarian, in order to protect Russian speakers.[104] Through the use of large demonstrations called ‘snap exercises,’ the Russians were able to mask military buildups along the border, as well as their political and military objectives.[105] Russia further disguised its intentions by claiming adherence to international law, while also claiming victimization from the West’s attempts to destabilize and subvert the nation.[106] Russian officials denied any involvement or military engagement in Crimea until after the annexation was complete.[107] By distorting the facts surrounding the situation and refraining from any declaration of war, Russia effectively infiltrated the international information domain and shaped the decision-making process of NATO countries to keep them out of the conflict;[108] NATO nations ultimately chose minimal intervention despite specific evidence of Russia’s deliberate intervention in order to keep the conflict de-escalated.[109] It was not until the end of 2015 that Putin admitted to invading Crimea;[110] while the United States acknowledges Russia’s incursions into Ukraine, it does not recognize the annexation of Crimea, and officials have recently stated that sanctions imposed on Russia will not be lifted until the peninsula is returned to Ukraine.[111]

To conclude, Russia’s information operation strategy has evolved to focus on sowing discord and influencing its adversaries through the use of maskirovka and reflexive control.[112] It could be argued that Russia achieved its objectives of expanding its sphere of influence while weakening Western influence and considerably affecting important geopolitical events.[113] At the same time, Russia kept its largest threats from intervening by promoting chaos and de-escalating kinetic conflict, which ultimately enabled a referendum in which its target voted to join the Federation.[114] Despite the West’s refusal to acknowledge the annexation of Crimea,[115] Russia has still achieved what it set out to do by maintaining military flexibility and adapting its strategies.[116] Ultimately, “…Russia’s new propaganda is not [only] about selling a particular worldview, it is about trying to distort information flows and fueling nervousness among [Western] audiences.”[117]

People’s Republic of China

The financial wealth, large population, and growing military might of the People’s Republic of China, together, have the potential to make the country the United States’ pacing threat as early as 2035.[118]

The Chinese Communist Party (CCP) has maintained its regime in large part because of the support of the Party’s armed forces, the People’s Liberation Army (PLA).[119] China’s primary national objectives include maintaining the rule of the CCP regime, defending the nation’s sovereignty and territory, and establishing a regional and national hegemony.[120] In order to achieve the military and geopolitical interests of the CCP, the Chinese recognized a need to modernize their armed forces after carefully observing the United States in the Balkans and during the Gulf War.[121] Chinese military thinking similarly underwent an evolution during the 1990s. Specifically, Chinese strategists recognized that the character of warfare was beginning to change,[122] and observed that “the pace and destructiveness of modern wars was such that even ‘local wars’ (or those not involving the mass mobilization of the nation and the economy) nonetheless could affect the entire country.”[123] They also recognized that conflict had become much more non-linear, intense, and constant.[124] After 1991, the PLA shifted its military strategy from expecting to “fight with massed air, land and sea forces in ‘local wars under modern conditions,’ to ‘local wars under modern, high-tech conditions,’ and is now focused on fighting and winning ‘informationized local wars.’”[125] Chinese military doctrine has also asserted that “informationized warfare will become the basic form of warfare in the 21st century.”[126]

China views its ‘informationized operations’ (xinxi hua zuozhan or xinxi hua zhanzheng),[127] which seek to comprehensively integrate information technology into the PLA in order to gain information dominance (zhi xinxi quan),[128] as part of its broader military strategy of information operations (xinxi zuozhan).[129] PLA writings reveal that these information operations include electronic warfare, computer network (cyber) warfare, and political warfare.[130] China’s political warfare operations include psychological warfare, public opinion (media) warfare, and legal warfare.[131] Analysis of PLA doctrine shows that “the PLA expects to weaken and paralyze an enemy’s decision-making and also to weaken and paralyze the political, economic, and military aspects of the enemy’s entire war potential.”[132]

Influenced by traditions such as Confucianism and Daoism, Chinese strategists have “sought to portray China as an essentially pacifist state that privileges the civil over the military,” while similarly claiming that their military strategies are defensive and non-expansionist.[133] Additionally, the country perceives that the West is attempting to encircle it, undermine its authority, and ultimately end Chinese socialism and the rule of the CCP.[134] The discrepancies between these Chinese beliefs and justifications and the reality of an expansionist, offense-oriented China with growing military capabilities are evident.[135] As with Russia, such seemingly bizarre claims promote confusion and doubt regarding the nation’s true intentions.

Perception Management and Stratagem

China’s use of deception is historically ingrained in their traditions and culture, and information operations and deception are used as pervasively and naturally as they are in Russia.[136] China’s efforts to influence domestic and foreign populations have been described by U.S. analysts with the Western term of ‘perception management,’ which is viewed as actions that provide or deny specific information to adversaries in order to influence their emotions, intentions, and analytical reasoning, with the ultimate goal of benefiting from the adversaries’ behavior.[137] The Chinese describe their influence methods in terms of ‘stratagem’ (mou lue), which are methods or strategies based on traditional military maxims.[138] “Suspect what is rarely seen, exercise often, and display the forces,” and “on many occasions, show the fake and then show the real, lead them to be unprepared,” are two examples of modern Chinese stratagem.[139]

Like Russia, China highly censors and controls the dissemination of information through the media to promote their national interests by influencing foreign and domestic populations to foster support. For example, the use of perception management for external purposes can be seen in the manner in which the Chinese have described their political objectives: before 2008, the Chinese described their goal of ascension to world power as a ‘peaceful rise,’ which was carefully changed to ‘peaceful development’ in order to promote a positive image of an unassuming and non-threatening China.[140] The PLA also engages in perception management through military posturing during demonstrations, such as when the Chinese fired ballistic missiles near the Taiwan Strait to intimidate, dissuade, and deter Taiwan’s moves towards democracy and independence in 1996.[141]

The CCP also continually influences its own population through the internal and highly-controlled use of propaganda. In an increasingly technological and information-rich world, the CCP has adapted its propaganda campaign in an effort to combat alternative information and ideas that counter the Party’s interests and doctrines. Furthermore, since the 1980s, the CCP has sought to consolidate and maintain its position in power by garnering popular support through the revitalization of the nation’s economy and through the use of persuasion.[142] The Chinese learned from the Cultural Revolution that “force is only a limited and short-term means of social control in a modern society; the most sustainable means of social control is persuasion.”[143] As a result, today, younger Chinese typically accept the whole propaganda campaign that the government pushes, whereas older citizens generally accept the basic policies of the regime but might hold views that may be different from the government’s.[144]

No matter the method, the Chinese are continually attempting to influence and deceive international and domestic perceptions about their objectives and intentions in order to achieve national interests.

Vulnerabilities and Suggestions

Both China and Russia pose serious threats to the United States and its global interests. They subversively attempt to influence the perceptions and decision-making of the Nation’s leaders as well as its civilian population by exploiting cognitive tendencies in pursuit of a competitive edge over the United States. The perspectives through which adversaries view and employ their information operation strategies in terms of psychology and ethics, and the way that the United States responds to its adversaries’ increasingly technological attempts to influence, are important points to consider when evaluating potential vulnerabilities of the information and national superiority of the United States. For example, the renewal of an effective interagency committee focused on identifying and countering adversarial disinformation and propaganda – similar to the Active Measures Working Group from the 1980s – might prove to be particularly useful in retaining a competitive edge against the United States’ adversaries.

Cognitive Biases

By taking advantage of common cognitive biases and specific perspectives, adversarial information operations can influence, manipulate, and deceive the American civilian population as well as its military personnel and decision-makers. The challenge in avoiding deception involves, on the one hand, balancing being too skeptical and thus not learning from experience or updating our judgments when circumstances change, and on the other, being too receptive or accepting of new information, and thus being heavily influenced by minor developments. Consequently, Heuer suggests:

As a general rule, we err more often on the side of being too wedded to our established views and thus too quick to reject information that does not fit these views, than on the side of being too quick to revise our beliefs. Thus, most of us would do well to be more open to evidence and ideas that are at variance with our preconceptions.[145]

Awareness of one’s cognitive biases is the first step towards more accurate conclusions and decisions. However, the crucial step for reducing the effects of our biases involves devoting time and attention to consciously exploring alternative explanations prior to acting or making decisions. Heuer’s Tradecraft Primer provides a comprehensive approach to the assessment and judgment-making process that “considers a range of alternative explanations and outcomes [and] offers one way to ensure that analysts do not dismiss potentially relevant hypotheses and supporting information…”[146] For instance, periodically employing a ‘key assumptions check,’ which systematically reviews any hypotheses that have been accepted to be true, can ensure that subsequent assessments are not based on faulty premises.[147] Had U.S. decision-makers and the military challenged their assumption during World War II, for example, that Japan would avoid a total war with the United States because of American military might, they potentially could have recognized that the Japanese viewed a preemptive first strike as the only way to strategically diminish America’s fighting potential.[148] Other strategies for reducing the effects of cognitive biases include building the best case for an alternative explanation of a strongly held belief through ‘devil’s advocacy,’ considering the ‘unthinkable’ in events with ‘high impact, but low probability,’ or looking at issues from an adversary’s perspective through ‘red team analysis.’[149]

By better understanding the diverse perceptual judgments that humans are inclined to make – like the tendency to often ignore solid but probabilistic information – analysts, military leaders, political leaders, and even the general population, can potentially compensate for, to some extent, these limitations of information processing.

Ethics

Unlike China and Russia, the United States and the Army have moral and ethical standards that restrict the use of information operations – usually rightfully so. However, as adversarial nations increasingly employ influence and deceptive measures while lacking such moral compunctions, the United States and its Army could benefit from re-evaluating their own information operation strategies and developing alternative methods for maintaining an operational advantage and informational superiority against their adversaries.

The recent attempts by Russia to influence the 2016 U.S. presidential election exemplify such a need for the United States and the Army to effectively counter foreign propaganda, disinformation, and deception efforts that seek to undermine the Nation. Retired U.S. Army Colonel Larry Wortzel suggests that “the United States also must think through how it intends to respond to the PLA’s cyber operations. Defensive measures are important, but, increasingly, Congress and American companies are discussing the potential for offensive cyber operations designed to disrupt the networks of attackers and of ‘honeypots,’ or traps designed to lure in a hacker and either allow the attackers to extract bad information or to attack the hacker’s systems.”[150] The national vulnerability to influence and deception because of the pervasive use of information operations by the Nation’s adversaries means that taking a more aggressive stance against foreign disinformation is necessary to effectively combat such attempts.

Disinformation and Media

Both Russia and China can take advantage of the media by “inserting fabricated or prejudicial information into Western analysis and blocking access to evidence.”[151] The West’s free press will continue to be the primary counter to constructed narratives. With regard to the technology industry and social media, Alina Polyakova, a Rubenstein Fellow for Foreign Policy at the Brookings Institution, and Geysha Gonzalez, associate director of the Eurasia Center at the Atlantic Council, have recommended stricter government regulations for tech companies like Twitter, Google, and Facebook, which are operating in a fairly unregulated environment.[152] These companies’ voluntary efforts to limit the spread of disinformation have been largely ineffective.[153] As such, they should be subject to the same Federal Communications Commission content and advertising restrictions as traditional media – which would undoubtedly reduce the spread of falsified information.[154]

Additionally, extensive training of U.S. military and government personnel, in conjunction with educating our civilian population about Russia’s and China’s deceitful narratives, may decrease the likelihood of perceptions being manipulated: “…If the Nation can teach the media to scrutinize the obvious, understand the military, and appreciate the nuances of deception, it may become less vulnerable to deception.”[155] Specifically, the U.S. Department of Education could re-evaluate its “civics education for the digital age” in order to teach our newest generations to be “critical consumers of information.”[156]

Finally, since many Russian and Chinese media outlets are state controlled, promotion of social media for the dissemination of non-state news and alternative perspectives can also aid in educating foreign civilian populations. Other ways to exploit Russian and Chinese vulnerabilities could include taking advantage of poor operations security, as well as using and analyzing geotags, which are electronic identification tags that assign geographical locations to items posted online, to refute and discredit Russian and Chinese propaganda narratives.[157] Encouraging technological research aimed at identifying deception and fabrication attempts might also prove useful.

Interagency Coordination of Information Operations

The employment of information operations to counter false information and influence adversaries is not a novel strategy in the United States. For example, from 1981 until its dissolution in 1992, the Active Measures Working Group (AMWG) was a U.S. interagency committee that sought to “identify and expose Soviet disinformation.”[158] The committee included personnel from the Departments of State, Defense, and Justice, as well as the CIA, FBI, Defense Intelligence Agency, Arms Control and Disarmament Agency, and the (now defunct) U.S. Information Agency.[159] A comprehensive review of the committee’s products concluded:

The group successfully established and executed US policy on responding to Soviet disinformation. It exposed some Soviet covert operations and raised the political cost of others by sensitizing foreign and domestic audiences to how they were being duped. The group’s work encouraged allies and made the Soviet Union pay a price for disinformation that reverberated all the way to the top of the Soviet political apparatus. It became the US Government’s body of expertise on disinformation and was highly regarded in both Congress and the executive branch.[160]

The AMWG was disbanded in 1992 not because Russia stopped employing influence campaigns, but because Western attention shifted away from the former Soviet Union following its collapse.[161] New threats captured the United States’ attention, like Saddam Hussein’s invasion of Kuwait and the ensuing Operations Desert Shield and Desert Storm, as well as the rise of al-Qaeda and acts of terror.[162] However, the members of the AMWG were aware of the threat the ‘new’ Russia continued to pose, warning that “‘many large fragments of the Soviet active measures apparatus continue to exist and function, for the most part now under Russian rather than Soviet sponsorship.’”[163]

Information operations and tools of influence like deception are currently employed throughout every level of the Russian and Chinese states, and the regimes also exert internal control over their civilian populations and private sectors. The coordination that both nations possess for the dissemination of disinformation to foreign and domestic audiences is pervasive and exhaustive. Thus, coordination of information operations and counter-propaganda efforts is likewise important between the U.S. government, the Army, and the rest of the branches of the military. The passing of the Countering Foreign Propaganda and Disinformation Act, part of the Fiscal Year 2017 National Defense Authorization Act (NDAA),[164] was an imperative step towards effectively countering foreign information and influence operations that seek to manipulate the United States and its decision-makers and undermine its national interests. The Global Engagement Center (GEC) was established in 2016 to counter ISIS propaganda, but the 2017 NDAA expanded the center’s mission to include countering disinformation from Russia, China, Iran, and North Korea.[165] Increased coordination between the State and Defense Departments, as well as with Homeland Security (DHS), the FBI, allies, and non-governmental organizations is also anticipated.[166] However, government hiring freezes and issues with funding have stymied productive results from the center.[167]

Additionally, Polyakova and Gonzalez assert that responsibility for alerting the public about disinformation threats should fall to the DHS and the Federal Emergency Management Agency.[168] The DHS has recently announced the creation of the National Risk Management Center (NRMC), which will play a vital cybersecurity role in evaluating disinformation threats and responding appropriately to such threats and attacks.[169] Hopefully the government will soon overcome the challenges that the GEC is facing, and also include coordination with the NRMC, DHS, and the military for the effective defense and countering of foreign propaganda that seeks to manipulate the American population and its decision-makers.

Conclusion

At the Capitol Hill National Security Forum on June 21, 2018, General Milley asserted “that the nature of war hasn’t changed since ancient times: Humans use force to try to impose their political will on opponents. That can involve a number of additional factors like luck, friction, and fog of war, but the nature itself doesn’t change.”[170] However, it has been recognized by many countries that the character of warfare is changing due to numerous developments in emerging technologies, like hypersonic technology, cyber technology, and artificial intelligence,[171] and because of the growing importance of information in politics and warfare.

Moreover, such advancements in technology will open new avenues for the collection, dissemination, and denial of information; the means and methods to shape and manipulate the perceptions of populations will likewise progress in the coming decades. More information, coupled with alternative methods for spreading information, together have the potential for challenging the decision-making process even further by exacerbating cognitive biases that humans naturally possess.

Bibliography

Abrams, Steve. “Beyond Propaganda: Soviet Active Measures in Putin’s Russia.” Connections. 15, no. 1 (2016): 5-31, http://www.jstor.org/stable/26326426.

Anderson, Eric and Jeffrey Engstrom. China’s Use of Perception Management and Strategic Deception. Report prepared for U.S.-China Economic and Security Review Commission by the East Asia Studies Center of Science Applications International Corporation, 2009, https://www.uscc.gov/Research/china%E2%80%99s-use-perception-management-and-strategic-deception.

Berkebile, Richard E. “Thoughts on Force Protection.” Joint Force Quarterly. 2nd Quarter, no. 81 (2016): 140-148, http://ndupress.ndu.edu/Portals/68/Documents/jfq/jfq-81/jfq-81_140-148_Berkebile.pdf.

Bouwmeester, Han. “Lo and Behold: Let the Truth Be Told—Russian Deception Warfare in Crimea and Ukraine and the Return of ‘Maskirovka’ and ‘Reflexive Control Theory.’” In Netherlands Annual Review of Military Studies: Winning Without Killing: The Strategic and Operational Utility of Non-Kinetic Capabilities in Crises, edited by Paul Ducheine and Frans Osinga, 125-153. The Hague, Netherlands: Asser Press, 2017.

Brady, Anne-Marie and Wang Juntao. “China’s Strengthened New Order and the Role of Propaganda.” Journal of Contemporary China. 18, no. 62 (2009): 767-788, doi: 10.1080/10670560903172832.

Cheng, Dean. “PLA Views on Informationized Warfare, Information Warfare and Information Operations.” In Chinese Cybersecurity and Defense, edited by Daniel Ventre, 55-80. Hoboken, NJ: John Wiley & Sons, 2014.

Cheng, Dean. “The Chinese People’s Liberation Army and Special Operations.” Special Operations. 25, no. 3 (2012): 24-27, http://www.soc.mil/swcs/SWmag/archive/SW2503/SW2503TheChinesePeoplesLiberationArmy.html.

Daniel, Donald, Katherine Herbig, William Reese, Richards Heuer, and Theodore Sarbin. Multidisciplinary Perspectives on Military Deception. Monterey, California: Naval Postgraduate School, 1980, http://www.dtic.mil/dtic/tr/fulltext/u2/a086194.pdf.

Darczewska, Jolanta. The Anatomy of Russian Information Warfare: The Crimean Operation, a Case Study. Warsaw, Poland: Centre for Eastern Studies, 2014, https://www.osw.waw.pl/sites/default/files/the_anatomy_of_russian_information_warfare.pdf.

Darczewska, Jolanta. The Devil Is in the Details: Information Warfare in the Light of Russia’s Military Doctrine. Warsaw, Poland: Centre for Eastern Studies, 2015, https://www.osw.waw.pl/en/publikacje/point-view/2015-05-19/devil-details-information-warfare-light-russias-military-doctrine.

Dorell, Oren. “State Department's Answer to Russian Meddling is about to be Funded.” USA Today. Last modified February 13, 2018. https://www.usatoday.com/story/news/world/2018/02/13/state-department-answer-russian-meddling-funded/322992002/.

Fravel, M. Taylor. “China’s New Military Strategy: ‘Winning Informationized Local Wars.’” China Brief. 15, no. 13 (2015): 3-7, https://jamestown.org/program/chinas-new-military-strategy-winning-informationized-local-wars/.

Galindo, Gabriela. “White House: US Does Not Recognize Russia’s Claim on Crimea.” Politico. Last modified July 3, 2018. https://www.politico.eu/article/russia-claim-on-crimea-united-states-does-not-recognize/.

Godson, Roy. “Strategic Denial and Deception.” International Journal of Intelligence and Counter Intelligence. 13, no. 4 (2000): 424-437, https://calhoun.nps.edu/bitstream/handle/10945/43266/Wirtz_Strategic_Denial_and_Deception2013-11-20.pdf;sequence=4.

Grant, Thomas D. “Annexation of Crimea.” American Journal of International Law. 109, no. 1 (2015): 70-71, doi: 10.5305/amerjintelaw.109.1.0068.

Hall, Holly Kathleen. “The New Voice of America: Countering Foreign Propaganda and Disinformation Act.” First Amendment Studies. 51, no. 2 (2017): 49-61, doi: 10.1080/21689725.2017.1349618.

Harris, Gardiner. “State Dept. Was Granted $120 Million to Fight Russian Meddling. It Has Spent $0.” New York Times. Last modified March 4, 2018. https://www.nytimes.com/2018/03/04/world/europe/state-department-russia-global-engagement-center.html.

Heuer, Richards. “Tradecraft Primer: Structured Analytic Techniques for Improving Intelligence Analysis.” Prepared by the U.S. government, 2009, https://www.cia.gov/library/center-for-the-study-of-intelligence/csi-publications/books-and-monographs/Tradecraft%20Primer-apr09.pdf.

Heuer, Richards. The Psychology of Intelligence Analysis. Washington, D.C.: Center for the Study of Intelligence, 1999, https://www.cia.gov/library/center-for-the-study-of-intelligence/csi-publications/books-and-monographs/psychology-of-intelligence-analysis.

Hilbert, Martin. “Toward a Synthesis of Cognitive Biases: How Noisy Information Processing Can Bias Human Decision Making.” Psychological Bulletin. 138, no. 2 (2012): 211-237, doi:10.1037/a0025940.

Hutchinson, William. “Information Warfare and Deception.” Informing Science: The International Journal of an Emerging Transdiscipline. No. 9 (2006): 213-223, doi:10.28945/480.

Iasiello, Emilio. “Russia’s Improved Information Operations: From Georgia to Crimea.” Parameters. 47, no. 2 (2017): 51-63, http://ssi.armywarcollege.edu/pubs/Parameters/issues/Summer_2017/8_Iasiello_RussiasImprovedInformationOperations.pdf.

Inkster, Nigel. “Military Cyber Capabilities.” Adelphi Series. 55, no. 456 (2015): 83-108, doi: 10.1080/19445571.2015.1181444.

Johnson, Mark and Jessica Meyeraan. Military Deception: Hiding the Real – Showing the Fake. Norfolk, VA: Joint and Combined Warfighting School, 2003, http://www.au.af.mil/au/awc/awcgate/ndu/deception.pdf.

Kasapoglu, Can. “Russia’s Renewed Military Thinking: Non-Linear Warfare and Reflexive Control.” NATO Defense College. November 2015, no. 121 (2015): 1-12, http://www.jstor.org/stable/resrep10269.

Macdonald, Scot. Propaganda and Information Warfare in the Twenty-First Century. New York, NY: Routledge, 2007.

Maier, Morgan. A Little Masquerade: Russia’s Evolving Employment of Maskirovka. Fort Leavenworth, Kansas: School of Advanced Military Studies, 2016, https://archive.org/details/LittleMasquerade.

Milley, Mark. “Changing Nature of War Won't Change Our Purpose,” U.S. Army. Last modified October 4, 2016. https://www.army.mil/article/175469/changing_nature_of_war_wont_change_our_purpose.

Minton, Natalie. “Cognitive Biases and Reflexive Control.” University of Mississippi, 2017, http://thesis.honors.olemiss.edu/869/1/SMBCH%20Final%20Thesis.pdf.

Nash, A.J. “The Differences Between Data, Information, and Intelligence.” United States Cybersecurity Magazine. 5, no. 15 (2017), https://www.uscybersecurity.net/csmag/the-differences-between-data-information-and-intelligence/.

Nichiporuk, Brian. “U.S. Military Opportunities: Information-Warfare Concepts of Operation.” In Strategic Appraisal: The Changing Role of Information in Warfare, edited by Zalmay Khalilzad and John White, 179-215. Santa Monica, CA: RAND Corporation, 1999, https://www.rand.org/content/dam/rand/pubs/monograph_reports/MR1016/MR1016.chap7.pdf.

Nye, Joseph S. “China's Soft Power Deficit.” Wall Street Journal. Last modified May 8, 2012. https://www.wsj.com/articles/SB10001424052702304451104577389923098678842.

Polyakova, Alina and Geysha Gonzalez. “The U.S. Anti-Smoking Campaign Is a Great Model for Fighting Disinformation.” Washington Post. Last modified August 4, 2018. https://www.washingtonpost.com/news/democracy-post/wp/2018/08/04/the-u-s-anti-smoking-campaign-is-a-great-model-for-fighting-disinformation/?utm_term=.f5b5bfdcd092.

Santos, Laurie R. and Alexandra G. Rosati. “The Evolutionary Roots of Human Decision Making.” Annual Review of Psychology. 66, (2015): 321-347, doi:10.1146/annurev-psych-010814-015310.

Seely, Robert. “Defining Contemporary Russian Warfare.” Royal United Services Institute Journal. 162, no. 1 (2017): 50-59, doi:10.1080/03071847.2017.1301634.

Snegovaya, Maria. Putin’s Information Warfare in Ukraine: Soviet Origins of Russia’s Hybrid Warfare (Russia Report 1). Washington, D.C.: Institute for the Study of War, 2015, http://www.understandingwar.org/sites/default/files/Russian%20Report%201%20Putin%27s%20Information%20Warfare%20in%20Ukraine-%20Soviet%20Origins%20of%20Russias%20Hybrid%20Warfare.pdf.

Spade, Jayson M. Information as Power: China’s Cyber Power and America’s National Security. Carlisle Barracks, Pennsylvania: U.S. Army War College, 2012, https://www.hsdl.org/?view&did=719179.

Thomas, Timothy. Dialectical versus Empirical Thinking: Ten Key Elements of the Russian Understanding of Information Operations. No. 98-21. Fort Leavenworth, Kansas: Foreign Military Studies Office, 1998, http://www.dtic.mil/dtic/tr/fulltext/u2/a434981.pdf.

—. Kremlin Control: Russia’s Political and Military Reality. Fort Leavenworth, Kansas: Foreign Military Studies Office, 2017, https://community.apan.org/wg/tradoc-g2/fmso/m/fmso-books/197266.

—. “Russia’s Military Strategy and Ukraine: Indirect, Asymmetric – and Putin-led.” Journal of Slavic Military Studies. 28, no. 3 (2015): 445-461, doi:10.1080/13518046.2015.1061819.

—. “Russia’s Reflexive Control Theory and the Military.” Journal of Slavic Military Studies. 17, no. 2 (2004): 237-256, doi: 10.1080/13518040490450529.

—. “Russia’s 21st Century Information War: Working to Undermine and Destabilize Populations.” Defense Strategic Communications. 1, no. 1 (2015): 11-26, https://community.apan.org/wg/tradoc-g2/fmso/m/fmso-monographs/195076.

—. “The Evolving Nature of Russia’s Way of War.” Military Review. July-August (2017): 34-42, http://www.armyupress.army.mil/Journals/Military-Review/English-Edition-Archives/July-August-2017/Thomas-Russias-Way-of-War/.

Thornton, David. “Army Trying to Keep Up with ‘Changing Character of War.’” Federal News Radio. Last modified June 25, 2018. https://federalnewsradio.com/army/2018/06/army-tries-to-keep-up-with-changing-character-of-war/.

Thornton, Rod. “The Changing Nature of Modern Warfare: Responding to Russian Information Warfare.” Royal United Services Institute Journal. 160, no. 4 (2015): 40-48, doi: 10.1080/03071847.2015.1079047.

Tversky, Amos and Daniel Kahneman. “Judgment under Uncertainty: Heuristics and Biases.” Science. 185, no. 4157 (1974), https://www.jstor.org/stable/1738360.

U.S. Department of Defense. Information Operations. Joint Publication 3-13. 2014, http://www.jcs.mil/Portals/36/Documents/Doctrine/pubs/jp3_13.pdf.

U.S. Department of Defense. Joint Intelligence. Joint Publication 2-0. 2013, http://www.jcs.mil/Portals/36/Documents/Doctrine/pubs/jp2_0.pdf.

U.S. Department of the Army. Information Operations. FM 3-13. Washington, D.C: Army Publishing Directorate, 2016, https://armypubs.army.mil/epubs/DR_pubs/DR_a/pdf/web/FM%203-13%20FINAL%20WEB.pdf.

U.S. Department of the Army. The Operational Environment and Changing Character of Future Warfare. Fort Eustis, Virginia: TRADOC G-2, 2017, https://community.apan.org/wg/tradoc-g2/mad-scientist/m/visualizing-multi-domain-battle-2030-2050/200203.

U.S. National Intelligence Council. Assessing Russian Activities and Intentions in Recent US Elections. ICA 2017-01D. 2017, https://www.dni.gov/files/documents/ICA_2017_01.pdf.

Walker, Shaun. “Putin Admits Russian Military Presence in Ukraine for First Time.” The Guardian. Last modified December 17, 2015. https://www.theguardian.com/world/2015/dec/17/vladimir-putin-admits-russian-military-presence-ukraine.

Watts, Barry D. Countering Enemy Informationized Operations in Peace and War. Washington, DC: Center for Strategic and Budgetary Assessments, 2014, http://www.esd.whs.mil/Portals/54/Documents/FOID/Reading%20Room/Other/Litigation%20Release%20%20Countering%20Enemy%20Informationized%20Operations%20in%20Peace%20and%20War.pdf.

West Point Society of Washington and Puget Sound. “General Mark A. Milley: AUSA Eisenhower Luncheon.” November 2, 2016, http://wpswps.org/wp-content/uploads/2016/11/20161004_CSA_AUSA_Eisenhower_Transcripts.pdf (accessed June 22, 2018).

Wortzel, Larry. The Chinese People’s Liberation Army and Information Warfare. Carlisle, PA: U.S. Army War College Strategic Studies Institute, 2014, https://ssi.armywarcollege.edu/pubs/display.cfm?pubID=1191.

[1] Richards Heuer, The Psychology of Intelligence Analysis, (Washington, D.C.: Center for the Study of Intelligence, 1999), xxi-xxii, https://www.cia.gov/library/center-for-the-study-of-intelligence/csi-publications/books-and-monographs/psychology-of-intelligence-analysis.

[2] U.S. Department of Defense, Information Operations, Joint Publication 3-13, 2014, I-5, http://www.jcs.mil/Portals/36/Documents/Doctrine/pubs/jp3_13.pdf.

[3] U.S. Department of Defense, Information Operations, I-1.

[4] U.S. National Intelligence Council, Assessing Russian Activities and Intentions in Recent US Elections, ICA 2017-01D, 2017, 1-2, https://www.dni.gov/files/documents/ICA_2017_01.pdf.

[5] William Hutchinson, “Information Warfare and Deception,” Informing Science: The International Journal of an Emerging Transdiscipline, no. 9 (2006), 216, doi:10.28945/480.

[6] Richard E. Berkebile, “Thoughts on Force Protection,” Joint Force Quarterly, 2nd Quarter, no. 81 (2016), 143, http://ndupress.ndu.edu/Portals/68/Documents/jfq/jfq-81/jfq-81_140-148_Berkebile.pdf.

[7] U.S. Department of Defense, Information Operations, I-1.

[8] Ibid.

[9] Ibid., I-2 – I-3.

[10] Ibid.

[11] U.S. Department of Defense, Joint Intelligence, Joint Publication 2-0, 2013, I-2, http://www.jcs.mil/Portals/36/Documents/Doctrine/pubs/jp2_0.pdf.

[12] A.J. Nash, “The Differences Between Data, Information, and Intelligence,” United States Cybersecurity Magazine, 5, no. 15 (2017), https://www.uscybersecurity.net/csmag/the-differences-between-data-information-and-intelligence/.

[13] A.J. Nash, “The Differences Between Data, Information, and Intelligence.”

[14] U.S. Department of Defense, Joint Intelligence, I-2.

[15] Han Bouwmeester, “Lo and Behold: Let the Truth Be Told—Russian Deception Warfare in Crimea and Ukraine and the Return of ‘Maskirovka’ and ‘Reflexive Control Theory,’” in Netherlands Annual Review of Military Studies: Winning Without Killing: The Strategic and Operational Utility of Non-Kinetic Capabilities in Crises, ed. Paul Ducheine and Frans Osinga (The Hague, Netherlands: Asser Press, 2017), 132.

[16] Richards Heuer, The Psychology of Intelligence Analysis, 7.

[17] Han Bouwmeester, “Lo and Behold,” 133.

[18] Ibid., 133-134.

[19] Ibid.

[20] Han Bouwmeester, “Lo and Behold,” 132.

[21] Mark Johnson and Jessica Meyeraan, Military Deception: Hiding the Real – Showing the Fake, (Norfolk, VA: Joint and Combined Warfighting School, 2003), 4, http://www.au.af.mil/au/awc/awcgate/ndu/deception.pdf.

[22] Donald Daniel et al., Multidisciplinary Perspectives on Military Deception, (Monterey, California: Naval Postgraduate School, 1980), 5, http://www.dtic.mil/dtic/tr/fulltext/u2/a086194.pdf.

[23] Roy Godson, “Strategic Denial and Deception,” International Journal of Intelligence and Counter Intelligence, 13, no. 4 (2000), 425, https://calhoun.nps.edu/bitstream/handle/10945/43266/Wirtz_Strategic_Denial_and_Deception2013-11-20.pdf;sequence=4.

[24] Roy Godson, “Strategic Denial and Deception,” 425.

[25] William Hutchinson, “Information Warfare and Deception,” Informing Science: The International Journal of an Emerging Transdiscipline, no. 9 (2006), 215, doi:10.28945/480.

[26] Scot Macdonald, Propaganda and Information Warfare in the Twenty-First Century, (New York, NY: Routledge, 2007), 32.

[27] Han Bouwmeester, “Lo and Behold,” 130.

[28] Ibid.

[29] Ibid.

[30] Ibid., 130-131.

[31] Han Bouwmeester, “Lo and Behold,” 134.

[32] Ibid.

[33] Richards Heuer, The Psychology of Intelligence Analysis, 4.

[34] Amos Tversky and Daniel Kahneman, “Judgment under Uncertainty: Heuristics and Biases,” Science, 185, no. 4157 (1974), https://www.jstor.org/stable/1738360.

[35] Laurie R. Santos and Alexandra G. Rosati, “The Evolutionary Roots of Human Decision Making,” Annual Review of Psychology, 66, (2015), 322, doi:10.1146/annurev-psych-010814-015310.

[36] Richards Heuer, The Psychology of Intelligence Analysis, 9-10.

[37] Ibid., 5.

[38] Martin Hilbert, “Toward a Synthesis of Cognitive Biases: How Noisy Information Processing Can Bias Human Decision Making,” Psychological Bulletin, 138, no. 2 (2012), 211, doi:10.1037/a0025940.

[39] Han Bouwmeester, “Lo and Behold,” 134.

[40] Richards Heuer, The Psychology of Intelligence Analysis, 8.

[41] Ibid., 9.

[42] Ibid., 10.

[43] Ibid., 11.

[44] Donald Daniel et al., Multidisciplinary Perspectives on Military Deception, 64.

[45] Natalie Minton, “Cognitive Biases and Reflexive Control,” (University of Mississippi, 2017), 24, http://thesis.honors.olemiss.edu/869/1/SMBCH%20Final%20Thesis.pdf.

[46] Richards Heuer, The Psychology of Intelligence Analysis, 70.

[47] Ibid., 65-66.

[48] Ibid., 14.

[49] Donald Daniel et al., Multidisciplinary Perspectives on Military Deception, 60.

[50] Scot Macdonald, Propaganda and Information Warfare in the Twenty-First Century, 86.

[51] Donald Daniel et al., Multidisciplinary Perspectives on Military Deception, 88.

[52] Ibid.

[53] U.S. Department of the Army, Information Operations, FM 3-13, (Washington, D.C.: Army Publishing Directorate, 2016), 1-3, https://armypubs.army.mil/epubs/DR_pubs/DR_a/pdf/web/FM%203-13%20FINAL%20WEB.pdf.

[54] U.S. Department of Defense, Information Operations, II-1.

[55] Ibid., II-2.

[56] Mark Milley, “Changing Nature of War Won't Change Our Purpose,” U.S. Army, last modified October 4, 2016, https://www.army.mil/article/175469/changing_nature_of_war_wont_change_our_purpose.

[57] U.S. Department of the Army, The Operational Environment and Changing Character of Future Warfare, (Fort Eustis, Virginia: TRADOC G-2, 2017), 4-6, https://community.apan.org/wg/tradoc-g2/mad-scientist/m/visualizing-multi-domain-battle-2030-2050/200203.

[58] Rod Thornton, “The Changing Nature of Modern Warfare: Responding to Russian Information Warfare,” Royal United Services Institute Journal, 160, no. 4 (2015), 41, doi: 10.1080/03071847.2015.1079047.

[59] U.S. Department of the Army, The Operational Environment and Changing Character of Future Warfare, 14.

[60] Brian Nichiporuk, “U.S. Military Opportunities: Information-Warfare Concepts of Operation,” in Strategic Appraisal: The Changing Role of Information in Warfare, ed. Zalmay Khalilzad and John White (Santa Monica, CA: RAND Corporation, 1999), 181, https://www.rand.org/content/dam/rand/pubs/monograph_reports/MR1016/MR1016.chap7.pdf.

[61] U.S. Department of the Army, The Operational Environment and Changing Character of Future Warfare, 9.

[62] West Point Society of Washington and Puget Sound, “General Mark A. Milley: AUSA Eisenhower Luncheon,” November 2, 2016, 11, http://wpswps.org/wp-content/uploads/2016/11/20161004_CSA_AUSA_Eisenhower_Transcripts.pdf (accessed June 22, 2018).

[63] M. Taylor Fravel, “China’s New Military Strategy: ‘Winning Informationized Local Wars,’” China Brief, 15, no. 13 (2015), 4, https://jamestown.org/program/chinas-new-military-strategy-winning-informationized-local-wars/.

[64] Han Bouwmeester, “Lo and Behold,” 127.

[65] Ibid.

[66] Jolanta Darczewska, The Anatomy of Russian Information Warfare: The Crimean Operation, a Case Study, (Warsaw, Poland: Centre for Eastern Studies, 2014), 15, https://www.osw.waw.pl/sites/default/files/the_anatomy_of_russian_information_warfare.pdf.

[67] Jolanta Darczewska, The Anatomy of Russian Information Warfare, 17.

[68] Timothy Thomas, “Russia’s Military Strategy and Ukraine: Indirect, Asymmetric – and Putin-led,” Journal of Slavic Military Studies, 28, no. 3 (2015), 454, doi: 10.1080/13518046.2015.1061819.

[69] Jolanta Darczewska, The Devil Is in the Details: Information Warfare in the Light of Russia’s Military Doctrine, (Warsaw, Poland: Centre for Eastern Studies, 2015), 33, https://www.osw.waw.pl/en/publikacje/point-view/2015-05-19/devil-details-information-warfare-light-russias-military-doctrine.

[70] Timothy Thomas, “The Evolving Nature of Russia’s Way of War,” Military Review, July-August (2017), 36, http://www.armyupress.army.mil/Journals/Military-Review/English-Edition-Archives/July-August-2017/Thomas-Russias-Way-of-War/.

[71] Timothy Thomas, “The Evolving Nature of Russia’s Way of War,” 36.

[72] Jolanta Darczewska, The Anatomy of Russian Information Warfare, 34.

[73] Han Bouwmeester, “Lo and Behold,” 137.

[74] Timothy Thomas, Dialectical versus Empirical Thinking: Ten Key Elements of the Russian Understanding of Information Operations, No. 98-21, (Fort Leavenworth, Kansas: Foreign Military Studies Office, 1998), 3, 9-10, http://www.dtic.mil/dtic/tr/fulltext/u2/a434981.pdf.

[75] Jolanta Darczewska, The Anatomy of Russian Information Warfare, 12.

[76] Jolanta Darczewska, The Devil Is in the Details, 7, 16.

[77] Timothy Thomas, Dialectical versus Empirical Thinking, 6, 8-9.

[78] Jolanta Darczewska, The Devil Is in the Details, 7, 10, 16-22.

[79] Han Bouwmeester, “Lo and Behold,” 137.

[80] Timothy Thomas, “The Evolving Nature of Russia’s Way of War,” 38.

[81] Robert Seely, “Defining Contemporary Russian Warfare,” Royal United Services Institute Journal, 162, no. 1 (2017), 56, doi:10.1080/03071847.2017.1301634.

[82] Jolanta Darczewska, The Devil Is in the Details, 22-25.

[83] Timothy Thomas, Kremlin Control: Russia’s Political and Military Reality, (Fort Leavenworth, Kansas: Foreign Military Studies Office, 2017), 49, https://community.apan.org/wg/tradoc-g2/fmso/m/fmso-books/197266.

[84] Morgan Maier, A Little Masquerade, 1, 6.

[85] Ibid., 2-4, 6-8.

[86] Ibid., 10-13.

[87] Ibid.

[88] Ibid.

[89] Ibid.

[90] Ibid., 8.

[91] Han Bouwmeester, “Lo and Behold,” 140.

[92] Timothy Thomas, “Russia’s Reflexive Control Theory and the Military,” Journal of Slavic Military Studies, 17, no. 2 (2004), 241, doi: 10.1080/13518040490450529.

[93] Timothy Thomas, “Russia’s Reflexive Control Theory and the Military,” 243.

[94] Ibid., 241.

[95] Ibid., 241-242.

[96] Ibid.

[97] Can Kasapoglu, “Russia’s Renewed Military Thinking: Non-Linear Warfare and Reflexive Control,” NATO Defense College, November 2015, no. 121 (2015), 6, http://www.jstor.org/stable/resrep10269.

[98] Emilio Iasiello, “Russia’s Improved Information Operations: From Georgia to Crimea,” Parameters, 47, no. 2 (2017), 52, http://ssi.armywarcollege.edu/pubs/Parameters/issues/Summer_2017/8_Iasiello_RussiasImprovedInformationOperations.pdf.

[99] Emilio Iasiello, “Russia’s Improved Information Operations,” 52-53.

[100] Ibid., 53.

[101] Thomas D. Grant, “Annexation of Crimea,” American Journal of International Law, 109, no. 1 (2015), 70-71, doi: 10.5305/amerjintelaw.109.1.0068.

[102] Can Kasapoglu, “Russia’s Renewed Military Thinking,” 6.

[103] Emilio Iasiello, “Russia’s Improved Information Operations,” 54.

[104] Ibid., 56.

[105] Can Kasapoglu, “Russia’s Renewed Military Thinking,” 10.

[106] Ibid., 2, 5.

[107] Emilio Iasiello, “Russia’s Improved Information Operations,” 56-57.

[108] Ibid.

[109] Maria Snegovaya, Putin’s Information Warfare in Ukraine: Soviet Origins of Russia’s Hybrid Warfare (Russia Report 1), (Washington, D.C.: Institute for the Study of War, 2015), 21, http://www.understandingwar.org/sites/default/files/Russian%20Report%201%20Putin%27s%20Information%20Warfare%20in%20Ukraine%20Soviet%20Origins%20of%20Russias%20Hybrid%20Warfare.pdf.

[110] Shaun Walker, “Putin Admits Russian Military Presence in Ukraine for First Time,” The Guardian, last modified December 17, 2015, https://www.theguardian.com/world/2015/dec/17/vladimir-putin-admits-russian-military-presence-ukraine.

[111] Gabriela Galindo, “White House: US Does Not Recognize Russia’s Claim on Crimea,” Politico, last modified July 3, 2018, https://www.politico.eu/article/russia-claim-on-crimea-united-states-does-not-recognize/.

[112] Emilio Iasiello, “Russia’s Improved Information Operations,” 55.

[113] Ibid., 57-59.

[114] Ibid., 56-59.

[115] Ibid., 58.

[116] Ibid., 55.

[117] Ibid., 56.

[118] U.S. Department of the Army, The Operational Environment and Changing Character of Future Warfare, 9.

[119] Anne-Marie Brady and Wang Juntao, “China’s Strengthened New Order and the Role of Propaganda,” Journal of Contemporary China, 18, no. 62 (2009), 784, doi: 10.1080/10670560903172832.

[120] Jayson M. Spade, Information as Power: China’s Cyber Power and America’s National Security, (Carlisle Barracks, Pennsylvania: U.S. Army War College, 2012), 11, https://www.hsdl.org/?view&did=719179.

[121] Larry Wortzel, The Chinese People’s Liberation Army and Information Warfare, (Carlisle, PA: U.S. Army War College Strategic Studies Institute, 2014), 1, https://ssi.armywarcollege.edu/pubs/display.cfm?pubID=1191.

[122] Dean Cheng, “PLA Views on Informationized Warfare, Information Warfare and Information Operations,” in Chinese Cybersecurity and Defense, ed. Daniel Ventre (Hoboken, NJ: John Wiley & Sons, 2014), 56-57.

[123] Dean Cheng, “PLA Views on Informationized Warfare,” 56-57.

[124] Ibid., 57.

[125] Dean Cheng, “The Chinese People’s Liberation Army and Special Operations,” Special Warfare, 25, no. 3 (2012), 24, http://www.soc.mil/swcs/SWmag/archive/SW2503/SW2503TheChinesePeoplesLiberationArmy.html.

[126] M. Taylor Fravel, “China’s New Military Strategy,” 3.