Foundations for Assessment: The Hierarchy of Evaluation and the Importance of Articulating a Theory of Change

Dr. Christopher Paul

Abstract

There is currently a great deal of (appropriate and needed) attention to assessment in a range of defense communities. Two foundational elements for planning and conducting assessments can benefit these communities: explicit theories of change and a hierarchy for evaluation. Having an explicit theory of change helps the assessor identify what to measure and supports an assessment structure that tests assumptions as hypotheses, which leads to improved operations, outcomes, and assessments. The hierarchy of evaluation described here includes five nested levels: (1) needs, (2) design and theory, (3) process and implementation, (4) outcomes/impact, and (5) cost-effectiveness. Rigorous assessments capture all of these levels and recognize their nested relationship, which helps the assessor match assessments to stakeholder needs and identify where improvements are needed when operations do not produce desired outcomes.

Foundations for Assessment

The U.S. Department of Defense and supporting intellectual communities are abuzz with discussions about assessment and how it is conducted. The topic has been prominent in recent working groups and conferences and is a focus of think-tank studies. Numerous articles, reports, and white papers have pointed out challenges associated with assessment or have made suggestions for its improvement.[i] Assessment in doctrine is the subject of ongoing debate, with much internal discussion of how assessment should be treated in pending revisions to both joint and service publications, and plans are under consideration for a new, separate manual on the topic.

Admittedly, assessment—especially for military efforts or operations not principally governed by physics—can be tricky. It is relatively easy to observe the amount of destruction levied on, say, a bridge by an air sortie, and it is similarly relatively straightforward to determine whether that level of destruction is sufficient to meet campaign objectives or whether an additional sortie is required. It is much more difficult to assess the effectiveness of development aid, efforts to build partner-nation capabilities, or efforts to influence foreign audiences, let alone to assess the overall contribution of myriad such activities and progress toward the goals of a larger irregular warfare campaign.

Despite the challenge, done right, the juice is worth the squeeze. Effective assessment can support critical decisionmaking; lead to the termination or revision of struggling efforts; improve resource allocation; foster continual improvement at the strategic, operational, and tactical levels; and help turn failures into successes. The discussions surrounding assessment have revealed many strong practices, numerous useful insights, and a range of broadly applicable principles. They have also prompted critical disagreements and produced some less-than-useful advice.

This essay does not resolve any of the ongoing debates, nor does it review, synthesize, or even make much further mention of the many good (and some poor) ideas put forward in contemporary discussion. It does, however, offer two principles that have been foundational to the way I think, write about, and conduct assessments: the importance of an explicit theory of change and the hierarchy of evaluation. This short essay explores these two principles with the hope that they enter and are embraced by ongoing discussions in this area.

An Explicit Theory of Change Facilitates Assessment

Implicit in many examples of effective assessment and explicit in much of the work by scholars of evaluation research is a theory of change. The theory of change for an activity, line of effort, or operation is the underlying logic for how planners think elements of the overall activity, line of effort, or operation will lead to desired results. Simply put, a theory of change is a statement of how you believe the things you are doing are going to lead to the objectives you seek. A theory of change can include assumptions, beliefs, or doctrinal principles. The main benefit of articulating a theory of change in the assessment context is that it allows assumptions of any kind to be turned into hypotheses. These hypotheses can then be tested explicitly as part of the assessment process, with any failed hypotheses replaced in subsequent efforts until a validated, logical chain connects activities with objectives and objectives are met.

Here is an example theory of change: Training and arming local security guards makes them more able and willing to resist insurgents, which will increase security in the locale. Increased security will lead to increased perceptions of security, which will promote participation in local government, which will lead to better governance. Improved security and better governance will lead to increased stability.

This theory of change shows a clear logical connection between the activities (training and arming locals) and the desired outcome (increased stability). It makes some assumptions, but those assumptions are clearly stated, so they can be challenged if they prove incorrect. Further, those activities and assumptions suggest things to measure: performance of the activities (the training and arming) and the outcome (change in stability), to be sure, but also elements of all the intermediate logical nodes: capability and willingness of local security forces, change in security, change in perception of security, change in participation in local government, change in governance, and so on. Better still, if one of those measurements does not yield the desired results, assessors will have a pretty good idea of where in the chain the logic is breaking down (which hypotheses are not substantiated).[ii] They can then make changes to the theory of change and to the activities being conducted, reconnecting the logical pathway and continuing to push toward the objectives.
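The idea of locating where the logic breaks down can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Node` class, the `first_break` function, and the true/false measurement values are hypothetical and are not drawn from any real assessment; how each node would actually be measured is left open, as in the essay.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    name: str
    # True  = measures show the desired change (hypothesis supported)
    # False = measures do not show the desired change
    # None  = not yet measured
    met: Optional[bool] = None


def first_break(chain):
    """Return (upstream, downstream) names for the first link where the
    upstream node is met but the downstream node is not, else None."""
    for upstream, downstream in zip(chain, chain[1:]):
        if upstream.met is True and downstream.met is False:
            return upstream.name, downstream.name
    return None


# The example theory of change, with invented (illustrative) results.
chain = [
    Node("guards trained and armed", True),
    Node("guards able and willing to resist insurgents", True),
    Node("security increases", True),
    Node("perception of security increases", False),
    Node("participation in local government increases", False),
    Node("governance improves", None),
    Node("stability increases", None),
]

print(first_break(chain))
# ('security increases', 'perception of security increases')
```

In this invented case the break sits between security and perceived security, which is the hypothesis an assessor would revisit first.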

Such a theory of change might have begun as something quite simple: Training and arming local security guards will lead to increased stability. While this gets at the kernel of the idea, it isn’t particularly helpful for building assessments. Stopping there would suggest that we only need to measure the activity and the outcome. However, it leaves a huge assumptive gap. If training and arming go well but stability does not increase, we’ll have no idea why. To begin to expand on a simple theory of change, ask the question, “Why? How might A lead to B?” (In this case, how do you think training and arming will lead to stability?) A thoughtful answer to this question usually leads one to add another node to the theory of change. If needed, the question can be asked again relative to this new node until the theory of change is sufficiently articulated.

How do you know when the theory of change is sufficiently articulated? There is no hard-and-fast rule; it is at least as much art as it is science. Too many nodes, too much detail, and you end up with something like the infamous “Afghan Stability/COIN Dynamics” spaghetti diagram. Add too few nodes and you end up with something too simple that leaves too many assumptive gaps. If an added node invokes thoughts like “Well, that’s pretty obvious,” perhaps it is overly detailed.

Fortunately, if an initial theory of change is not sufficiently detailed in the right places or does not fit well in a specific operating context, iterative assessments will point toward places where additional detail is required. As assessment proceeds, whenever a measurement is positive on one side of a node but negative on the other and you cannot tell why, that is where either a mistaken assumption has been made or an additional node is required. For example, imagine a situation in which measures show real increases in security (reduced SIGACTs, reduced total number of attacks/incursions, reduced casualties/cost per attack, all seasonally adjusted), but measures of perception of security (from surveys and focus groups, as well as observed market/street presence) do not correspond. Because we are not willing to give up on the assumption that improvements in security lead to improvements in perception of security, we need to look for another node. We can speculate and add another node, or we can do some quick data collection, getting a hypothesis from personnel operating in the area or from a special focus group in the locale. Perhaps the missing node is awareness of the changing security situation. If preliminary information confirms this as a plausible gap, then this not only suggests an additional node in the theory of change and an additional factor to measure, but it also indicates the need for a new activity: some kind of effort to increase awareness of changes in the security situation.
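As a rough sketch of that revision step, the snippet below inserts the hypothesized "awareness" node between security and perceived security and lists which nodes still lack a measure. The function name `insert_node`, the node labels, and the measure names are assumptions made for illustration only.

```python
def insert_node(chain, after, new_node):
    """Return a new chain with new_node placed directly after `after`.
    Raises ValueError if `after` is not in the chain."""
    i = chain.index(after)
    return chain[: i + 1] + [new_node] + chain[i + 1:]


chain = [
    "security increases",
    "perception of security increases",
    "participation in local government increases",
]

# Hypothetical measures already in place for the original nodes.
measures = {
    "security increases": ["SIGACTs", "attacks/incursions"],
    "perception of security increases": ["surveys", "focus groups"],
    "participation in local government increases": ["meeting attendance"],
}

revised = insert_node(
    chain,
    "security increases",
    "locals are aware of the improved security",
)

# Any node without a measure is a new data-collection requirement.
unmeasured = [node for node in revised if not measures.get(node)]
print(unmeasured)
# ['locals are aware of the improved security']
```

The new node shows up both as a link to test and as a gap in the measurement plan, mirroring the point that a revised theory of change can imply a new measure and a new activity.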

Improvements to the theory of change improve assessments, but they can also improve operations. Further, articulating a theory of change during planning allows you to begin your activities with some questionable assumptions—and with the confidence that they will be either validated by assessment or revised. Theory of change–based assessment supports learning and adapting in operations. This approach can also help tailor generic operations and assessments to specific contexts. By treating a set of generic starting assumptions as just that, a place to start, and testing those assumptions as hypotheses, a theory of change (and the operations and assessments it supports) can evolve over time to accommodate contextually specific factors, whether such factors are cultural, the result of individual personalities, or just the complex interplay of different distinct elements of a given environment or locale.

A preliminary theory of change can be improved not only by asking after connective nodes (“How might A lead to B?”) but also by asking after missing disruptive nodes. Asking, “What might prevent A from leading to B?” or “What could disrupt the connection between A and B?” can help identify possible logical spoilers or disruptive factors. Again, by articulating the possible disruptor as part of the theory of change, it can then be added to the list of things to attempt to measure. For example, when connecting training and arming local guards to improved willingness and capability to resist insurgents, we might red-team disruptive factors such as “trained and armed locals defect to the insurgency” or “local guards sell weapons instead of keeping them.” We can then add these spoilers as an alternative path on our theory of change and attempt to measure the possible presence of these spoilers.

Another way to ensure that a theory of change is sufficiently articulated is to make sure that it has nodes (and accompanying measures) on at least three levels (Level 2, Level 3, and Level 4) of the hierarchy of evaluation discussed in the next section.

The Hierarchy of Evaluation[iii]

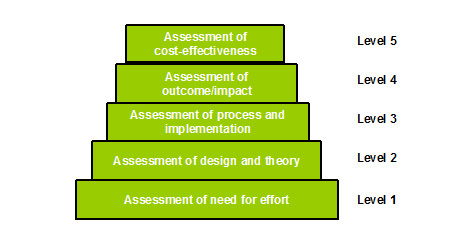

“The hierarchy of evaluation” as developed by evaluation researchers Peter Rossi, Mark Lipsey, and Howard Freeman[iv] is presented in the figure. The hierarchy divides all potential evaluations and assessments into five nested levels. They are nested in that each higher level is predicated on success at a lower level. For example, positive assessments of cost-effectiveness (the highest level) are possible only if supported by positive assessments at all other levels. Further details can be found in the subsection “Hierarchy and Nesting,” below.

The Hierarchy of Evaluation

SOURCE: Adapted from Figure 7.1 in Christopher Paul, Harry J. Thie, Elaine Reardon, Deanna Weber Prine, and Laurence Smallman, Implementing and Evaluating an Innovative Approach to Simulation Training Acquisitions, Santa Monica, Calif.: RAND Corporation, 2006.

Level 1 – Assessment of Need for Effort

Level 1, at the bottom of the hierarchy and foundational in many respects, is the assessment of the need for the program or activity. This is where evaluation connects most explicitly with target ends or goals. Evaluation at this level focuses on the problem to be solved or goal to be met, the population to be served, and the kinds of services that might contribute to a solution.[v]

Evaluation at the needs-assessment level is often skipped, being regarded as wholly obvious or assumed away. Where such a need is genuinely obvious or the policy assumptions are good, this is not problematic. Where need is not obvious or goals are not well articulated, troubles in Level 1 of the evaluation hierarchy can complicate assessment at each higher level.

Level 2 – Assessment of Design and Theory

The assessment of concept, design, and theory is the second level in the hierarchy. After a needs assessment establishes that there is a problem or a policy goal to pursue, as well as the intended objectives of such a policy, different solutions can be considered. Assessment at this level focuses on the design of a policy or program and is where an explicit theory of change should be articulated and assessed. This is a critical and foundational level in the hierarchy. If program design is based on poor theory or mistaken assumptions, then perfect execution may still not bring desired results. Similarly, if the theory does not actually connect the activities with the objectives, efforts may generate effects other than those intended. Unfortunately, this level of evaluation, too, is often skipped or completed minimally and based on unfounded assumptions.

Note that assessments at this level are not about execution (i.e., “Are the services being provided as designed?”). Such questions are asked at the next level, Level 3. Design and theory assessments (Level 2) seek to confirm that what is planned is adequate to achieve the desired objectives.

Level 3 – Assessment of Process and Implementation

Level 3 in the hierarchy of evaluation focuses on program operations and the execution of the elements prescribed by the theory and design in Level 2. Efforts can be perfectly executed but still not achieve their goals if the design is inadequate. Conversely, poor execution can foil the most brilliant design. For example, a well-designed series of training exercises could fail to achieve desired results if executing personnel do not show up or do not have the needed equipment.

Level 3 evaluations include “outputs,” the countable deliverables of a program. Traditional measurements at Level 3 are measures of performance (MOP).

Level 4 – Assessment of Outcomes

Level 4 is near the top of the evaluation hierarchy and concerns outcomes and impact. At this level, outputs are translated into outcomes or achievements. Put another way, outputs are the products of activities, and outcomes are the changes resulting from these efforts. This is the first level of assessment at which solutions to the problem that originally motivated the efforts can be seen. Measures at this level are often referred to as measures of effect (MOE).

Level 5 – Assessment of Cost-Effectiveness

The assessment of cost-effectiveness sits at the top of the evaluation hierarchy, at Level 5. Only when desired outcomes are at least partially observed can efforts be made to assess their cost-effectiveness. Simply stated, before you can measure “bang for buck,” you have to be able to measure “bang.”

Evaluations at this level are often most attractive in bottom-line terms, but they depend heavily on lower levels of evaluation. It can be complicated to measure cost-effectiveness in situations with unclear resource flows or when exogenous factors significantly affect outcomes. As the highest level of evaluation, this assessment depends on the lower levels, as described in the next section, and can provide feedback inputs for policy decisions primarily based on the lower levels. For example, if target levels of cost-efficiency are not being met, cost data (Level 5) in conjunction with process data (Level 3) can be used to streamline the process or otherwise selectively reduce costs.

Hierarchy and Nesting

This framework is a hierarchy because the levels nest with each other; solutions to problems observed at higher levels of assessment often lie at levels below. If the desired outcomes (Level 4) are achieved at the desired levels of cost-effectiveness (Level 5), then lower levels of evaluation are irrelevant. But what about when they are not?

When desired high-level outcomes are not achieved, information from the lower levels of assessment needs to be available and examined. For example, if an effort is not realizing target outcomes, is that because the process is not being executed as designed (Level 3) or because the theory of change is incorrect (Level 2)? Evaluators have problems when an assessment scheme does not include evaluations at a sufficiently low level to inform effective policy decisions and diagnose problems. When the lowest levels of evaluation have been assumed away, that is acceptable only if the assumptions prove correct.
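The diagnostic use of nesting can be illustrated with a small Python sketch. It assumes each level's assessment can be summarized as satisfactory (`True`), unsatisfactory (`False`), or not assessed (`None`); that three-way summary, the `diagnose` function, and the sample results are simplifying assumptions for illustration, not part of the hierarchy as published.

```python
LEVELS = {
    1: "need",
    2: "design and theory",
    3: "process and implementation",
    4: "outcomes/impact",
    5: "cost-effectiveness",
}


def diagnose(results):
    """Given level -> True / False / None, return the lower levels to
    examine when some level is unsatisfactory, lowest level first."""
    failed = [lvl for lvl in LEVELS if results.get(lvl) is False]
    if not failed:
        return []  # nothing unsatisfactory, so nothing to diagnose
    top = max(failed)
    return [
        (lvl, LEVELS[lvl],
         "unsatisfactory" if results.get(lvl) is False else "not assessed")
        for lvl in range(1, top)
        if results.get(lvl) is not True
    ]


# Invented example: execution looks fine, outcomes do not, and the two
# lowest levels were assumed away rather than assessed.
results = {1: None, 2: None, 3: True, 4: False}
print(diagnose(results))
# [(1, 'need', 'not assessed'), (2, 'design and theory', 'not assessed')]
```

Here the sketch points back to the unassessed lower levels, which is the situation the paragraph above warns about.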

A thoughtfully articulated theory of change helps identify which assumptions can and should be measured as part of assessment. A good theory of change will include nodes on at least three levels of the hierarchy: Level 2 (assessment of design and theory), Level 3 (assessment of process and implementation), and Level 4 (assessment of outcomes).

In doctrine, MOP (clearly at Level 3) are described as contributing to the assessment that asks, “Are we doing things right?” while MOE (at Level 4) are described as contributing to assessments that ask, “Are we doing the right things?” Although these are both good questions, in my view they do not align well with MOP and MOE. While MOP at Level 3 can be adequately described as answering “Are we doing things right?” the question “Are we doing the right things?” is appropriate for Level 2, the assessment of design and theory. MOE, at Level 4, answer the question “Are we seeing the results we want?” Assessing at only Level 3 (MOP) and Level 4 (MOE) does not necessarily answer questions about whether the activities performed are the right ones. While satisfactory MOP and unsatisfactory MOE will provide some information, a scheme with an articulated and assessed theory of change, and with measures at Level 2 (design and theory), is much more likely to produce actionable information should outcomes/MOE at Level 4 not be satisfactory.

Taken together, the imperative of an explicit theory of change and the insights drawn from the hierarchy of evaluation provide an excellent foundation for thinking about assessment. They do not do the hard work of identifying indicators or collecting data, but they do provide a framework that makes it more likely that the hard work of conducting assessment will actually pay off in improved decisions and incremental improvement over time.

[i] See, for example, Ben Connable, Embracing the Fog of War: Assessment and Metrics in Counterinsurgency, Santa Monica, Calif.: RAND Corporation, MG-1086-DOD, 2012; William P. Upshur, Jonathan W. Roginski, and David J. Kilcullen, “Recognizing Systems in Afghanistan: Lessons Learned and New Approaches to Operational Assessments,” Prism, Vol. 3, No. 3, pp. 87–104; David C. Becker and Robert Grossman-Vermaas, “Metrics for the Haiti Stabilization Initiative,” Prism, Vol. 2, No. 2, March 2011, pp. 145–158; Stephen Downes-Martin, “Operations Assessment in Afghanistan Is Broken: What Is to be Done?” Naval War College Review, Vol. 64, No. 4, Autumn 2011, pp. 103–125; Jonathan Schroden, “Why Operations Assessments Fail,” Naval War College Review, Vol. 64, No. 4, Autumn 2011, pp. 88–102; Dave LaRivee, “Best Practices Guide for Conducting Assessments in Counterinsurgencies,” Small Wars Journal, August 17, 2011; Jennifer D. P. Moroney, Beth Grill, Joe Hogler, Lianne Kennedy-Boudali, and Christopher Paul, How Successful Are U.S. Efforts to Build Capacity in Developing Countries? A Framework to Assess the Global Train and Equip “1206” Program, TR-1121-OSD, Santa Monica, Calif.: RAND Corporation, 2011.

[ii] Note this essay makes no prejudgments about how exactly an assessor should measure these things (quantitative, qualitative, or otherwise), how they should be summarized, or how exactly sufficiency of meeting desired results should be assessed.

[iii] The text in this section is partially drawn from the author’s contribution to How Successful Are U.S. Efforts to Build Capacity in Developing Countries? A Framework to Assess the Global Train and Equip “1206” Program (Moroney et al., 2011).

[iv] Peter H. Rossi, Mark W. Lipsey, and Howard E. Freeman, Evaluation: A Systematic Approach, 7th ed., Thousand Oaks, Calif.: Sage Publications, 2004.

[v] Rossi, Lipsey, and Freeman, 2004, p. 76.

Comments

Dr. Paul,

I started reading this article with a very critical eye, because I largely see the cottage industry of assessments as the curse of McNamara that simply won't go away. It is fake science little better than snake oil in most cases. The result is a situation where the tail wags the dog, and we just can't seem to get away from it.

We seem to wish away the fact that, if we're talking about war and warfare, our assessments of projects and individual actions mean little if our adversary is still willing to fight. An area that is secure one week is not secure the next. If kids are going to school in villages A, B, and C because we built the schools and aggressively patrol the area to reduce the overt insurgent presence, yet the Taliban still maintains a shadow government that is not always visible to us, and it is they who appoint the teachers who then promote radicalization, is that success or failure? What beans should we count?

I really like your description of the theory of change, but somewhere along the line you made me chuckle with your explanation that if a commander doesn't like the results of his assessment and really believes in his theory of change, you can simply change what you assess. You wrote that if people don't think they're more secure, then show them your assessment, so I assume they'll be more likely to say they feel better when asked again. Isn't that creating a lie to support what the commander wants to believe? This further demonstrates that our assessment process is a self-licking ice-cream cone that simply confirms what our commanders want to hear. Instead of telling the people they're more secure even if they don't feel that way, maybe we should ask why they feel insecure. Are there incidents that are not reported (guaranteed)? Are they afraid of our troops (collateral damage)? Are they thinking ahead to after we leave and the retaliation they'll suffer? As you said, it is at least as much art as science.

If we don't select and promote mature leaders who are self-critical instead of the ones who want the system to conform to their perceptions, then assessments will never contribute to decision making in a meaningful way.

Your theory of change argument is sound, but do we really need a cottage industry of assessors? Do we really have to develop objectives that are measurable to ensure the cottage industry survives and continues to wag the dog? Wouldn't it be better to simply have commanders and leaders at all levels who are self-critical and constantly assess the environment and adjust their plans accordingly instead of assessors counting irrelevant beans that mislead as often as they inform commanders?

Show me where assessments made a difference. Our assessments said what we wanted them to say for decades (Vietnam, Kosovo, Iraq, Afghanistan, etc.) and rarely resulted in plan changes for the better, and unfortunately resulted in some bad strategies not being changed because assessors could provide the data to support staying the course.

Do we need to assess? Absolutely. Do we need to stand up a separate system and a cottage industry of assessors to do it? Not at all; we just need to train our force to constantly assess the impact of their operations based on their theory of change.

The fact is we produced the cottage industry to respond to the never-ending demand for metrics from Congress and the GAO, but it has little to do with actual success on the ground.