Operationalizing Big Data

Robert E. Smith

“Serious sport is war minus the shooting.”

-- George Orwell

Discussing how to operationalize the new Army Operating Concept in the face of technical parity, TRADOC CG GEN David G. Perkins stated, “Where we have the advantage is the way that our technology interfaces with the Soldiers, the Soldier-technology interface, the way that, again, they can innovate with that, adapt and innovate. How quickly can they adapt to the conditions that they’re operating in, and how rapidly can we increase that rate of innovation?” (Perkins, 2015) In a complex world, the range of military operations is staggering. To realize Perkins’ vision, there are two areas in which the Army needs to advance. The first is that Soldiers must accrue experience at a much faster rate over a wide range of operations so they can adapt and innovate. The second is that equipment performance requirements and how equipment is used must be intrinsically linked in the future: a heavily armored, slow vehicle will be used differently than a fast, light, mobile vehicle for the same mission. Perkins states that a capability is “technology in the hands of Soldiers, who are trained how to use it, and can apply it on the battlefield.” (Perkins, 2014) So how do we find optimal capabilities? Some clues are provided by emerging professional sports and video gaming technologies.

George Orwell once stated that “serious sport is war minus the shooting.” Coaches spend hours and hours watching and reviewing players and movements. They aggregate movements and plays in their minds and try to derive tendencies and strategies from thousands of movement traces. They might make predictions on what areas of the field a player is most likely to operate in, where the player is most likely to pass, and where the player is most likely to take a shot. The ability of a coach to understand behaviors and capabilities wins and loses games. In a similar way, understanding Soldier and squad behavior may win or lose engagements. While humans are remarkable at this, the brain simply isn’t designed to aggregate hundreds or thousands of traces. Optical tracking of player movements using multiple cameras enables computers to discover patterns that are too complex or subtle for humans. Tracking is done at resolutions as fine as 15 frames per second, yielding enormous data sets. This comes out to multiple billions of data points per season. Advances in machine learning are opening new paths to capitalize on big data.

According to William Roper, the Director of the DoD’s Strategic Capabilities Office, in a 2017 talk on the future of warfare (Roper, 2017): “Data is going to be one of the primary tools, fuels, and weapons in future warfare. We don’t treat data the same way that a company like Google, or Apple, or Amazon do. For us data is kind-of like the exhaust that comes out of our systems… that is not the way people working machine learning or AI are thinking. They’re thinking the people who have the most data is going to be able to train the most intelligent machine and that machine is going to have the best advantage.” Roper suggests the Pentagon should be stockpiling all of its data from every flight, every mission, and every exercise in a way that is machine discoverable. He expresses a fear of us not doing this because “what if in the future we fight a military all of whose systems learn. So, day 2 of the war they’re smarter than day 1. But we’re fighting with systems that don’t learn. Then we put the burden completely on people to try and make whatever they need to do work in a system that is effectively fixed.” Roper points out “Try to take a pentagon that is device centric—device being like fighter, bomber, submarine, or tank – and shift it to be data centric. To merely think of their systems as being data producers and the data being more important than the systems themselves.”

Exploiting Virtual Reality

Military training in the future will move more and more into the virtual domain. As this shift occurs, it is important that Soldiers are trained to be creative and thrive in new and complex situations. In his book, “Head Strong: How Psychology is Revolutionizing War” (Matthews, 2013), Matthews imagines a future virtual training environment where a fictional Soldier trains in a virtual simulator. The simulator systematically exposes the Soldier to a deep and broad variety of simulations. He/she can move through a virtual world that sounds, feels, and looks like the real world. With each exposure, the learner begins to build his or her library of experiences and scripts to tap into when intuitive decisions are called for. Matthews points out that experts with massive amounts of experience, such as master chess players, firefighters, law enforcement officers, and others who make quick and difficult decisions build huge mental libraries of scripts and patterns. Despite the uncertainty of the situation, the expert usually sees a pattern and arrives at a single course of action. If the decision is not effective, the expert usually can invoke a modification to the original plan easily. If patterns can be discovered in vast training data, it may be possible to train Soldiers to an expert level much more quickly. Likely, these optimal patterns are unique to each Soldier based on tastes, preferences, physical/mental abilities, culture, and brain wiring.

Already the Army is progressing down this path. The Dismounted Soldier Training System, or DSTS, is a virtual training tool that is quickly becoming the norm for Soldiers of the 157th Infantry Brigade, First Army Division East. The DSTS works as follows: Each Soldier stands on a four-foot diameter rubber pad. This pad is the center of a 10-foot by 10-foot training area for each squad member, and the pad ensures Soldiers remain in a specific area within the training suite. Soldiers can see and hear the virtual environment and also communicate with members of the squad using a helmet-mounted display with headphone/microphone set. "A Soldier uses his body to perform maneuvers, such as walking or throwing a hand grenade, by physically making those actions. The sensors capture the Soldier's movements, and those movements are translated to control the Soldier's avatar within the simulation," explained Matthew Roell, DSTS operator. (Zamora, 2013)

Figure 1: Dismounted Soldier Training System, or DSTS. Soldiers of the 157th Infantry Brigade, First Army Division East, take advantage of the Dismounted Soldier Training System. The DSTS provides a realistic virtual training platform programmable for any theater of operations while mitigating risk. (Zamora, 2013)

Figure 2: Dismounted Soldier Training System, or DSTS. A visitor to the Dismounted Soldier Training System can observe what individual Soldiers are viewing, through the helmet-mounted display, on large flat screen television monitors. (Zamora, 2013)

Training could produce a vast amount of data on effective warfighting. In the future complex world, it is impossible for trainers to develop a playbook and optimal solution to every imaginable problem. For multidomain megacity warfare, there may be thousands of paths to mission success. Using the data from physics-based virtual reality trainers, it may be possible for the trainers to learn from the trainees what seems to work. The important thing is to train Soldiers to thrive in complex situations.

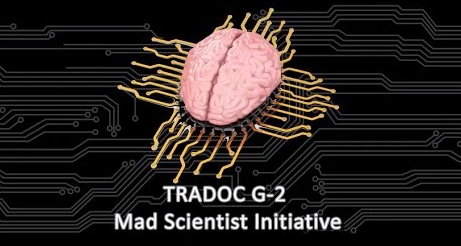

Early Synthetic Prototyping

Most people think only of training when they consider virtual worlds. In the future, equipment will also be tested on a virtual battlefield for the purposes of materiel acquisition. Equipment performance requirements must be intrinsically linked to how that equipment is used tactically, operationally, and strategically. The Army Capabilities Integration Center (ARCIC) is currently standing up a game-based future sandbox environment called Early Synthetic Prototyping (ESP). The ESP initiative offers a viable methodology to determine what combination of tactics and materiel is optimal over various scenarios. The goal is to allow any Soldier to log in with a Common Access Card (CAC) and fight future concepts in a virtual gaming environment. ESP will enable thousands of Soldiers to tailor tactics, strategies, force structures, and materiel to try to minimize cost and maximize mission effectiveness. ESP hopes to harness the free flow of ideas between technologists, program offices, and Soldiers to identify and assess concepts early in the design phase, when costs are low. A leaderboard and discussion forum will help enhance idea exploration and piggybacking of new ideas. Consider that within one month of the release of Call of Duty: Black Ops, gamers had accumulated 68,000 years of play. Similarly, the DoD hopes to collect data in quantities never before explored. ESP expects to collect upwards of 120,000 hours of gameplay telemetry yearly. Figure 3 illustrates how understanding the operational environment quantitatively can directly drive performance specifications for the acquisition community.

Figure 3: Digital Warfighting based Tradespace Exploration. The Acquisitions community largely cares about performance requirements, unit cost, developmental risk, operation and sustainment cost, and growth potential. Linking tradespace analysis to virtual battles allows requirements to be tied to operational outcomes by rank ordering them, deriving performance utility curves, and assessing mission sensitivity.

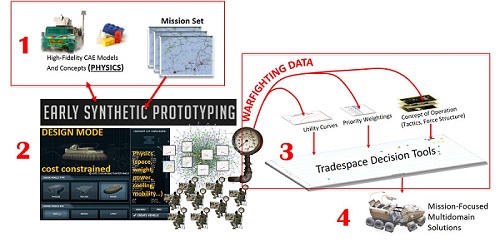

TARDEC has run a number of experiments on future ground vehicle concepts by repurposing commercial games. TARDEC was able to observe how Soldiers might operate future equipment and how terrain and mobility interact, and to stimulate discussions with Soldiers by letting them experience the future operating environment. Every experiment has resulted in unique and creative ideas from the units participating. In future experiments, TARDEC hopes to allow Soldiers to customize their vehicles within a cost budget and see how that affects tactics. With enough data, this would allow the calculation of tactical utility curves for requirement parameters. Figure 4 shows an example of an experiment conducted with TRADOC ARCIC at Ft. Bliss to understand how Soldiers might use the Next Generation Close Combat Vehicle IFV shown in Figure 5. The elevating suspension was a key feature for mine blast protection, the ability to pop up and return to defilade, and off-road mobility. However, the vehicle in an elevated position is also more visible and more prone to rollover.

Figure 4: Next Generation Close Combat Vehicle Virtual Demonstrator Test. Conducted at Ft. Bliss, Brigade Modernization Command, Dec. 2014. 76 Soldiers over two days.

Figure 5: Next Generation Close Combat Vehicle Study. The concept model was run through high-fidelity physics-based engineering models and input into the VBS3 game environment with proper engineering performance.
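The tactical utility curve mentioned above could, in principle, be estimated directly from logged game outcomes. The following is only a minimal sketch under stated assumptions: the function name `utility_curve`, the choice of top speed as the requirement parameter, and the trial data are all hypothetical and are not taken from the TARDEC experiments.

```python
from collections import defaultdict


def utility_curve(trials, bin_width):
    """Bin (parameter_value, mission_success) trials by parameter value
    and return {bin_lower_edge: success_rate} in ascending order."""
    wins = defaultdict(int)
    counts = defaultdict(int)
    for value, success in trials:
        edge = (value // bin_width) * bin_width  # lower edge of the bin
        counts[edge] += 1
        if success:
            wins[edge] += 1
    return {edge: wins[edge] / counts[edge] for edge in sorted(counts)}


# Hypothetical data: (vehicle top speed in km/h, mission succeeded?)
trials = [(42, False), (47, False), (55, True), (58, False),
          (63, True), (66, True), (71, True), (78, True)]

curve = utility_curve(trials, bin_width=10)
for edge, rate in curve.items():
    print(f"{edge}-{edge + 10} km/h: {rate:.0%} mission success")
```

With thousands of games rather than eight, the point where the curve flattens would suggest where additional performance stops buying additional mission success.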

Immediately after finishing the first experiment at Ft. Bliss, the team was asked questions which were unanswerable from just manually replaying the after-action reviews of each individual game. Example questions asked were:

General (requires abstraction)

- What are they doing?

- Why are they doing it?

- How effective is this?

- When do entities see each other on the battlefield (effects of signature management, information warfare)?

Shoot

- Threat Cardioid (engagement by sector) and what engaged

- Number of rounds expended

- Active protection

Move

- Where are they looking?

- Vehicle speed

- Terrain versus movement choices

- Fuel economy (can be backed out later using engineering engine simulations)

Communicate

- What are they talking about/when/how often/where/with whom?
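Several of the questions above reduce to simple aggregations once game telemetry is logged. As one illustration, the engagement-by-sector question could be approached by binning the bearing of each engagement relative to the vehicle's heading. This is a sketch only: the event tuple layout, the function name `engagement_sectors`, and the sample events are assumptions and do not describe the telemetry format of any actual game engine.

```python
import math
from collections import Counter


def engagement_sectors(events, n_sectors=8):
    """Count engagements per angular sector around the vehicle.

    Each event is (vehicle_x, vehicle_y, heading_deg, threat_x, threat_y),
    with heading measured clockwise from north (+y). Sector 0 is centered
    on the vehicle's nose and sectors increase clockwise.
    """
    width = 360 / n_sectors
    counts = Counter()
    for vx, vy, heading, tx, ty in events:
        # Bearing to the threat, clockwise from north
        bearing = math.degrees(math.atan2(tx - vx, ty - vy))
        relative = (bearing - heading) % 360
        sector = int(((relative + width / 2) % 360) // width)
        counts[sector] += 1
    return [counts[i] for i in range(n_sectors)]


# Hypothetical engagement log
events = [
    (0, 0, 0, 0, 100),    # threat dead ahead
    (0, 0, 0, 100, 0),    # threat to the right
    (0, 0, 0, 0, -100),   # threat to the rear
    (0, 0, 90, 100, 0),   # vehicle facing east, threat dead ahead
    (0, 0, 0, -100, 0),   # threat to the left
]
sectors = engagement_sectors(events)
print(sectors)
```

Plotting the per-sector counts on a polar chart gives the cardioid-style view of where threats were engaged from.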

DARPA OFFSET

Clearly, for autonomous weapons systems, a smarter artificial intelligence will create a major advantage. An even larger advantage is generated when an optimized human-technology interface is developed. A new DARPA program called OFFensive Swarm-Enabled Tactics (OFFSET) seeks to empower troops with technology to control scores of inexpensive unmanned air and ground vehicles at a time. Inexpensive drones are available on the global marketplace. William Roper, director of the Strategic Capabilities Office, suggests (Roper, 2017) “our investment is in the high end of low technology. Let’s add software that allows those systems to collaborate, to function with something like a fighter or ship, or submarine in a way a terrorist group could never do. Software is easy to protect, hardware is not.”

The bottleneck to developing effective swarming software is the lack of a swarm tactics development ecosystem. DARPA states OFFSET seeks to develop a real-time, networked virtual environment that would support a physics-based, swarm tactics game. In the game, players would use the interface to rapidly explore, evolve, and evaluate swarm tactics to see which would potentially work best on various unmanned platforms in the real world. Users could submit swarm tactics and track their performance from test rounds on a leaderboard, as well as dynamically interact with other users. The human operator is envisioned to provide control of the swarm at the level of commander’s intent.

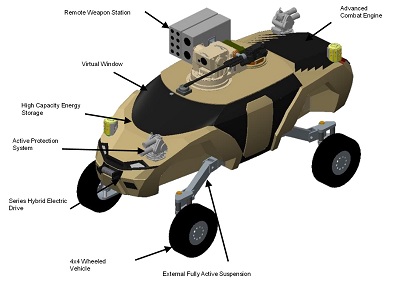

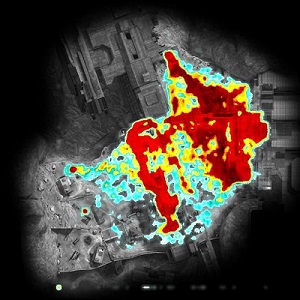

Visualization of Data

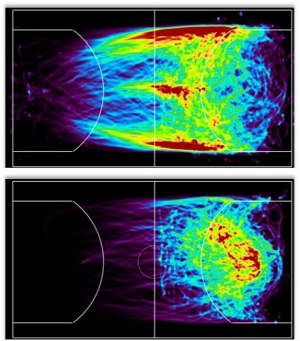

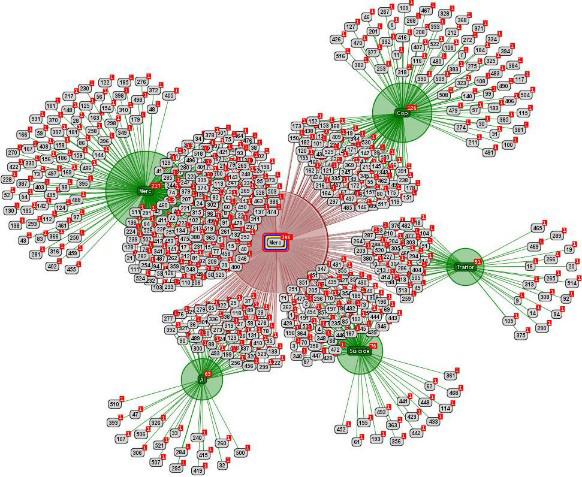

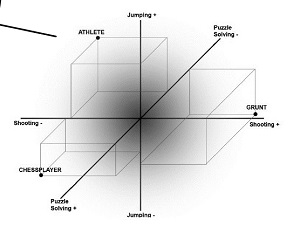

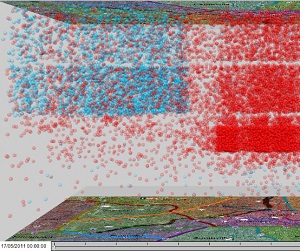

Part of the puzzle in comprehending information from large spatio-temporal data sets is balancing how the data is aggregated with how the results are visualized. Research needs to be done on how to present this data in a manner that allows insights to be derived. Again, cues may be taken from professional sports and video game analytics. A small sampling of what they are doing is shown in Figure 6 through Figure 10. The primary goal of the gaming industry is to maximize fun in game play by balancing play and eliminating quirks and cheating. Ideally, the game player is kept in a state of flow. Csikszentmihalyi (1990) describes flow as an optimal mental state where a person is completely occupied with a task that matches the person's skills, being neither too hard (leading to anxiety) nor too easy (leading to boredom). The goal of professional sports is to win games, but leagues as a whole also benefit if the action is more exciting and they can sell more tickets.

Figure 6: Example visualization of a heatmap of player kills using kernel density methodology from HALO Research. (Bungie, Inc., 2010)

Figure 7: Basketball Shooting vs. Passing for a Particular Player. This player primarily passes after moving across the perimeter. (Kowshik, Chang, & Maheswaran, 2012)

Figure 8: Cause of Death Clustering. The data relates role at death with cause of death in Fragile Alliance. (Nacke, Ambinder, Canossa, Mandryk, & Stach, 2009)

Figure 9: Player persona space. Drachen provides an example of persona mining from Tomb Raider Underworld based on three measures. Shooting: indicated by a high or low number of deaths inflicted on enemies and animals. Jumping: indicated by a high or low number of deaths caused by falling, drowning, or being crushed (navigation). Puzzle solving: indicated by a high or low number of requests for help to solve puzzles (interaction with the world). Three personas are identified: chess player, athlete, and grunt. (Canossa & Drachen, 2009)

Figure 10: Geospatial Communications Over Time. Using voice to text, it may be possible to ascertain not just when Soldiers are communicating, but also what they are communicating about. This image actually shows messages about a flu-like disease (red) and a stomach disease (light blue) over time (vertical axis).
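The kernel density heatmap in Figure 6 can be approximated with very little code. The sketch below uses a Gaussian kernel evaluated at grid cell centers; the function name `kde_heatmap`, the grid size, the bandwidth, and the event coordinates are hypothetical choices for illustration, and nothing here is claimed to match the method Bungie actually used.

```python
import math


def kde_heatmap(points, grid_w, grid_h, bandwidth):
    """Return grid[row][col] holding a 2-D Gaussian kernel density
    estimate evaluated at the center of each unit grid cell."""
    norm = 1.0 / (2 * math.pi * bandwidth ** 2 * len(points))
    grid = []
    for row in range(grid_h):
        cy = row + 0.5
        line = []
        for col in range(grid_w):
            cx = col + 0.5
            total = 0.0
            for px, py in points:
                d2 = (cx - px) ** 2 + (cy - py) ** 2
                total += math.exp(-d2 / (2 * bandwidth ** 2))
            line.append(norm * total)
        grid.append(line)
    return grid


# Hypothetical event locations (x, y) in map grid units
events = [(2.1, 2.3), (2.6, 1.9), (1.8, 2.7), (2.4, 2.4), (7.2, 6.1)]

grid = kde_heatmap(events, grid_w=10, grid_h=8, bandwidth=1.0)

# Locate the hottest cell as (row, col)
hottest = max(
    ((r, c) for r in range(8) for c in range(10)),
    key=lambda rc: grid[rc[0]][rc[1]],
)
print("hottest cell (row, col):", hottest)
```

The bandwidth controls how far each event "smears" across the map: a small value shows individual events, while a large value shows only broad regions of activity.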

Behavior Mining

Behavior mining offers the potential to discover behaviors that are too complex or subtle for human brains to detect. Recent advances in data mining may allow discovery of desirable and undesirable tactical behaviors, and of how the technical performance of equipment changes those tactics. Mining behaviors from data is extremely challenging because it requires an understanding of context, which computers notoriously handle poorly. DARPA's Mind's Eye program was able to develop the capability for visual intelligence by automating the ability to learn representations of action between objects in a scene. It may be possible in the gaming environment to similarly generate an understanding of the sequences of action between objects in a virtual environment that lead to successful or detrimental outcomes. Another challenge is that understanding true behavior requires an understanding of “Theory of Mind”. Theory of Mind is essentially the ability of a player to realize that an opponent also has beliefs, desires, intentions, and perspectives different from his or her own. This means the player is constantly guessing what is going on inside the opponent’s head. Finally, players in a crowd may behave quite differently according to their tastes and preferences, so assessing the validity of successful tactics may additionally require classifying player types (for example, a “Rambo” versus a chess player persona).

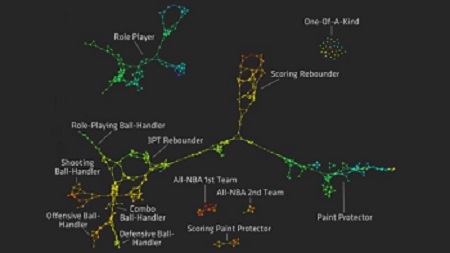

At the lowest end of this, data analysis is looking at play-based statistics. For example, Alagappan (2013) took statistics for players in the NBA and discovered there are not the traditional 5 positions in basketball, but actually 13 positions played by players (Figure 11). Alagappan pointed out the value created is that teams can build positions around players rather than trying to fit players into predetermined positions. Alagappan demonstrated how the Houston Rockets are built to shoot either near the basket or from the three-point range, with little in between. The San Antonio Spurs, in contrast, present a more balanced map, with their starting lineup shooting more equally from close in, from midrange and from long distance. Using exploratory military training environments, it may be possible to understand subtle roles played by squad members during operations.

Figure 11: The warmer colors signify outstanding performance in a skill, such as rebounding, while the line segments connect players with similar performance in several skills. Combining an area of mathematics generally thought of as very abstract—topology—with statistics, researchers were able to show that there are actually ten significant positions on a basketball team, rather than the usual five. Source: (Alagappan, 2013)
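The idea of linking players with similar performance, as in Figure 11, can be illustrated with a much simpler stand-in than the topological method the caption describes. The sketch below connects any two players whose stat vectors lie within a distance threshold and then reports the connected groups. It is not Alagappan's technique; the function name `similarity_groups`, the threshold, and the player stat vectors are all hypothetical.

```python
import math


def similarity_groups(stats, threshold):
    """Link players whose stat vectors are within `threshold`
    (Euclidean distance) and return the connected components
    as sorted lists of player names."""
    names = sorted(stats)
    neighbors = {n: set() for n in names}
    for i, a in enumerate(names):
        for b in names[i + 1:]:
            if math.dist(stats[a], stats[b]) <= threshold:
                neighbors[a].add(b)
                neighbors[b].add(a)

    groups, seen = [], set()
    for start in names:
        if start in seen:
            continue
        stack, component = [start], []
        while stack:  # depth-first walk over linked players
            node = stack.pop()
            if node in seen:
                continue
            seen.add(node)
            component.append(node)
            stack.extend(neighbors[node] - seen)
        groups.append(sorted(component))
    return groups


# Hypothetical normalized stats: (rebounding, assists, three-point shooting)
stats = {
    "A": (0.9, 0.1, 0.0),
    "B": (0.8, 0.2, 0.1),
    "C": (0.1, 0.9, 0.5),
    "D": (0.2, 0.8, 0.6),
    "E": (0.1, 0.2, 0.9),
}
groups = similarity_groups(stats, threshold=0.3)
print(groups)
```

The same approach could be tried on squad telemetry, with each Soldier described by a vector of behavioral measures instead of box-score statistics.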

Going beyond analysis that uses only play-by-play event-driven statistics (e.g., rebounds, shots), it is possible to look at spatio-temporal data and attempt to determine individual and group behaviors. Individual and group behaviors are intrinsically linked, as the locations, roles, and skills of teammates and adversaries affect the individual. Understanding how interaction unfolds over time is a key factor in understanding the dynamic aspects of player behavior. Roles in team-based sports may be short-lived as players switch roles in support of the team. For example, a soccer player who starts as a left wing may switch to right wing during a play. Recall that experts with massive amounts of experience build huge mental libraries of scripts and patterns. If computers are able to first recognize the context and goals of both the team and individuals, temporal patterns of behavior (pattern sequences) should be attainable. Presently this is the bleeding edge of research. Once those patterns have been related to desirable and undesirable game or training results, they can be used as a jumping-off point for further innovation or for training purposes. This includes optimizing the role equipment performance plays in tactics, techniques, and procedures.

Rajiv Maheswaran (2015) has been applying spatio-temporal pattern mining to professional basketball. In a TED talk, Maheswaran showed how a computer is able to recognize movements that only professionals typically know about. The pick-and-roll is of particular interest to coaches because it is one of the most important plays and has many subtle variations. Coaches want to know how to run it and how to defend it, since doing so is key to winning and losing most games. Identifying the subtle variations is what matters. Maheswaran’s software was used by almost all contenders for the NBA championship in 2015, which strongly suggests that computers can find things too subtle or complex for humans. Maheswaran states “it's very exciting because you have coaches who've been in the league for 30 years that are willing to take advice from a machine”.

Figure 12: Maheswaran examined the pick-and-roll using spatio-temporal data mining. Essentially, there are two offensive and two defensive players. The player with the ball can either take or reject. His teammate can either roll or pop. The player guarding the ball can either go over or under. His teammate can either show, play up to touch, or play soft, and together they can either switch or blitz. Movement is very messy and players wiggle a lot, so identifying these variations with very high accuracy, in both precision and recall, is difficult. Source: (Maheswaran, 2015).
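Precision and recall, the two accuracy measures named in the caption above, are straightforward to compute once a classifier's output can be compared against labels assigned by an expert. The following is a small worked sketch; the function name `precision_recall` and the label sequences are hypothetical and are not Maheswaran's data.

```python
def precision_recall(predicted, actual, positive):
    """Compute precision and recall for one class label.

    precision = true positives / all predicted positives
    recall    = true positives / all actual positives
    """
    pairs = list(zip(predicted, actual))
    tp = sum(1 for p, a in pairs if p == positive and a == positive)
    fp = sum(1 for p, a in pairs if p == positive and a != positive)
    fn = sum(1 for p, a in pairs if p != positive and a == positive)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


# Hypothetical labels: did the on-ball defender go "over" or "under"?
actual = ["over", "under", "over", "over", "under", "over"]
predicted = ["over", "over", "over", "under", "under", "over"]

precision, recall = precision_recall(predicted, actual, positive="over")
print(f"precision={precision:.2f} recall={recall:.2f}")
```

High precision means the detector rarely labels a play incorrectly; high recall means it rarely misses a play. A coach reviewing machine-tagged film needs both.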

Instead of just using pure spatio-temporal data mining and guessing at goals and motivations for player behaviors, it may be possible to create a condensed digest of decisive points in games and simply ask players (autonomous interviewing). In machine learning parlance, this provides labeled data. A recent demonstration by IBM (Smith, 2016) used Watson to generate a movie trailer for the movie Morgan in conjunction with 20th Century Fox. To create the trailer, IBM first trained Watson on 100 trailers from other movies. The training included a visual analysis to tag trailer segments with emotional labels based on objects in the scene, lighting, and framing. It also analyzed the musical score and tone of voices. Next, Watson “watched” the movie Morgan and suggested the 10 best segments of the movie to make a trailer that would keep audiences on the edge of their seats. The segments were then arranged and edited together. The results are viewable in the referenced article.

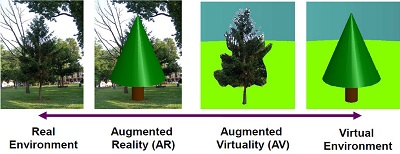

Augmented Reality

Augmented reality is simply a technology that superimposes a computer-generated image on a user's view of the real world. In comparison, virtual reality is the computer-generated simulation of a full three-dimensional environment that can be interacted with in a seemingly real or physical way. Figure 13 helps to clarify the difference.

Figure 13: Milgram’s Virtuality Continuum (Milgram, 1994). The mixed reality spectrum provides a framework for the difference between augmented reality (virtual objects in a real scene) and virtual environments (virtual objects in a virtual scene).

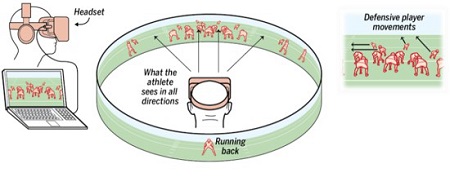

Virtual reality is not widespread in sports yet, but is becoming widespread in video gaming. Cellular phone display technology created the conditions for a breakthrough product, Oculus Rift, to provide a low-cost virtual reality display for gaming. At this point, in 2017, there is a wide variety of VR headsets to choose from, including HTC Vive, Sony PlayStation VR, and Samsung Gear VR. Oculus Rift has a resolution of 1080×1200 per eye, a 90 Hz refresh rate, and a 110° field of view. It also has integrated head tracking, so to look around in a scene, all you need to do is move your head. Virtual reality is already being explored for sports.

According to an article on the SXSports event in Austin (Tracy, 2015), Stanford is using virtual reality to review football plays and give players a break from the field. “We see it in high school, over-exertion and decreased participation,” assistant football coach Derek Belch observes. “Using virtual reality will give the players a break from two-a-days and help them mentally prepare for situations.” Chris Kluwe, a former NFL punter for the Vikings, states “On the field, everything is chaos. We have little sense of what is going on. AR and VR will help us see why the player didn’t make the play as if we were in his shoes.”

Figure 14: STRIVR Labs, a Palo Alto-based startup founded by Torrey Pines alum and former Stanford kicker Derek Belch. Source: (Loh, 2015)

Figure 15: Six NFL teams -- the Cardinals, Dallas Cowboys, Minnesota Vikings, Tampa Bay Buccaneers, San Francisco 49ers and New England Patriots -- are using virtual reality this season. Source: (Martin & Wire, 2015)

Figure 16: Augmented reality could show each player the play right in front of their face. It is even possible to overlay movements directly on the field. Source: (Kluwe, 2014)

Figure 17: Augmented reality could be used to highlight players that are open and even warn players about events out of their field of vision. Source: (Kluwe, 2014)

In his excellent TED Talk, “How augmented reality will change sports ... and build empathy,” former NFL punter Chris Kluwe (2014) describes augmented reality for football (Figure 16 and Figure 17). “Imagine you're a player walking back to the huddle, and you have your next play displayed right in front of your face on your clear plastic visor that you already wear right now. No more having to worry about forgetting plays. No more worrying about having to memorize your playbook. You just go out and react.” Kluwe goes on to describe, “augmented reality is not just an enhanced playbook. Augmented reality is also a way to take all that data and use it in real time to enhance how you play the game... You also have information from helmet sensors and accelerometers, technology that's being worked on right now. You take all that information, and you stream it to your players. The good teams stream it in a way that the players can use. The bad ones have information overload.” This clearly sounds like the problem the US Army is facing regarding data-to-decisions.

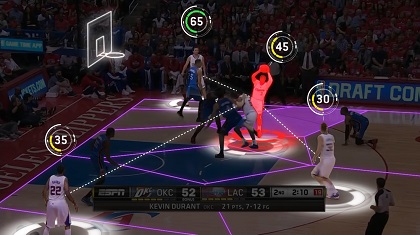

Maheswaran, whose basketball data mining was described earlier, provides another example. Maheswaran did not discuss the use of augmented reality, but it is easy to make that leap. He took spatio-temporal features for every shot over a season for all players. Some of the features include: Where is the shot? What is the angle to the basket? Where are the defenders standing? What are their distances? What are their angles? Where are all the players and what are their velocities? He is then able to identify the quality of the shot and the quality of the shooters. Imagine using augmented reality to display this data over each player so that players could use it immediately during the game, similar to what is shown in Figure 18.

Figure 18: This sample image from Second Spectrum shows the company's data visualizations for the NBA. They crunch game data — past and present — to show live statistics and information during games. Source: (Maheswaran, 2015)
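The shot features listed above (shot location, angle to the basket, defender distances) are simple geometry once player positions are tracked. The sketch below extracts three of them for a single shot. The function name `shot_features`, the coordinate convention (feet, basket at the origin), and the positions are assumptions made for illustration and do not reflect Second Spectrum's actual feature set or models.

```python
import math


def shot_features(shooter, basket, defenders):
    """Return basic geometric features for one shot.

    All positions are (x, y) tuples in the same units. The angle is
    measured in degrees from the +x axis to the basket-to-shooter line.
    """
    dx = shooter[0] - basket[0]
    dy = shooter[1] - basket[1]
    return {
        "distance_to_basket": math.hypot(dx, dy),
        "angle_deg": math.degrees(math.atan2(dy, dx)),
        "nearest_defender": min(math.dist(shooter, d) for d in defenders),
    }


# Hypothetical positions in feet, with the basket at the origin
features = shot_features(
    shooter=(12.0, 16.0),
    basket=(0.0, 0.0),
    defenders=[(10.0, 13.0), (3.0, 2.0)],
)
print(features)
```

A shot-quality model would take vectors like this for every shot in a season and learn how often comparable shots go in, which is the step the sketch deliberately leaves out.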

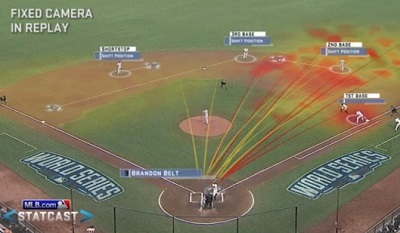

Another place where statistical data mining and optical tracking are affecting sports is sportscasting. Again, it doesn’t take much imagination to see how this data could also be displayed to players in real time using augmented reality, or after the game for learning. Major League Baseball debuted a new system called Statcast in April 2015 (see Figure 19 and Figure 20). It is described (Swish Analytics, 2015) as a state-of-the-art tracking technology capable of gathering and displaying "previously immeasurable" aspects of baseball games. It can measure and display: Pitching: pitch velocity, release point, time of pitch; Batting: bat velocity, launch angle, ball direction, hang time, travel distance, and projected landing point; Base running: first step times, acceleration, top speed, trajectory; and Defense: distance covered, efficiency (how efficient a player's route is in tracking a ball), transfer of the ball from glove to throwing hand, and the velocity of throws.

Figure 19: Statcast sheds light on two stunning catches. (Leach, 2015).

Figure 20: Another Statcast example of baseball data mining. Source: (Swish Analytics, 2015).
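Defensive measures such as distance covered, route efficiency, and top speed can be derived from nothing more than a sequence of tracked positions. The sketch below shows one way to do so. The function name `route_metrics`, the sampling interval, and the track are hypothetical, and route efficiency is assumed here to mean straight-line distance divided by distance actually covered, which may differ from Statcast's exact definition.

```python
import math


def route_metrics(track, dt):
    """Summarize a fielder's route.

    track: list of (x, y) positions sampled every `dt` seconds.
    Returns distance covered, route efficiency (straight-line distance
    divided by distance covered), and top speed between samples.
    """
    steps = [math.dist(a, b) for a, b in zip(track, track[1:])]
    covered = sum(steps)
    direct = math.dist(track[0], track[-1])
    return {
        "distance_covered": covered,
        "route_efficiency": direct / covered if covered else 1.0,
        "top_speed": max(steps) / dt if steps else 0.0,
    }


# Hypothetical track in feet, sampled every 0.5 seconds
track = [(0, 0), (3, 4), (6, 8), (6, 13)]
metrics = route_metrics(track, dt=0.5)
print(metrics)
```

The same three numbers apply equally well to a Soldier or vehicle moving toward an objective in a virtual training environment.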

Conclusion

Raw technology is the easiest thing for our adversaries to copy because they can purchase it on the global market. In fact, sometimes our adversaries will have better equipment because of the scale at which the U.S. Army fields equipment. The Army needs to figure out how to turn its size and bureaucracy into an asymmetric advantage. Learning optimal ways to use and tune future equipment along with training Soldiers does exactly that. New technologies available from anywhere in the world must be rapidly ingested, understood operationally, and fielded in such a way that we provide Soldiers overmatch capabilities. Again, to emphasize, a capability is “technology in the hands of Soldiers, who are trained how to use it, and can apply it on the battlefield.” (Perkins, 2014)

Perkins states (2015) “the technology used by Soldiers five years hence is likely to be unrecognizable to today’s Soldiers. If a chessboard was ever an accurate analogy for the global security environment, the board has been upended. Tomorrow’s Soldiers will play a different game.” The Army should be taking examples from the cutting edge of video gaming and professional sports to learn how to operationalize big data. There is a vast amount of research the Army should be investing in, particularly in spatio-temporal data mining. Across the mixed reality spectrum, we can find better ways to keep life bars from draining via training, better-tuned acquisitions, and implementing big data on the battlefield.

References

Alagappan, M. (2013). The new positions of basketball. TEDxSpokane. Retrieved from http://tedxtalks.ted.com/video/The-new-positions-of-basketball

Andrienko, G., Bak, P., Keim, D., & Wrobel, S. (2013). Visual Analytics of Movement. Springer-Verlag Berlin Heidelberg.

Bungie, Inc. (2010). Halo: Reach. Microsoft Game Studios.

Canossa, A., & Drachen, A. (2009). Play-personas: behaviours and belief systems in user-centred game design. Human-Computer Interaction–INTERACT, (pp. 510-523).

Csikszentmihalyi, M. (1990). Flow: The psychology of optimal experience. New York: Harper Perennial.

Jankun-Kelly, T., Elmqvist, N., & Bowman, B. (2012, Nov.). Toward Visualization for Games: Theory, Design Space, and Patterns. IEEE Transactions on Visualization & Computer Graphics, 18, 1956-1968.

Kluwe, C. (2014, Mar.). How augmented reality will change sports ... and build empathy. TED2014. Retrieved from https://www.ted.com/talks/chris_kluwe_how_augmented_reality_will_change_sports_and_build_empathy

Kowshik, G., Chang, Y.-H., & Maheswaran, R. (2012). Visualization of event-based motion-tracking sports data. University of Southern California.

Leach, M. (2015). Statcast sheds light on two stunning catches. MLB.com. Retrieved from http://m.mlb.com/news/article/79566520/statcast-sheds-light-on-amazing-catches-by-yasiel-puig-and-andrew-mccutchen

Loh, S. (2015, May 21). Virtual reality QB trainer a 'game changer'. San Diego Union-Tribune. Retrieved from http://www.sandiegouniontribune.com/news/2015/may/21/virtual-reality-quarterback-trainer-derek-belch/

Maheswaran, R. (2015, Mar.). The math behind basketball's wildest moves. TED2015. Retrieved from https://www.ted.com/talks/rajiv_maheswaran_the_math_behind_basketball_s_wildest_moves

Martin, J., & Wire, C. (2015, Sep. 9). Will virtual reality change the NFL? CNN.com. Retrieved from http://www.cnn.com/2015/09/09/us/nfl-virtual-reality-training/

Matthews, M. D. (2013). Head Strong: How Psychology is Revolutionizing War. Oxford University Press.

Milgram, P., & Kishino, F. (1994). A taxonomy of mixed reality visual displays. IEICE Transactions on Information and Systems, E77-D(12), 1321-1329.

Nacke, L., Ambinder, M., Canossa, A., Mandryk, R., & Stach, T. (2009). Game metrics and biometrics: the future of player experience research. Conference on Future Play. Algoma University.

Perkins, D. G. (2014, Nov. 4). Perkins shares leadership insights, discusses the future Army. Retrieved from https://www.youtube.com/watch?v=hz93q6OcHYU

Perkins, D. G. (2015, January–March). Interview: ‘Win in a Complex World’— But How? Army AL&T Magazine, pp. 106-115. Retrieved from http://usacac.army.mil/sites/default/files/documents/cact/GEN%20PERKINS%20HOW%20TO%20WIN%20IN%20COMPLEX%20WORLD.pdf

Roper, W. (2017, Jun. 5). The Future of Warfare - SXSW 2017. (N. Thompson, Interviewer) Retrieved from https://www.youtube.com/watch?v=GLh_ApVVBU4

Smith, J. R. (2016, August 31). IBM Research Takes Watson to Hollywood with the First “Cognitive Movie Trailer”. Retrieved from https://www.ibm.com/blogs/think/2016/08/cognitive-movie-trailer/

Smith, R. E., & Vogt, B. D. (2014, July). A Proposed 2025 Ground Systems, Systems Engineering Process. Acquisitions Research Journal, 21(3), 750-772. Retrieved from http://dau.dodlive.mil/files/2014/07/ARJ-70_Smith.pdf

Swish Analytics. (2015, Apr. 20). Statcast Debuts Tomorrow: What You Need to Know. Retrieved from https://swishanalytics.com/blog/2015-04-20/MLB-statcast-debuts-tomorrow

Tracy, P. (2015, March 18). SXSW Sports: The Future of Virtual and Augmented Reality in Sports. SportTechie. Retrieved Oct 5, 2017, from https://www.sporttechie.com/sxsw-sports-the-future-of-virtual-and-augmented-reality-in-sports/

Zamora, P. (2013, Mar. 4). Virtual training puts the 'real' in realistic environment. Retrieved from http://www.army.mil/article/97582/Virtual_training_puts_the__real__in_realistic_environment/