Combat Identification in Cyberspace

Robert Zager and John Zager

Cybersecurity threats represent one of the most serious national security, public safety, and economic challenges we face as a nation.

- 2010 National Security Strategy

On June 19, 2013, Under Secretary of Defense Frank Kendall, in testimony before the Subcommittee on Defense of the Senate Appropriations Committee, said that the F-35 Joint Strike Fighter had been hacked and the stolen data had been used by America’s adversaries.[1] This testimony merely confirmed earlier reports in The Washington Post that Chinese hackers had compromised more than two dozen major weapons systems.[2] Regrettably, these revelations are only the latest in a long series of breaches.[3]

The pervasiveness of cyberspace was emphasized in the July 2011 Department of Defense Strategy for Operating in Cyberspace:

Along with the rest of the U.S. government, the Department of Defense (DoD) depends on cyberspace to function. It is difficult to overstate this reliance; DoD operates over 15,000 networks and seven million computing devices across hundreds of installations in dozens of countries around the globe. DoD uses cyberspace to enable its military, intelligence, and business operations, including the movement of personnel and material and the command and control of the full spectrum of military operations.[4]

Section 1 of this paper discusses the objectives of our cyber-adversaries and their infiltration methods. Section 2 discusses the divergence of the U.S. definition of the cyber threat from the real threat and how this divergence plays to the strengths of our adversaries. Section 3 discusses the technical and human factors that our adversaries exploit. Section 4 concludes with a discussion of existing technical methods that can be adopted and extended to increase the effectiveness of U.S. cyber defenses.

Section 1. The Objectives of Cyberattacks

In this paper the term cyberattack is used to encompass all forms of unauthorized access to computer networks. The objectives of cyberattacks vary widely and include:

- Reconnaissance. The adversary can perform technical reconnaissance to test the victims’ defenses and possibly deploy malicious code that would lie dormant until activated at some future date.[5]

- Disinformation. In the attack against the AP wire service, the attackers used the reach and credibility of the AP to disseminate misinformation that the President of the United States was injured in a bombing.[6] This misinformation resulted in a $140 billion loss of stock market value in a matter of minutes.[7]

- Disruption. Disruption is a diverse category. In cases such as the LulzSec attack on the CIA, the engagement was a Distributed Denial of Service (DDoS) attack, the objective of which appeared to be rendering the systems of the victim effectively off-line.[8] In cases such as the FBI website defacement, attackers used their access to deface websites as a means of embarrassing the victim.[9] Disruption also occurs as a consequence of attacks with other objectives as systems are taken off-line for remediation.[10]

- Diversion. The objective of a cyberattack can be diversion of the victim’s cyber defenses.[11] Consistent with attacks in the physical world, a diversionary attack is an attack made to divert the defender’s resources away from the attacker’s primary objective.

- Theft. Stealing money is a common objective of cyberattacks. Other theft motivations include stealing user data that can be sold for money or used in subsequent attacks.

- Espionage. Exfiltration of data is a common objective of cyber-engagements. Data exfiltration is a particularly effective form of espionage. Commercial espionage has compromised commercially valuable information and valuable intellectual property.[12] Recent reports indicate the Chinese have stolen system designs for the F-35, the V-22, the C-17, the Patriot Advanced Capability-3, the Global Hawk Drone and many other critical weapons systems.[13]

- Sabotage. Data processing systems control real world processes. Compromised data processing systems can be used to create physical damage as was demonstrated with the Stuxnet virus which destroyed Iranian nuclear centrifuges.[14]

- Combinations. Attackers sometimes coordinate objectives. For example, in September of 2012 the attackers conducted espionage to obtain bank operations manuals. Using knowledge of internal controls obtained from the stolen documents, the attacker mimicked bank procedures and initiated and authorized invalid wire transfers. The wire transfers went unnoticed as IT was busy dealing with the attacker’s simultaneous diversionary DDoS attack.[15]

The methods used to accomplish the objectives are dependent upon the desired objective. In selecting an attack method, a key distinction between objectives is the location of the attack surface. If the attack surface is exposed to the public on the internet - for example an online customer facing application - then access is gained by typing the application’s URL into the web browser and subjecting the website to direct attack.

In the realm of state affiliated cyberattacks, the targeted systems are not exposed to the public on the internet; the targeted systems reside on one or more intranets protected from the world by a firewall and access control architecture. Placing the attack surface behind a well-conceived and well-implemented firewall and access control architecture makes it very difficult for attackers to access the attack surface using technical means.

While we may imagine that state affiliated attackers spend their time frantically typing away (or using clever automation tools to perform the task) to overcome defenses and gain access, like the Matthew Broderick character in the 1983 movie WarGames, the reality is that hackers do not do this. Instead, they enlist unwitting allies to defeat the firewalls and install command and control software which does the attacker’s bidding. Those allies are the authorized users of the intranet systems. How can a Chinese hacker convince a trusted employee to install command and control software? By deception. Can it be that easy? Mandiant, in its APT1 report, found that 100% of the Chinese cyberattacks used a specific form of deception termed “spearphishing” to compromise U.S. systems.[16]

Section 2. The Divergence of the Perceived Threat and the Real Threat

Figure 1 is the Cyber Threat Taxonomy set forth in the Defense Science Board Report “Resilient Military Systems and the Advanced Cyber Threat” published in January of 2013 (DSB Report).[17]

Figure 1- Cyber Threat Taxonomy

The Cyber Threat Taxonomy is built upon the correlation between the sophistication and cost of the attack, on the one hand, and its consequences, on the other. Quoting from the DSB Report:

- Tiers I and II attackers primarily exploit known vulnerabilities

- Tiers III and IV attackers are better funded and have a level of expertise and sophistication sufficient to discover new vulnerabilities in systems and to exploit them

- Tiers V and VI attackers can invest large amounts of money (billions) and time (years) to actually create vulnerabilities in systems, including systems that are otherwise strongly protected.

The DSB Report makes a series of recommendations that are based on the assumption that there is a direct relationship between the difficulty of perpetrating an exploit and its effectiveness. The detailed elaboration of the Cyber Threat Taxonomy (DSB Report Page 21 and following) draws on the Cold War experiences with Soviet operations, using analogies to the physical methods discussed in “Learning from the Enemy: The Gunman Project”.[18]

Similarly, analogies to kinetic operations undergird the information sharing strategies outlined by General Alexander[19] and the “Presidential Policy Directive – Critical Infrastructure Security and Resilience.”[20] The unstated assumption of information sharing strategies is that there is persistent information about attacks that can be used to counter an attack. However, the internet is, by design, anonymous and transitory; attackers can enhance that anonymity by using anonymizing networks and can route their traffic through intermediate nodes in countries disinclined to aid the United States. These factors make tracking an attack to its source a difficult, time consuming and problematic exercise which may make identification impossible. Enhancing their anonymity, attackers do not reuse attack elements that allow correlation and preemptive measures. For example, state affiliated actors use throw-away domains, so that attacks do not share a common origin.[21] Even in cases in which a lazy attacker uses common origins which are successfully located and rendered ineffective, attacking these sources merely inconveniences the attackers as they move to new attack points with a few keystrokes.[22]

Analogies between kinetic operations and cyberspace fail to account for the vast differences between kinetic operations and cyber operations.[23] Cyberadversaries do not need physical access to targeted systems. Cyberadversaries can be anywhere and nowhere (Pakistan has cyberattack infrastructure in the U.S.; India has theirs in Norway[24]). A cyberattacker can be in Beijing at one moment and then appear in New York City at the speed of light; or be in both places simultaneously. Cyberadversaries can easily hide their identity, masquerade as others, appear as multiple attackers, and disappear. Defenses that worked yesterday may not work today.[25] Overlapping layers of defenses may be ineffective. Identifying an attacker may be impossible.[26] Threats may go undetected for many years.[27] The differences between kinetic and cyber operations which are lost in analogy are the very factors that are exploited by cyberwarriors. This exploitation is most effectively deployed in spearphishing attacks.

Although neither technically advanced nor expensive to perpetrate, spearphishing is devastatingly effective. In its NetTraveler report, Kaspersky observed,

During our analysis, we did not see any advanced use of zero-day vulnerabilities or other malware techniques such as rootkits. It is therefore surprising to observe that such unsophisticated attacks can still be successful with high profile targets.[28]

The premise of the Cyber Threat Taxonomy, that lower tier attackers constitute a nuisance, is factually incorrect. As mentioned above, Mandiant attributed 100% of Chinese cyberattacks to spearphishing.[29] Trend Micro estimates that 91% of targeted attacks involve spearphishing.[30] Verizon estimates that more than 95% of all state-affiliated espionage used phishing as a means of compromising targeted systems.[31] General Alexander may have had such data in mind when he told the Senate that 80 percent of exploits and attacks that come in could be stopped by cyber hygiene.[32] The Department of Defense Strategy for Operating in Cyberspace makes the same cyber hygiene point that General Alexander made:

Most vulnerabilities of and malicious acts against DoD systems can be addressed through good cyber hygiene. Cyber hygiene must be practiced by everyone at all times; it is just as important for individuals to be focused on protecting themselves as it is to keep security software and operating systems up to date.[33]

The DSB Report acknowledges the importance of cyber hygiene[34] and makes specific recommendations about cyber hygiene and spearphishing:

DoD needs to develop training programs with evolving content that reflects the changing threat, increases individual knowledge, and continually reinforces policy. Training and education programs should include innovative and effective testing mechanisms to monitor and catch an individual’s breach of cyber policy. For example, DoD could conduct random, unannounced phishing attacks against DoD employees similar to one conducted in April of 2011 by a high tech organization to test the cyber security awareness of its workforce. Within a one week period the organization’s CIO sent a fake email to about 2000 of its employees. The fake email appeared to originate from the organization’s Chief Financial Officer and warned the employees that the organization had incorrectly reported information to the Internal Revenue Service that could result in an audit of their tax return. To determine if they were affected, they were asked to go to (click) to a particular website. Almost 50% of the sample clicked on the link and discovered that this had been a cyber security test. Each of them had failed. Had this been a real phishing attack, every one of these employees not only would have compromised their machines but would have put the entire organization at risk.

Following an initial education period, failures must have consequences to the person exhibiting unacceptable behavior. At a minimum the consequences should include removal of access to network devices until successful retraining is accomplished. Multiple failures should become grounds for dismissal. An effective training program should contribute to a decrease in the number of cyber security violations.[35]

… cybersecurity is fundamentally about an adversarial engagement. Humans must defend machines that are attacked by other humans using machines.

It is certainly true that if personnel would stop taking the spearphishing bait, a significant shift in the cybersecurity balance of forces would take place as 80,000 Chinese hackers would be rendered ineffective.[36] Discharging personnel who fall prey to spearphishing is an effective policy if, and only if, people can perform the task of avoiding spearphishers in an operational environment. If, on the other hand, people cannot be trained to avoid deceptive emails in an operational environment, then this policy will be ineffective in accomplishing the desired cyber hygiene and the spearphishers will continue to prevail.

Section 3. Spearphishing - The Infiltration Superhighway

In order to ascertain if people can avoid being deceived by spearphishers in an operational environment, one must understand:

- The spearphishing process;

- Email technology; and

- The human psychology of email interactions.

These critical cyber-factors were not analyzed in the DSB Report, by General Alexander, or in the Department of Defense Strategy for Operating in Cyberspace. As we shall see, the lessons of Cold War espionage and kinetic warfare are of scant value in countering cyber threats.[37]

A. The Spearphishing Process.

[Chinese spearphishing] has a well-defined attack methodology, honed over years and designed to steal massive quantities of intellectual property. They begin with aggressive spear phishing, proceed to deploy custom digital weapons, and end by exporting compressed bundles of files to China – before beginning the cycle again. They employ good English — with acceptable slang — in their socially engineered emails. They have evolved their digital weapons for more than seven years, resulting in continual upgrades as part of their own software release cycle. Their ability to adapt to their environment and spread across systems makes them effective in enterprise environments with trust relationships.[38]

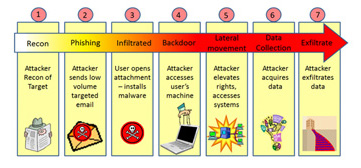

Figure 2 summarizes the life cycle of a spearphishing attack:

Figure 2- Spearphishing Attack Lifecycle

The recon phase is facilitated by vast amounts of data that are available on the internet. Hackers know how to use the internet to find out who works inside organizations. Social media was used to identify the CIA agent responsible for the successful bin Laden operation.[39] Finding targets is as easy as taking pictures of employees in the parking lot. Carnegie Mellon University researchers led by Alessandro Acquisti took photographs of student volunteers. Using facial recognition software on social networking sites, the researchers were able to identify 31% of the students by name. In another experiment, the Carnegie Mellon team was able to identify 10% of people who had posted their photos on public dating sites. The researchers have posted their research online, Faces of Facebook: Privacy in the Age of Augmented Reality.[40] The researchers report that they have been able to use profile photos and facial-recognition software to get details such as birth date and social security number predictions. Hackers use LinkedIn as another source of useful information about people and the places they work.[41] Hackers even set up fake social media accounts to collect information about people inside an organization.[42]

Social networking sites are not the only source of useful social engineering data. Companies unwittingly publish vast amounts of information on their websites and in their public document filings in the form of metadata that is attached to their files. The metadata can include user names, IP addresses and email addresses.[43]

B. Email Technology.

Email is, by design, two-faced. It presents one face to the worldwide email system. It presents a completely different face to the email recipient. The duplicitous design of email makes it ideally suited to undetectable deception. Our adversaries understand and exploit this duplicity. The U.S. does not understand this duplicity and ignores it. By failing to understand the email cyberspace, the U.S. has ceded the email attack surface to our adversaries.

The face email presents to the worldwide email delivery system conforms to published standards. That standards-based system is biased toward delivery: its purpose is to deliver email.[44] The technical requirements for creating a valid email are themselves published standards.[45] By conforming to those standards, hackers exploit this delivery bias to deliver small numbers of socially engineered emails.[46]

The face email presents to the email recipient is an interface system that displays the email, including sender identification data, to the recipient. The information which is displayed to the user is the information that was created by the sender and is substantially independent of the delivery face.[47]

Spearphishers exploit the independence of these faces to deliver email which masquerades as originating from a trusted sender. Figure 3 is an Outlook display showing a real email from the President. Figure 4 is the encoded email data for that same message. Figure 4 illustrates the two independent faces. The material in the blue boxes is the delivery face; the material in the red box is the display face.

Figure 3- White House Email As Displayed

Figure 4- White House Email as Encoded

The careful observer will note that the server which sent this email was NOT whitehouse.gov – it was sent from service.govdelivery.com. The recipient does not generally know this. Email security systems evaluate service.govdelivery.com, paying no heed to what the user sees. With minimal technical skills, a sender can pass the tests which are applied to the sending server -- at which point, the recipient is told the message came from whitehouse.gov.
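The independence of the two faces can be illustrated with a short Python sketch using the standard library `email` package. The message below is hypothetical: its header values are invented for illustration and are not copied from Figure 4. The sketch also assumes that the `Return-Path` header is an adequate stand-in for the delivery face, which is a simplification of what real mail servers evaluate.

```python
from email import message_from_string
from email.utils import parseaddr

# Hypothetical message; header values are illustrative only.
RAW = """\
Return-Path: <bounce@service.govdelivery.com>
Received: from mailer.service.govdelivery.com (mailer.service.govdelivery.com [192.0.2.10])
\tby mx.example.org; Tue, 06 Aug 2013 10:00:00 -0400
From: "The White House" <info@whitehouse.gov>
To: reader@example.org
Subject: Weekly Address

Body text.
"""

def domain_of(address: str) -> str:
    """Return the lowercased domain of an email address, or '' if there is none."""
    _, addr = parseaddr(address)
    _, sep, domain = addr.rpartition("@")
    return domain.lower() if sep else ""

def two_faces(raw: str) -> dict:
    """Extract the domain shown to the recipient and the domain used for delivery."""
    msg = message_from_string(raw)
    return {
        # Display face: the From header, written freely by the sender.
        "display_domain": domain_of(msg.get("From", "")),
        # Delivery face (assumption): approximated here by Return-Path.
        "delivery_domain": domain_of(msg.get("Return-Path", "")),
    }

faces = two_faces(RAW)
print(faces)
```

Nothing in the message format forces the two domains to agree, which is the gap a spearphisher relies on.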

C. The Human Psychology of Email.

Human beings can only detect what is perceptible. The spearphisher’s job is to conduct research which will enable the attacker to draft a socially engineered email which, when encountered by a human recipient in a real world situation, is perceived to contain highly relevant content that induces the recipient to open the message and take the call to action.

In cases of spearphishing emails in which the displayed information appears to be 100% authentic, training, reinforced with reprimand and dismissal, will not endow people with abilities which they do not possess.[48] As Mandiant observed, the Chinese employ hackers who are skilled in the English language in order to evade detection. U.S. cyber defenses (cyber hygiene) rely upon email recipients making correct email processing decisions; cyberattackers exploit this reliance as a means of infiltration.

[Email will] come in error-free, often using the appropriate jargon or acronyms for a given office or organization.

- Col. Gregory Conti, USA, Director, Cyber Research Center, U.S. Military Academy

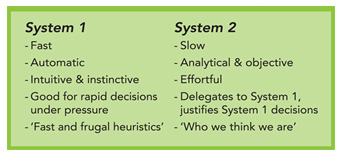

Not all spearphishing emails are perfectly constructed. Sometimes spearphishing emails do contain clues that betray their inauthenticity. However, errors are often offset by the spearphisher’s expertise in social engineering. Social engineering is the science of shaping a decision space to influence human behavior. Social engineering often exploits the fact that the human mind does not operate like a rational computer. Daniel Kahneman, the Nobel Prize winning psychologist, determined that human thinking uses two independent systems:[49]

Figure 5- The Two Systems of Human Thought

Social engineering targets the superficial thought processes of System 1. An example of social engineering that you encounter almost every day is the layout of a grocery store. The dairy products are in the back, forcing you to walk past tempting items that are not on your shopping list in order to reach your dairy destination. You must also pass through the check-out stand, which is populated with all manner of tantalizing items you did not intend to purchase. How often does your grocery receipt reflect purchases that are not on your shopping list? You have been manipulated by social engineering.

The power of social engineering was revealed in a startling example of how little people think about seemingly innocuous activities. Frank Abagnale, whose life was chronicled in the movie Catch Me If You Can, relates the story of one of his early scams.[50] He observed people using a night deposit box. The next day he rented a guard uniform, put an “out of order” sign on the night deposit box and stood next to the night deposit box. People gave him their bank deposits without questioning the absurdity of an inert box being out of order.

The power to influence decisions by shaping the decision space is forcefully demonstrated in the famous invisible gorilla experiment.[51] In this experiment, the subject is asked to observe a basketball being passed between players. While the players are passing the ball, a gorilla walks between the players. Many experimental subjects do not see the gorilla. By providing the right distractions, even items in plain sight can be made to disappear.

In a series of three experiments conducted at the United States Military Academy, cadets were subjected to spearphishing attacks.[52] The researchers found that training had little impact on the power of deception, despite obvious factual errors in the emails. To the extent training had any impact, those impacts wore off in about four hours. Other research has found similar results.[53]

The ineffectiveness of training strongly indicates that email processing is a System 1 activity. This indication was confirmed in research led by scientists at the University at Buffalo who found that the fast and frugal heuristics of email processing were:[54]

- Perceived Relevance;

- Urgency Clues; and

- Habit.

In the first Carronade experiment, the researchers noted that cadets sometimes felt that there was “something wrong” with the experimental emails, yet took the bait anyway. Quoting one cadet, “The e-mail looked suspicious but it was from an Army colonel, so I figured it must be legitimate.” The researchers took awareness as a hopeful sign that training could be effective. Students of Kahneman, and hackers, know that the twinge of uncertainty expressed by the cadets merely confirms that System 1 is a very powerful force that often ignores the better judgment of System 2.

The power of the triad of perceived relevance, urgency clues and habit overwhelming careful analysis can be seen in the infamous case of the fake AP tweet which falsely announced that President Obama had been injured in a bombing at the White House. Figure 6 is the highly targeted socially engineered email which was sent to an AP reporter.[55]

Figure 6- The AP Spearphishing Attack

When the reporter clicked the link, a chain of events was initiated which ultimately captured the AP Wire Service Twitter credentials and transmitted those credentials to the hackers. Using the real AP Wire Service Twitter credentials, hackers issued an AP Tweet that caused $140B in stock market losses.[56] The reporter clicked the link despite having been warned earlier in the day about potential spearphishing attacks and despite the defect that the “from” sender was not the signer of the text. While one can harshly judge the reporter who made the error, that error must be considered in light of the reporter’s operating environment. The reporter’s job is to quickly assimilate and report information about the news. On cursory examination, this was a routine email from a colleague which actuated the email response triad - Perceived Relevance, Urgency Clues, and Habit.

Section 4. Extending Existing Technical Methods to Fight Spearphishing

The attack surface is the individual person’s email inbox. This attack surface exploits the user who becomes a bridge between the publicly exposed interface (the employee’s email) and the intranet (which is accessible by the targeted employee). Exploiting email technology and social engineering, that bridge becomes an infiltration superhighway for the skilled spearphisher. When targeted by cyberattackers, the cyberdefender has a display which contains an undifferentiated mélange of real emails (which, incidentally, are not always perfectly drafted), error-free attacks, and attacks of sufficient quality to trigger System 1. Figure 7 is such an email interface.

Figure 7- The Indecipherable Inbox

This interface provides the cyberdefender with no effective way to differentiate friendly emails from foe emails. Looking at the two purported IRS emails, the recipient has no simple way to determine authenticity. One suspects that the highlighted email from a .com domain that discusses gambling losses might be a cyberattack. Training cannot enhance the information deficiencies of this display. This is analogous to a radar display that lacks Identification: Friend or Foe (IFF) discriminators. The ability of combatants to distinguish friend from foe is essential.[57]

It’s not that the Chinese have some unbeatable way of breaking into a network. What is innovative is their targeting.

- John Pescatore, director of emerging security trends at the SANS Institute

Yet, existing email standards have the seeds of an effective defensive strategy. Senders have two groups of settings to configure in order to send email. These settings serve as self-issued credentials. The first group of settings are the required DNS settings which identify the sending domain and the servers authorized by that domain to send email on its behalf. The second group of settings are the voluntary email authentication settings.

Referring back to Figure 4, the email is telling the user that it came from whitehouse.gov. Any sender can input and display whitehouse.gov in this field – that is the stock-in-trade of spearphishers. However, only the people who have access to the DNS settings of the real whitehouse.gov domain can configure the DNS and email authentication records of whitehouse.gov. Thus, only the real whitehouse.gov can publish valid whitehouse.gov email credentials on the internet.

Similarly, only the people who control the DNS and email authentication records of service.govdelivery.com can publish self-issued credentials for service.govdelivery.com. Email that fails authentication can be subjected to discrepancy processing. At first blush this appears to solve the fake email problem; however, the current system has four shortcomings which are illustrated by Figure 4:

- Authentication is tested against the sending servers, not the name displayed to recipients. Only servers really authorized by service.govdelivery.com can send email on behalf of service.govdelivery.com. This restriction does not prevent spearphishers from falsely displaying whitehouse.gov.

- Authentication is a self-issued credential. This permits spearphishers to establish their own sending domains and their own authorized servers and send fully authenticated email from nefarious domains which pass authentication and falsely display whitehouse.gov.

- The DMARC email standard can be used to prevent unauthorized use of the name which is displayed to the recipient, for example, whitehouse.gov. However, DMARC does not prevent the use of cousin domains, which are domains socially engineered to deceive recipients.[58] Should whitehouse.gov adopt DMARC, spearphishers are free to use confusingly similar names. This problem is exacerbated by the large number of top level domains. For example, DMARC would not stop a spearphisher from using whitehouse-gov.us. The problem is further aggravated by the 2009 extension of the email character set, which allows for the use of a large number of characters that are very similar, thereby expanding the character set for homographic spearphishing attacks.[59]

- Users do not know all the legitimate domains with which they interact, making them susceptible to deception by clever ruses.[60] Would anyone correctly guess that the real email address of a Silicon Valley Member of Congress uses the “address-verify.com” domain or that irs.gov is NOT the domain the IRS uses for email?

These four deficiencies result in the indecipherable display encountered in Figure 7.
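The cousin domain and homograph problems described in the third shortcoming can be made concrete with a small Python sketch. It is an illustration under stated assumptions, not a description of any deployed filter: the `PROTECTED` list, the function names, and the 0.8 similarity threshold are all hypothetical, and string similarity from the standard library `difflib` module stands in for whatever comparison a real system would use.

```python
import difflib
from typing import Iterable, Optional

# Hypothetical list of domains an organization has chosen to protect.
PROTECTED = ["whitehouse.gov", "service.govdelivery.com"]

def has_non_ascii(domain: str) -> bool:
    """True if the domain contains non-ASCII characters (a possible homograph)."""
    return not domain.isascii()

def cousin_of(candidate: str, protected: Iterable[str] = PROTECTED,
              threshold: float = 0.8) -> Optional[str]:
    """Return the protected domain that `candidate` resembles, or None.

    The threshold is an arbitrary illustrative value, not a tuned one.
    """
    cand = candidate.lower().rstrip(".")
    names = list(protected)
    if cand in names:
        return None  # an exact match is the real domain, not a cousin
    best, best_score = None, 0.0
    for name in names:
        score = difflib.SequenceMatcher(None, cand, name).ratio()
        if score > best_score:
            best, best_score = name, score
    return best if best_score >= threshold else None

# The third sample replaces the Latin "e" with the Cyrillic letter U+0435.
for d in ["whitehouse.gov", "whitehouse-gov.us", "whit\u0435house.gov", "example.com"]:
    print(ascii(d), "| resembles:", cousin_of(d), "| non-ASCII:", has_non_ascii(d))
```

A check like this can flag look-alike names for review, but it cannot tell a recipient which unfamiliar domains are legitimate, which is the fourth shortcoming.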

It is possible for email recipients to repurpose email authentication into an effective IFF system for email. This repurposing consists of three elements.[61]

The first element is a determination of sender trustworthiness by the recipient. For example, the recipient needs to determine if email that can be verified as coming from whitehouse.gov is trustworthy. The same inquiry can be made of email coming from facebook.com. This requires technical analysis of the domains’ self-issued credentials and a risk assessment. One could reasonably conclude that email which really comes from whitehouse.gov is trustworthy because, after detailed technical analysis, whitehouse.gov is determined to be careful and uses this domain in a trustworthy manner. On the other hand, anyone who has access to a computer can send email from facebook.com, rendering email from that domain suspect. Of the over 246 million domains which can send email[62], we know that whitehouse.gov email is friendly. An organization can analyze its own email traffic to construct a table of trusted email counterparts. Crucial on this list is the most commonly spoofed domain – the organization’s own domain which is used to send fake internal email.

The second element is to use the published authentication records to validate the domain that recipients see instead of the domain that operates the sending servers. In our whitehouse.gov example, the authenticity of the email to be tested is whitehouse.gov, instead of service.govdelivery.com.

The third and final element is to combine the trust determination of the first element with the displayed sender validation of the second element to modify the display as a user aid.

By combining these three elements users can readily distinguish trustworthy email really sent by the President from email which seeks to deceive by masquerading as being from the President.[63]
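The three elements can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation: `TRUSTED_DOMAINS`, `Inbound`, and `iff_label` are hypothetical names, and the set of authenticated domains is assumed to be supplied by an upstream authentication check that this sketch does not perform.

```python
from dataclasses import dataclass
from email.utils import parseaddr
from typing import FrozenSet

# Element one (assumption): a trust table compiled by the recipient's IT staff.
TRUSTED_DOMAINS = {"whitehouse.gov"}

@dataclass
class Inbound:
    from_header: str                   # the sender text the recipient sees
    authenticated_domains: FrozenSet[str]  # domains whose published credentials validated

def displayed_domain(from_header: str) -> str:
    """Return the lowercased domain shown to the recipient, or ''."""
    _, addr = parseaddr(from_header)
    _, sep, domain = addr.rpartition("@")
    return domain.lower() if sep else ""

def iff_label(mail: Inbound) -> str:
    """Label a message TRUSTED only if the displayed domain is verified and trusted."""
    shown = displayed_domain(mail.from_header)
    # Element two: validate the domain the recipient sees, not the sending server.
    display_verified = shown in mail.authenticated_domains
    # Element three: combine verification with the trust table to drive the display.
    if display_verified and shown in TRUSTED_DOMAINS:
        return "TRUSTED"
    return "UNVERIFIED"

real = Inbound('"The White House" <info@whitehouse.gov>',
               frozenset({"whitehouse.gov", "service.govdelivery.com"}))
spoof = Inbound('"The White House" <info@whitehouse.gov>',
                frozenset({"mailer.example.net"}))
cousin = Inbound("info@whitehouse-gov.us", frozenset({"whitehouse-gov.us"}))

for mail in (real, spoof, cousin):
    print(mail.from_header, "->", iff_label(mail))
```

Note that the cousin domain authenticates perfectly well against its own self-issued credentials; it is marked UNVERIFIED only because the recipient never placed it in the trust table.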

Figure 8 shows Figure 7 modified by combining these three elements into an email display which provides the cyberdefender (the email recipient) with cyber combat identification in the email space. In Figure 8, email from trusted counterparts is designated by a special trust icon in the icon column of the email listview. An additional trust indicator appears in the email itself below the subject and next to the sender.

Figure 8- IFF For Email

In Figure 7, the recipient was at the mercy of good luck in deciding which “IRS” emails to trust; probably trusting the one from the .gov domain about health care reform updates. In fact, the email from the .com domain about gambling was real! In Figure 8, it is easy to distinguish the real IRS email from the attack.

This IFF for email system leverages a feature of cyberspace that spearphishers cannot exploit for the deception they require: self-issued email credentials, although lacking inherent integrity, are subject to forensic validation, which allows IT experts employed by the recipient organization to determine whom to trust.

In the absence of this IFF for email system, personnel receiving spearphishing emails are left to guesswork in determining whether an email should be trusted. That guesswork, termed cyber hygiene in current defense parlance, takes place in a decision space that is manipulated by the attacker. With this IFF for email system, IT is able to provide personnel with real-time identification of trusted senders.

Users will decide which emails to trust. In the absence of IFF guidance from IT, the trust decision will be made in a decision space shaped by the cyberattackers seeking to deceive recipients. By adopting IFF functionality for email, users will have information that will improve cyber hygiene practices and help prevent infiltrations.

The views expressed herein are the views of the authors and do not reflect the views of Iconix, Inc. or Hofstra University.

End Notes

[1] Freedberg, Sydney. “Top Official Admits F-35 Stealth Fighter Secrets Stolen.” Breaking Defense, 20 June 2013, Web. 07 August 2013, http://breakingdefense.com/2013/06/20/top-official-admits-f-35-stealth-fighter-secrets-stolen/

[2] Nakashima, Ellen. “Confidential report lists U.S. weapons system designs compromised by Chinese cyberspies.” The Washington Post, 27 May 2013, Web. 07 August 2013. http://www.washingtonpost.com/world/national-security/confidential-report-lists-us-weapons-system-designs-compromised-by-chinese-cyberspies/2013/05/27/a42c3e1c-c2dd-11e2-8c3b-0b5e9247e8ca_story.html

[3] See, Brickey, Jon, et al. “The Case for Cyber.” Small Wars Journal, 13 September 2013, Web. 07 August 2013, http://smallwarsjournal.com/jrnl/art/the-case-for-cyber, for a list of recent cyber compromises.

[4] “Department of Defense Strategy for Operating in Cyberspace,” Department of Defense, July 2011, Web. 07 August 2013, http://www.defense.gov/news/d20110714cyber.pdf

[5] FBI Director Robert S. Mueller, III, “Remarks, RSA Cyber Security Conference, San Francisco, CA, March 01, 2012,” Web 2 April 2012, http://www.fbi.gov/news/speeches/combating-threats-in-the-cyber-world-outsmarting-terrorists-hackers-and-spies

[6] Romenesko, Jim. “AP Warned Staffers Just Before @AP Was Hacked,” jimromenesko.com, 23 April 2013, Web 24 April 2014, http://jimromenesko.com/2013/04/23/ap-warned-staffers-just-before-ap-was-hacked/

[7] Johnson, Steven. “Analysis: False White House tweet exposes instant trading dangers,” Reuters, 23 April 2013, web 23 April 2013, http://www.reuters.com/article/2013/04/23/us-usa-markets-tweet-idUSBRE93M1FD20130423

[8] Cluley, Graham. “CIA website brought down by DDoS attack, LulzSec hackers claim responsibility,” nakedsecurity, 15 June 2011, Web 1 August 2013, http://nakedsecurity.sophos.com/2011/06/15/cia-website-down-hackers-lulzsec/

[9] Durden, Tyler. “PBS Hacker LulzSec Takes On Archnemesis FBI, Defaces FBI-Affiliate Website In Protest Against NATO And Obama,” Zero Hedge, 6 May, 2011, Web August 1, 2013, http://www.zerohedge.com/article/pbs-hacker-lulzsec-takes-archnemesis-fbi-defaces-fbi-affiliate-website

[10] Munger, Frank. “It’s Back! (the Internet, that is),” knoxnews.com, 29 April 2011, Web 9 August 2013, http://knoxblogs.com/atomiccity/2011/04/29/its_back_the_internet_that_is/#more-4513, reporting that Oak Ridge National Laboratory was back on-line after being disconnected from the internet for two weeks due to remediation of a spearphishing attack.

[11] Vijayan, Jaikumar. “U.S. banks on high alert against cyberattacks.”, Computerworld, 20 September 2012, Web 3 August 2013, http://www.computerworld.com/s/article/9231515/U.S._banks_on_high_alert_against_cyberattacks

[12] “Foreign Spies Stealing U.S. Economic Secrets in Cyberspace,” Office of the National Counterintelligence Executive, October 2011, Web 15 November 2011, http://www.ncix.gov/publications/reports/fecie_all/Foreign_Economic_Collection_2011.pdf

[13] Nakashima (n 2)

[15] Vijayan (n 11)

[16] Mandiant Corporation. “APT1: Exposing One of China’s Cyber Espionage Units,” Mandiant Corporation, 18 February 2013, Web 26 February 2013, http://intelreport.mandiant.com/Mandiant_APT1_Report.pdf

[17] Defense Science Board. “Resilient Military Systems and the Advanced Cyber Threat,” Department of Defense, January 2013, Web March 1, 2013, http://www.acq.osd.mil/dsb/reports/ResilientMilitarySystems.CyberThreat.pdf

[18] Maneki, Sharon. “Learning from the Enemy: The Gunman Project,” Center for Cryptologic History, National Security Agency, 2009, Web 8 August 2013, http://www.nsa.gov/public_info/_files/cryptologic_histories/Learning_from_the_Enemy.pdf

[19] General Keith Alexander; testimony to U.S. Senate Armed Services Committee on U.S. Strategic Command and U.S. Cyber Command in Review of the Defense Authorization Request for Fiscal Year 2013; Tuesday, March 27, 2012, Web 8 August 2013, http://www.armed-services.senate.gov/Transcripts/2012/03%20March/12-19%20-%203-27-12.pdf

[20] President Barack Obama. “Presidential Policy Directive – Critical Infrastructure Security and Resilience, Presidential Policy Directive/PPD-21,” The White House, 12 February 2013, Web 8 August 2013, http://www.whitehouse.gov/the-press-office/2013/02/12/presidential-policy-directive-critical-infrastructure-security-and-resil

[21] FireEye. FireEye Advanced Threat Report – 1H2012, FireEye, 2012, Web 8 August 2013, http://www2.fireeye.com/get-advanced-threat-report-1h2012.html?x=FE_HERO, registration required

[22] Yadron, Danny and Gorman, Siobhan. “U.S., Firms Draw a Bead on Chinese Cyberspies,” Wall Street Journal, 13 July 2013, Page A1. “The hackers quickly changed their Internet signatures and resumed probing U.S. companies, the officials said. ‘Part of the problem is we can close this door and it’s fairly easy for them to open another door,’ a U.S. official said.”

[23] Miller, Matthew, et al. “Why Your Intuition About Cyber Warfare is Probably Wrong.” Small Wars Journal, 29 November 2012, Web 8 August 2013, http://smallwarsjournal.com/jrnl/art/why-your-intuition-about-cyber-warfare-is-probably-wrong

[24] Pakistan’s USA attack infrastructure, “Where There is Smoke, There is Fire: South Asian Cyber Espionage Heats Up,” ThreatConnect, 2 August 2013, Web 2 August 2013, http://www.threatconnect.com/news/where-there-is-smoke-there-is-fire-south-asian-cyber-espionage-heats-up/

India’s Norway attack infrastructure, “Unveiling an Indian Cyberattack Infrastructure,” Norman Shark, 17 March 2013, Web 10 August 2013, http://normanshark.com/hangoverreport/

[25] For a discussion of the ineffectiveness of defensive software, see, Perlroth, Nicole. “Outmaneuvered at Their Own Game, Antivirus Makers Struggle to Adapt,” The New York Times, 31 December 2012, Web 13 August 2013, http://www.nytimes.com/2013/01/01/technology/antivirus-makers-work-on-software-to-catch-malware-more-effectively.html?pagewanted=all&_r=0

For a discussion of the real world ineffectiveness of responses to zero day attacks, see, Bilge, Leyla and Dumitras, Tudor. “Before We Knew It: An Empirical Study of Zero-Day Attacks In The Real World,” Carnegie Mellon University, 12 April 2010, Web 13 August 2013, http://users.ece.cmu.edu/~tdumitra/public_documents/bilge12_zero_day.pdf

Hackers use the very defensive software relied upon for protection as part of the malware release cycle. See, Danchev, Dancho. “How cybercriminals apply Quality Assurance (QA) to their malware campaigns before launching them,” Webroot Threatblog, 14 June 2013, Web, 15 June 2013, http://blog.webroot.com/2013/06/14/how-cybercriminals-apply-quality-assurance-qa-to-their-malware-campaigns-before-launching-them/

Hackers keep current on defensive technologies. Hackers are already overcoming leading edge sandboxing strategies. See, Hwa, Chong Rong. “Trojan.APT.BaneChant: In-Memory Trojan That Observes for Multiple Mouse Clicks,” FireEye Blog, 1 April 2013, Web 6 June 2013, http://www.fireeye.com/blog/technical/malware-research/2013/04/trojan-apt-banechant-in-memory-trojan-that-observes-for-multiple-mouse-clicks.html

[26] Miller (n 23)

[27] “‘Red October’ Diplomatic Cyberattacks Investigation,” Securelist, 14 January 2013, Web 8 August 2013, http://www.securelist.com/en/analysis/204792262/Red_October_Diplomatic_Cyber_Attacks_Investigation, describes a widespread attack that went undetected for over 5 years.

[28] Global Research and Analysis Team. “The NetTraveler (AKA ‘Travnet’),” Kaspersky Lab, 2013, Web 5 June 2013, http://www.securelist.com/en/downloads/vlpdfs/kaspersky-the-net-traveler-part1-final.pdf, Page 4

[29] Mandiant (n 16)

[30] TrendLabs APT Research Team. “Spear-Phishing Email: Most Favored APT Attack Bait,” Trend Micro Incorporated, 2012, Web, 30 November, 2012, http://www.trendmicro.com/cloud-content/us/pdfs/security-intelligence/white-papers/wp-spear-phishing-email-most-favored-apt-attack-bait.pdf

[31] Verizon RISK Team. “The 2013 Data Breach Investigations Report,” Verizon, 2013, Web 3 May 2013, http://www.verizonenterprise.com/DBIR/2013/

[32] Alexander (n 19), Page 11.

[33] “Department of Defense Strategy for Operating in Cyberspace,” (n 4)

[34] DSB Report, (n 17) See, for example, Pages 10, 32, 64-66

[35] DSB Report (n 17) Pages 68-69.

[36] Rashid, Fahmida. “Chinese Hacking Group Linked to NetTraveler Espionage Campaign,” SecurityWeek, 4 June 2013, Web 1 August 2013, http://www.securityweek.com/chinese-hacking-group-linked-nettraveler-espionage-campaign

[37] See Miller, Matthew (n 23) for a detailed discussion of the inapplicability of kinetic operations concepts to cyber operations.

[38] Mandiant (n 16) Page 27.

[39] Gell, Aaron. “How a White House Flickr Fail Outed Bin Laden Hunter ‘CIA John’,” New York Observer, 12 July 2011, Web August 1, 2013, http://www.observer.com/2011/07/exclusive-bin-laden-hunter-cia-john-identified/

[40] Acquisti, Alessandro, et al. “Faces of Facebook: Privacy in the Age of Augmented Reality,” http://www.heinz.cmu.edu/~acquisti/face-recognition-study-FAQ/

[41] Higbee, Aaron, “Revealing some of the tactics behind a spear phishing attack,” ITProPortal, 24 December 2012, Web 1 August 2013, http://www.itproportal.com/2012/12/24/revealing-some-tactics-behind-spear-phishing-attack/

[42] Lewis, Jason. “How spies used Facebook to steal Nato chief’s details,” The Telegraph, 10 March 2012, Web 1 August 2013, http://www.telegraph.co.uk/technology/9136029/How-spies-used-Facebook-to-steal-Nato-chiefs-details.html

[43] Gilbert, David. “Gone Spearphishing: How Cybercriminals are Changing the Way They Work,” International Business Times, 11 July 2012, Web 1 August 2013 http://www.ibtimes.co.uk/articles/361877/20120711/spear-phishing-antivirus-firewall-cyber-criminals.htm

[46] http://iconixtruemark.files.wordpress.com/2012/06/defending-against-spoofed-domain-spearphishing-attacks-6-1-12.pdf

Return Path, “The Sender Reputation Report: Key Factors that Impact Email Deliverability,” available by registering at http://www.returnpath.net/landing/reputationfactors/index.php?campid=701000000005fQf

http://www.cisco.com/en/US/prod/collateral/vpndevc/ps10128/ps10339/ps10354/targeted_attacks.pdf

[47] There are circumstances in which technical sending data is displayed to recipients. For example, Gmail displays the name of the sending server. However, because people are unable to decode this technical information, this technical content has little, if any, impact on email interaction decisions. The AntiPhishing Working Group noted, “[T]he domain name itself usually does not matter to phishers, and a domain name of any meaning, or no meaning at all, in any TLD, will usually do. Instead, phishers almost always place brand names in subdomains or subdirectories. This puts the misleading string somewhere in the URL, where potential victims may see it and be fooled. Internet users are rarely knowledgeable enough to be able to pick out the ‘base’ or true domain name being used in a URL.” http://www.apwg.org/reports/APWG_GlobalPhishingSurvey_1H2011.pdf, page 14.

[48] Watch a video demonstration by former FBI Agent Eric Fiterman in which he creates an undetectable spearphishing email then uses that email to install and operate a remote command and control server at http://money.cnn.com/video/technology/2011/07/25/t-tt-hacking-phishing.cnnmoney/

[49] Kahneman, Daniel (25 October 2011). Thinking, Fast and Slow. Macmillan. ISBN 978-1-4299-6935-2.

[50] http://www.bizjournals.com/twincities/blog/banking/2012/12/crime-doesnt-pay-warns-catch-me-if.html

[51] Watch the gorilla experiment and other demonstrations of selective attention at: http://www.dansimons.com/videos.html

[52] Ferguson, Aaron J., PhD., “Fostering Email Security Awareness: The West Point Carronade.” Educause Quarterly, 1 (2005): 54-57. Jackson, James J., et al., “Building a University-wide Automated Information Assurance Awareness Exercise.” 35th ASEE/IEEE Frontiers in Education Conference T2E-11. Dodge Jr., Ronald C., et al., “Phishing for user security awareness.” Computers & Security, 26 (2007) 73-80.

[53] http://www.computerworld.com/s/article/9218214/Government_tests_show_security_s_people_problem?pageNumber=1

Roberts, Paul. “Three Quarters of Employees Duped By Phishing Scams.” http://threatpost.com/en_us/blogs/expert-three-quarters-employees-duped-phishing-scams-040711.

[54] Vishwanath, Arun, Ph.D., et al. “Why do people get phished?” Decision Support Systems 51 (2011) 576-586.

[55] Romenesko, (n 6)

[56] Johnson, (n 7)

[57] See Hesser, Robert and Rieken, Danny. “FORCEnet ENGAGEMENT PACKS: “OPERATIONALIZING” FORCEnet TO DELIVER TOMORROW’S NAVAL NETWORK-CENTRIC COMBAT REACH, CAPABILITIES . . . TODAY,” Naval Postgraduate School, December 2003, Web 19 June 2013, http://www.dtic.mil/dtic/tr/fulltext/u2/a420550.pdf, for a comprehensive discussion of the central role of combat identification in modern kinetic warfare.

[58] Section 1.2 of the DMARC standard discusses its limited utility in fighting spearphishing. http://www.ietf.org/id/draft-kucherawy-dmarc-base-01.txt

[60] Jakobsson & Ramzan. Crimeware: Understanding New Attacks and Defenses (Stoughton, MA; Pearson Education, Inc., 2008), 582.

[61] These innovations are covered by one or more of the following patents held by Iconix:

U.S. 7,413,085; U.S. 7,422,115; U.S. 7,487,213; U.S. 7,801,961; U.S. 8,073,910

[63] Email masquerading as from the President was used to compromise the National Science Foundation’s Office of Cyber Infrastructure. Krebs, Brian. “’White House’ eCard Dupes Dot-Gov Geeks,” KrebsonSecurity, 3 January 2011, Web 10 August 2013, http://krebsonsecurity.com/2011/01/white-house-ecard-dupes-dot-gov-geeks/